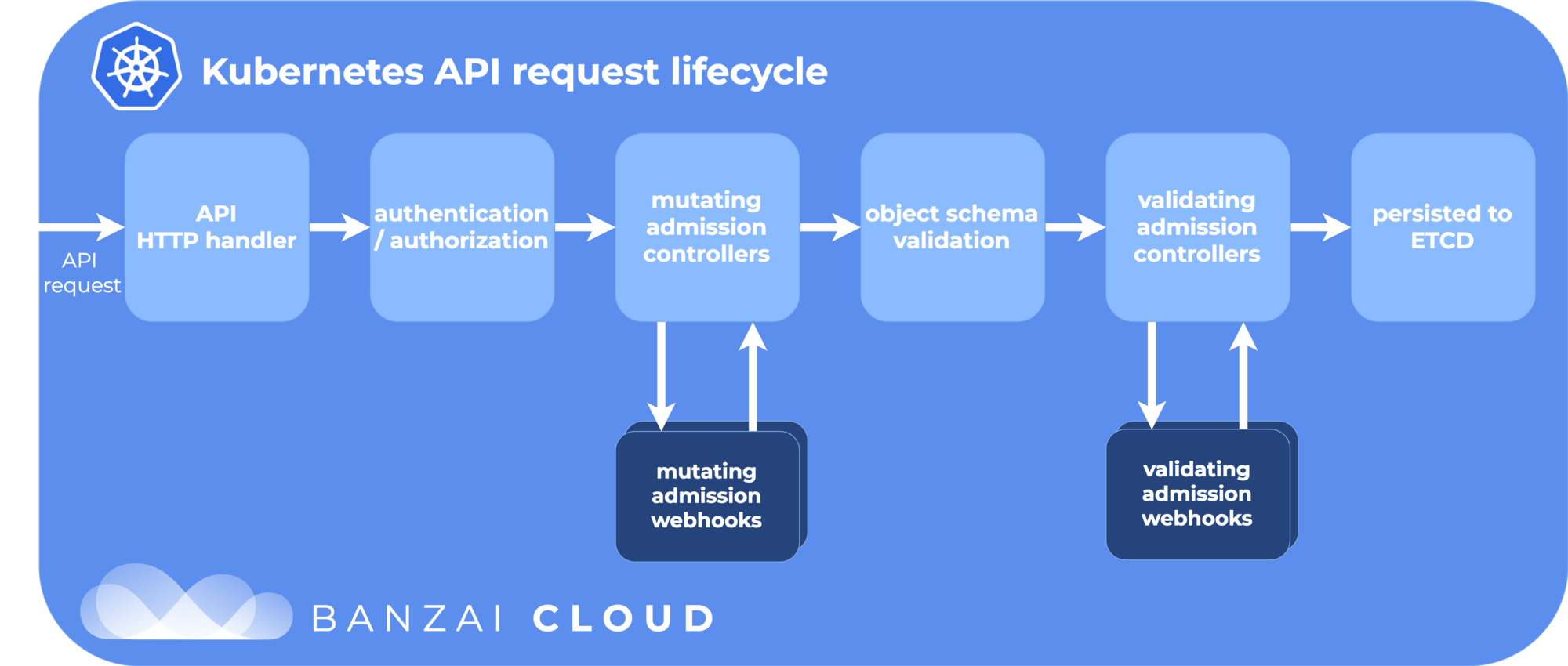

class: title, self-paced Advanced<br/>Kubernetes<br/>Concepts<br/> .nav[*Self-paced version*] .debug[ ``` ``` These slides have been built from commit: 0bf5d9b [shared/title.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/title.md)] --- class: title, in-person Advanced<br/>Kubernetes<br/>Concepts<br/><br/></br> .footnote[ **Slides[:](https://www.youtube.com/watch?v=h16zyxiwDLY) https://2020-09-skillsmatter.container.training/** ] <!-- WiFi: **Something**<br/> Password: **Something** **Be kind to the WiFi!**<br/> *Use the 5G network.* *Don't use your hotspot.*<br/> *Don't stream videos or download big files during the workshop*<br/> *Thank you!* --> .debug[[shared/title.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/title.md)] --- ## Intros - Hello! I'm Jérôme Petazzoni ([@jpetazzo](https://twitter.com/jpetazzo), [Enix SAS](https://enix.io/)) - The training will run for 4 hours, with short breaks every hour (and a slightly longer break in the middle) - Feel free to interrupt for questions at any time - *Especially when you see full screen container pictures!* - Live feedback, questions, help: Slack (or Zoom as a backup) .debug[[logistics.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/logistics.md)] --- ## A brief introduction - This was initially written by [Jérôme Petazzoni](https://twitter.com/jpetazzo) to support in-person, instructor-led workshops and tutorials - Credit is also due to [multiple contributors](https://github.com/jpetazzo/container.training/graphs/contributors) — thank you! - You can also follow along on your own, at your own pace - We included as much information as possible in these slides - We recommend having a mentor to help you ... - ... Or be comfortable spending some time reading the Kubernetes [documentation](https://kubernetes.io/docs/) ... - ... 
And looking for answers on [StackOverflow](http://stackoverflow.com/questions/tagged/kubernetes) and other outlets .debug[[k8s/intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/intro.md)] --- class: self-paced ## Hands on, you shall practice - Nobody ever became a Jedi by spending their lives reading Wookieepedia - Likewise, it will take more than merely *reading* these slides to make you an expert - These slides include *tons* of exercises and examples - They assume that you have access to a Kubernetes cluster - If you are attending a workshop or tutorial: <br/>you will be given specific instructions to access your cluster - If you are doing this on your own: <br/>the first chapter will give you various options to get your own cluster .debug[[k8s/intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/intro.md)] --- ## Accessing these slides now - We recommend that you open these slides in your browser: https://2020-09-skillsmatter.container.training/ - Use the arrow and page keys to move to the next/previous slide (up, down, left, right, page up, page down) - Type a slide number + ENTER to go to that slide - The slide number is also visible in the URL bar (e.g. .../#123 for slide 123) .debug[[shared/about-slides.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/about-slides.md)] --- ## Accessing these slides later - Slides will remain online so you can review them later if needed (let's say we'll keep them online at least 1 year, how about that?)
- You can download the slides from this URL: https://2020-09-skillsmatter.container.training/slides.zip (then open the file `kube-twodays.yml.html`) - You will find new versions of these slides on: https://container.training/ .debug[[shared/about-slides.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/about-slides.md)] --- ## These slides are open source - You are welcome to use, reuse, and share these slides - These slides are written in markdown - The sources of these slides are available in a public GitHub repository: https://github.com/jpetazzo/container.training - Typos? Mistakes? Questions? Feel free to hover over the bottom of the slide ... .footnote[.emoji[👇] Try it! The source file will be shown, and you can view, fork, and edit it on GitHub.] <!-- .exercise[ ```open https://github.com/jpetazzo/container.training/tree/master/slides/common/about-slides.md``` ] --> .debug[[shared/about-slides.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/about-slides.md)] --- class: extra-details ## Extra details - This slide has a little magnifying glass in the top left corner - This magnifying glass indicates slides that provide extra details - Feel free to skip them if: - you are in a hurry - you are new to this and want to avoid cognitive overload - you want only the most essential information - You can review these slides another time if you want; they'll be waiting for you ☺ .debug[[shared/about-slides.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/about-slides.md)] --- ## Chat room - We've set up a chat room that we will monitor during the workshop - Don't hesitate to use it to ask questions, get help, or share feedback - The chat room will also be available after the workshop - Join the chat room: Slack (or Zoom as a backup) - Say hi in the chat room!
.debug[[shared/chat-room-im.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/chat-room-im.md)] --- name: toc-module-1 ## Module 1 - [Deploying a sample application](#toc-deploying-a-sample-application) - [Kustomize](#toc-kustomize) - [Managing stacks with Helm](#toc-managing-stacks-with-helm) - [Helm chart format](#toc-helm-chart-format) - [Creating a basic chart](#toc-creating-a-basic-chart) - [Creating better Helm charts](#toc-creating-better-helm-charts) - [Helm secrets](#toc-helm-secrets) .debug[(auto-generated TOC)] --- name: toc-module-2 ## Module 2 - [Network policies](#toc-network-policies) - [Authentication and authorization](#toc-authentication-and-authorization) - [Static pods](#toc-static-pods) - [Pod Security Policies](#toc-pod-security-policies) - [OpenID Connect](#toc-openid-connect) - [The CSR API](#toc-the-csr-api) .debug[(auto-generated TOC)] --- name: toc-module-3 ## Module 3 - [Resource Limits](#toc-resource-limits) - [Defining min, max, and default resources](#toc-defining-min-max-and-default-resources) - [Namespace quotas](#toc-namespace-quotas) - [Limiting resources in practice](#toc-limiting-resources-in-practice) - [Checking pod and node resource usage](#toc-checking-pod-and-node-resource-usage) - [Cluster sizing](#toc-cluster-sizing) - [The Horizontal Pod Autoscaler](#toc-the-horizontal-pod-autoscaler) - [Extending the Kubernetes API](#toc-extending-the-kubernetes-api) - [Operators](#toc-operators) .debug[(auto-generated TOC)] --- name: toc-module-4 ## Module 4 - [Volumes](#toc-volumes) - [Managing configuration](#toc-managing-configuration) - [Stateful sets](#toc-stateful-sets) - [Running a Consul cluster](#toc-running-a-consul-cluster) - [Persistent Volumes Claims](#toc-persistent-volumes-claims) - [Local Persistent Volumes](#toc-local-persistent-volumes) - [Highly available Persistent Volumes](#toc-highly-available-persistent-volumes) .debug[(auto-generated TOC)] 
.debug[[shared/toc.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/toc.md)] --- class: in-person ## Connecting to our lab environment .exercise[ - Log into the first VM (`node1`) with your SSH client: ```bash ssh `user`@`A.B.C.D` ``` (Replace `user` and `A.B.C.D` with the user and IP address provided to you) <!-- ```bash for N in $(awk '/\Wnode/{print $2}' /etc/hosts); do ssh -o StrictHostKeyChecking=no $N true done ``` ```bash ### FIXME find a way to reset the cluster, maybe? ``` --> ] You should see a prompt looking like this: ``` [A.B.C.D] (...) user@node1 ~ $ ``` If anything goes wrong — ask for help! .debug[[shared/connecting.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/connecting.md)] --- class: in-person ## `tailhist` - The shell history of the instructor is available online in real time - Note the IP address of the instructor's virtual machine (A.B.C.D) - Open http://A.B.C.D:1088 in your browser and you should see the history - The history is updated in real time (using a WebSocket connection) - It should be green when the WebSocket is connected (if it turns red, reloading the page should fix it) .debug[[shared/connecting.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/connecting.md)] --- ## Doing or re-doing the workshop on your own? 
- Use something like [Play-With-Docker](http://play-with-docker.com/) or [Play-With-Kubernetes](https://training.play-with-kubernetes.com/) Zero setup effort, but environments are short-lived and might have limited resources - Create your own cluster (local or cloud VMs) Small setup effort; small cost; flexible environments - Create a bunch of clusters for you and your friends ([instructions](https://github.com/jpetazzo/container.training/tree/master/prepare-vms)) Bigger setup effort; ideal for group training .debug[[shared/connecting.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/connecting.md)] --- ## For a consistent Kubernetes experience ... - If you are using your own Kubernetes cluster, you can use [shpod](https://github.com/jpetazzo/shpod) - `shpod` provides a shell running in a pod on your own cluster - It comes with many tools pre-installed (helm, stern...) - These tools are used in many exercises in these slides - `shpod` also gives you completion and a fancy prompt .debug[[shared/connecting.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/connecting.md)] --- class: self-paced ## Get your own Docker nodes - If you already have some Docker nodes: great! - If not: let's get some with Play-With-Docker .exercise[ - Go to http://www.play-with-docker.com/ - Log in - Create your first node <!-- ```open http://www.play-with-docker.com/``` --> ] You will need a Docker ID to use Play-With-Docker. (Creating a Docker ID is free.)
.debug[[shared/connecting.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/connecting.md)] --- ## We will (mostly) interact with node1 only *These remarks apply only when using multiple nodes, of course.* - Unless instructed otherwise, **all commands must be run from the first VM, `node1`** - We will only check out/copy the code on `node1` - During normal operations, we do not need access to the other nodes - If we had to troubleshoot issues, we would use a combination of: - SSH (to access system logs, daemon status...) - Docker API (to check running containers and container engine status) .debug[[shared/connecting.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/connecting.md)] --- ## Terminals Once in a while, the instructions will say: <br/>"Open a new terminal." There are multiple ways to do this: - create a new window or tab on your machine, and SSH into the VM; - use screen or tmux on the VM and open a new window from there. Use whichever method you are most comfortable with. .debug[[shared/connecting.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/connecting.md)] --- ## Tmux cheatsheet [Tmux](https://en.wikipedia.org/wiki/Tmux) is a terminal multiplexer like `screen`. *You don't have to use it or even know about it to follow along. <br/> But some of us like to use it to switch between terminals.
<br/> It has been preinstalled on your workshop nodes.* - Ctrl-b c → create a new window - Ctrl-b n → go to next window - Ctrl-b p → go to previous window - Ctrl-b " → split window into top/bottom panes - Ctrl-b % → split window into left/right panes - Ctrl-b Alt-1 → arrange panes in columns - Ctrl-b Alt-2 → arrange panes in rows - Ctrl-b arrows → navigate between panes - Ctrl-b d → detach session - tmux attach → reattach to session .debug[[shared/connecting.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/connecting.md)] --- class: pic .interstitial[] --- name: toc-deploying-a-sample-application class: title Deploying a sample application .nav[ [Previous section](#toc-) | [Back to table of contents](#toc-module-1) | [Next section](#toc-kustomize) ] .debug[(automatically generated title slide)] --- # Deploying a sample application - We will connect to our new Kubernetes cluster - We will deploy a sample application, "DockerCoins" - That app features multiple micro-services and a web UI .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## Connecting to our Kubernetes cluster - Our cluster has multiple nodes named `node1`, `node2`, etc. - We will do everything from `node1` - We have SSH access to the other nodes, but won't need it (though we can use it for debugging, troubleshooting, etc.) .exercise[ - Log into `node1` - Check that all nodes are `Ready`: ```bash kubectl get nodes ``` ] .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## Cloning the repository - We will need to clone the training repository - It has the DockerCoins demo app ... - ...
as well as these slides, some scripts, more manifests .exercise[ - Clone the repository on `node1`: ```bash git clone https://github.com/jpetazzo/container.training ``` ] .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## Running the application Without further ado, let's start this application! .exercise[ - Apply the manifest for dockercoins: ```bash kubectl apply -f ~/container.training/k8s/dockercoins.yaml ``` ] .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## What's this application? -- - It is a DockerCoin miner! .emoji[💰🐳📦🚢] -- - No, you can't buy coffee with DockerCoins -- - How DockerCoins works: - generate a few random bytes - hash these bytes - increment a counter (to keep track of speed) - repeat forever! -- - DockerCoins is *not* a cryptocurrency (the only common points are "randomness", "hashing", and "coins" in the name) .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## DockerCoins in the microservices era - DockerCoins is made of 5 services: - `rng` = web service generating random bytes - `hasher` = web service computing hash of POSTed data - `worker` = background process calling `rng` and `hasher` - `webui` = web interface to watch progress - `redis` = data store (holds a counter updated by `worker`) - These 5 services are visible in the application's Compose file, [docker-compose.yml]( https://github.com/jpetazzo/container.training/blob/master/dockercoins/docker-compose.yml) .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## How DockerCoins works - `worker` invokes web service `rng` to generate random bytes - `worker` invokes web service `hasher` to hash these bytes - `worker` does this in an infinite loop - every 
second, `worker` updates `redis` to indicate how many loops were done - `webui` queries `redis`, then computes and displays the "hashing speed" in our browser *(See diagram on next slide!)* .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- class: pic  .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## Service discovery in container-land How does each service find out the address of the other ones? -- - We do not hard-code IP addresses in the code - We do not hard-code FQDNs in the code, either - We just connect to a service name, and container-magic does the rest (And by container-magic, we mean "a crafty, dynamic, embedded DNS server") .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## Example in `worker/worker.py`
```python
import requests
from redis import Redis

# "redis", "rng" and "hasher" are service names, resolved through DNS
redis = Redis("redis")

def get_random_bytes():
    r = requests.get("http://rng/32")
    return r.content

def hash_bytes(data):
    r = requests.post("http://hasher/",
                      data=data,
                      headers={"Content-Type": "application/octet-stream"})
    return r.text  # hasher replies with the hash as text
```
(Full source code available [here]( https://github.com/jpetazzo/container.training/blob/8279a3bce9398f7c1a53bdd95187c53eda4e6435/dockercoins/worker/worker.py#L17 )) .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## Show me the code!
- You can check the GitHub repository with all the materials of this workshop: <br/>https://github.com/jpetazzo/container.training - The application is in the [dockercoins]( https://github.com/jpetazzo/container.training/tree/master/dockercoins) subdirectory - The Compose file ([docker-compose.yml]( https://github.com/jpetazzo/container.training/blob/master/dockercoins/docker-compose.yml)) lists all 5 services - `redis` is using an official image from the Docker Hub - `hasher`, `rng`, `worker`, `webui` are each built from a Dockerfile - Each service's Dockerfile and source code is in its own directory (`hasher` is in the [hasher](https://github.com/jpetazzo/container.training/blob/master/dockercoins/hasher/) directory, `rng` is in the [rng](https://github.com/jpetazzo/container.training/blob/master/dockercoins/rng/) directory, etc.) .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## Our application at work - We can check the logs of our application's pods .exercise[ - Check the logs of the various components: ```bash kubectl logs deploy/worker kubectl logs deploy/hasher ``` ] .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- ## Connecting to the web UI - "Logs are exciting and fun!" (No-one, ever) - The `webui` container exposes a web dashboard; let's view it .exercise[ - Check the NodePort allocated to the web UI: ```bash kubectl get svc webui ``` - Open that in a web browser ] A drawing area should show up, and after a few seconds, a blue graph will appear. ??? 
:EN:- Deploying a sample app with YAML manifests :FR:- Lancer une application de démo avec du YAML .debug[[k8s/kubercoins.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kubercoins.md)] --- class: pic .interstitial[] --- name: toc-kustomize class: title Kustomize .nav[ [Previous section](#toc-deploying-a-sample-application) | [Back to table of contents](#toc-module-1) | [Next section](#toc-managing-stacks-with-helm) ] .debug[(automatically generated title slide)] --- # Kustomize - Kustomize lets us transform YAML files representing Kubernetes resources - The original YAML files are valid resource files (e.g. they can be loaded with `kubectl apply -f`) - They are left untouched by Kustomize - Kustomize lets us define *kustomizations* - A *kustomization* is conceptually similar to a *layer* - Technically, a *kustomization* is a file named `kustomization.yaml` (or a directory containing that file + additional files) .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## What's in a kustomization - A kustomization can do any combination of the following: - include other kustomizations - include Kubernetes resources defined in YAML files - patch Kubernetes resources (change values) - add labels or annotations to all resources - specify ConfigMaps and Secrets from literal values or local files (... And a few more advanced features that we won't cover today!) .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## A simple kustomization This kustomization features a Deployment, Service, and Ingress (in separate files), and a couple of patches (to change the number of replicas and the hostname used in the Ingress).
```yaml
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
patchesStrategicMerge:
- scale-deployment.yaml
- ingress-hostname.yaml
resources:
- deployment.yaml
- service.yaml
- ingress.yaml
```
On the next slide, let's see a more complex example ... .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] ---
```yaml
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
commonLabels:
  add-this-to-all-my-resources: please
patchesStrategicMerge:
- prod-scaling.yaml
- prod-healthchecks.yaml
bases:
- api/
- frontend/
- db/
- github.com/example/app?ref=tag-or-branch
resources:
- ingress.yaml
- permissions.yaml
configMapGenerator:
- name: appconfig
  files:
  - global.conf
  - local.conf=prod.conf
```
.debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Glossary - A *base* is a kustomization that is referred to by other kustomizations - An *overlay* is a kustomization that refers to other kustomizations - A kustomization can be both a base and an overlay at the same time (a kustomization can refer to another, which can refer to a third) - A *patch* describes how to alter an existing resource (e.g. to change the image or the scaling parameters of a Deployment) - A *variant* is the final outcome of applying bases + overlays (See the [kustomize glossary](https://github.com/kubernetes-sigs/kustomize/blob/master/docs/glossary.md) for more definitions!)
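To make the base / patch / variant vocabulary more concrete, here is a minimal Python sketch of how a variant *could* be computed. It is not Kustomize's implementation: the functions `merge_patch` and `build_variant` are hypothetical, patches are matched to base resources by kind and name, and the merge is simplified (maps are merged recursively, everything else, including lists, is replaced). A real strategic merge patch merges some lists by key (e.g. containers by `name`), and Kustomize's `commonLabels` also updates selectors, which this sketch does not do.

```python
import copy


def merge_patch(resource, patch):
    """Return a copy of `resource` with `patch` merged in.

    Simplified merge: dicts are merged recursively; any other value
    (including lists) in the patch replaces the original value.
    """
    result = copy.deepcopy(resource)
    for key, value in patch.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = merge_patch(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


def build_variant(base, patches, common_labels=None):
    """Apply matching patches (same kind and name) to copies of the base resources."""
    variant = []
    for resource in base:
        resource = copy.deepcopy(resource)  # never modify the base itself
        for patch in patches:
            same_kind = patch["kind"] == resource["kind"]
            same_name = patch["metadata"]["name"] == resource["metadata"]["name"]
            if same_kind and same_name:
                resource = merge_patch(resource, patch)
        if common_labels:
            # Only metadata.labels here; real commonLabels also touches selectors
            resource = merge_patch(resource, {"metadata": {"labels": common_labels}})
        variant.append(resource)
    return variant


# Hypothetical base: a Deployment and a Service, both named "worker"
base = [
    {"kind": "Deployment", "metadata": {"name": "worker"}, "spec": {"replicas": 1}},
    {"kind": "Service", "metadata": {"name": "worker"}, "spec": {"type": "ClusterIP"}},
]
# Hypothetical overlay patch, similar in spirit to scale-deployment.yaml
patches = [{"kind": "Deployment", "metadata": {"name": "worker"}, "spec": {"replicas": 10}}]

variant = build_variant(base, patches, common_labels={"env": "prod"})
print(variant[0]["spec"]["replicas"])  # 10
print(base[0]["spec"]["replicas"])     # 1 (the base is left untouched)
```

As with Kustomize itself, the base resources are never modified: the variant is built from copies, so the same base can feed several overlays.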
.debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## What Kustomize *cannot* do - By design, there are a number of things that Kustomize won't do - For instance: - using command-line arguments or environment variables to generate a variant - overlays can only *add* resources, not *remove* them - See the full list of [eschewed features](https://github.com/kubernetes-sigs/kustomize/blob/master/docs/eschewedFeatures.md) for more details .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Kustomize workflows - The Kustomize documentation proposes two different workflows - *Bespoke configuration* - base and overlays managed by the same team - *Off-the-shelf configuration* (OTS) - base and overlays managed by different teams - base is regularly updated by "upstream" (e.g. a vendor) - our overlays and patches should (hopefully!) apply cleanly - we may regularly update the base, or use a remote base .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Remote bases - Kustomize can fetch remote bases using the HashiCorp go-getter library - Examples: github.com/jpetazzo/kubercoins (remote git repository) github.com/jpetazzo/kubercoins?ref=kustomize (specific tag or branch) https://releases.hello.io/k/1.0.zip (remote archive) https://releases.hello.io/k/1.0.zip//some-subdir (subdirectory in archive) - See [hashicorp/go-getter URL format docs](https://github.com/hashicorp/go-getter#url-format) for more examples .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Managing `kustomization.yaml` - There are many ways to manage `kustomization.yaml` files, including: - web wizards like [Replicated Ship](https://www.replicated.com/ship/) - the `kustomize` CLI - opening the
file with our favorite text editor - Let's see these in action! .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## An easy way to get started with Kustomize - We are going to use [Replicated Ship](https://www.replicated.com/ship/) to experiment with Kustomize - The [Replicated Ship CLI](https://github.com/replicatedhq/ship/releases) has been installed on our clusters - Replicated Ship has multiple workflows; here is what we will do: - initialize a Kustomize overlay from a remote GitHub repository - customize some values using the web UI provided by Ship - look at the resulting files and apply them to the cluster .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Getting started with Ship - We need to run `ship init` in a new directory - `ship init` requires a URL to a remote repository containing Kubernetes YAML - It will clone that repository and start a web UI - Later, it can watch that repository and/or update from it - We will use the [jpetazzo/kubercoins](https://github.com/jpetazzo/kubercoins) repository (it contains all the DockerCoins resources as YAML files) .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## `ship init` .exercise[ - Create a new directory and change to it:
```bash
mkdir ~/kustomcoins
cd ~/kustomcoins
```
- Run `ship init` with the kubercoins repository:
```bash
ship init https://github.com/jpetazzo/kubercoins
```
<!-- ```wait Open browser``` --> ] .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Access the web UI - `ship init` tells us to connect to `localhost:8800` - We need to replace `localhost` with the address of our node (since we run on a remote machine) - Follow the steps in the web UI, and change one parameter (e.g.
set the number of replicas in the worker Deployment) - Complete the web workflow, and go back to the CLI .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Inspect the results - Look at the content of our directory - `base` contains the kubercoins repository + a `kustomization.yaml` file - `overlays/ship` contains the Kustomize overlay referencing the base + our patch(es) - `rendered.yaml` is a YAML bundle containing the patched application - `.ship` contains a state file used by Ship .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Using the results - We can `kubectl apply -f rendered.yaml` (on any version of Kubernetes) - Starting with Kubernetes 1.14, we can apply the overlay directly with: ```bash kubectl apply -k overlays/ship ``` - But let's not do that for now! - We will create a new copy of DockerCoins in another namespace .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Deploy DockerCoins with Kustomize .exercise[ - Create a new namespace: ```bash kubectl create namespace kustomcoins ``` - Deploy DockerCoins: ```bash kubectl apply -f rendered.yaml --namespace=kustomcoins ``` - Or, with Kubernetes 1.14 or later, we can also do this: ```bash kubectl apply -k overlays/ship --namespace=kustomcoins ``` ] .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Checking our new copy of DockerCoins - We can check the worker logs, or the web UI .exercise[ - Retrieve the NodePort number of the web UI: ```bash kubectl get service webui --namespace=kustomcoins ``` - Open it in a web browser - Look at the worker logs: ```bash kubectl logs deploy/worker --tail=10 --follow --namespace=kustomcoins ``` <!-- ```wait units of work done``` ```key ^C``` --> ] Note: it
might take a minute or two for the worker to start. .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Working with the `kustomize` CLI - This is another way to get started - General workflow: <br/>`kustomize create` to generate an empty `kustomization.yaml` file <br/>`kustomize edit add resource` to add Kubernetes YAML files to it <br/>`kustomize edit add patch` to add patches to said resources <br/>`kustomize build | kubectl apply -f-` or `kubectl apply -k .` to apply the result .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## `kubectl apply -k` - Kustomize has been integrated into `kubectl` - The `kustomize` tool is still needed if we want to use `create`, `edit`, ... - Also, warning: the Kustomize version embedded in `kubectl` is slightly older than the standalone `kustomize`! - In recent versions of `kustomize`, bases can be listed in `resources` (and `kustomize edit add base` will add its arguments to `resources`) - `kubectl apply -k` requires bases to be listed in `bases` (so after using `kustomize edit add base`, we need to fix `kustomization.yaml`) .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- ## Differences with Helm - Helm charts use placeholders `{{ like.this }}` - Kustomize "bases" are standard Kubernetes YAML - It is possible to use an existing set of YAML as a Kustomize base - As a result, writing a Helm chart is more work ... - ... But Helm charts are also more powerful; e.g. they can: - use flags to conditionally include resources or blocks - check if a given Kubernetes API group is supported - [and much more](https://helm.sh/docs/chart_template_guide/) ???
:EN:- Packaging and running apps with Kustomize :FR:- *Packaging* d'applications avec Kustomize .debug[[k8s/kustomize.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/kustomize.md)] --- class: pic .interstitial[] --- name: toc-managing-stacks-with-helm class: title Managing stacks with Helm .nav[ [Previous section](#toc-kustomize) | [Back to table of contents](#toc-module-1) | [Next section](#toc-helm-chart-format) ] .debug[(automatically generated title slide)] --- # Managing stacks with Helm - We created our first resources with `kubectl run`, `kubectl expose` ... - We have also created resources by loading YAML files with `kubectl apply -f` - For larger stacks, managing thousands of lines of YAML is unreasonable - These YAML bundles need to be customized with variable parameters (E.g.: number of replicas, image version to use ...) - It would be nice to have an organized, versioned collection of bundles - It would be nice to be able to upgrade/rollback these bundles carefully - [Helm](https://helm.sh/) is an open source project offering all these things! 
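The idea of one YAML bundle customized with variable parameters can be sketched in a few lines. This is only an illustration under stated assumptions: it uses Python's standard `string.Template` (with `$placeholders`) as a stand-in templating engine, whereas Helm itself uses Go templates with placeholders like `{{ .Values.replicas }}`. The template, the `render` helper, the image name, and the values are all hypothetical.

```python
from string import Template

# Hypothetical Deployment template; Helm would use Go templates instead,
# e.g. {{ .Values.replicas }} rather than $replicas
DEPLOYMENT_TEMPLATE = Template("""\
apiVersion: apps/v1
kind: Deployment
metadata:
  name: $name
spec:
  replicas: $replicas
  selector:
    matchLabels:
      app: $name
  template:
    metadata:
      labels:
        app: $name
    spec:
      containers:
      - name: $name
        image: $image:$tag
""")


def render(values):
    """Render the template; substitute() raises KeyError if a value is missing."""
    return DEPLOYMENT_TEMPLATE.substitute(values)


# Same bundle, two sets of parameters (number of replicas, image version)
staging = render({"name": "worker", "replicas": 1, "image": "example/worker", "tag": "v0.1"})
production = render({"name": "worker", "replicas": 10, "image": "example/worker", "tag": "v0.2"})
print(production)
```

Rendering the same template with different values yields different manifests; because `substitute()` raises `KeyError` when a placeholder has no value, a forgotten parameter fails loudly instead of producing incomplete YAML.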
.debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## CNCF graduation status - On April 30th 2020, Helm was the 10th project to *graduate* within the CNCF .emoji[🎉] (alongside Containerd, Prometheus, and Kubernetes itself) - This is an acknowledgement by the CNCF for projects that *demonstrate thriving adoption, an open governance process, <br/> and a strong commitment to community, sustainability, and inclusivity.* - See the [CNCF announcement](https://www.cncf.io/announcement/2020/04/30/cloud-native-computing-foundation-announces-helm-graduation/) and the [Helm announcement](https://helm.sh/blog/celebrating-helms-cncf-graduation/) .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Helm concepts - `helm` is a CLI tool - It is used to find, install, and upgrade *charts* - A chart is an archive containing templatized YAML bundles - Charts are versioned - Charts can be stored in private or public repositories .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Differences between charts and packages - A package (deb, rpm...) contains binaries, libraries, etc. - A chart contains YAML manifests (the binaries, libraries, etc. are in the images referenced by the chart) - On most distributions, a package can only be installed once (installing another version replaces the installed one) - A chart can be installed multiple times - Each installation is called a *release* - This makes it possible to install e.g. 10 instances of MongoDB (with potentially different versions and configurations) .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- class: extra-details ## Wait a minute ... *But, on my Debian system, I have Python 2 **and** Python 3.
<br/> Also, I have multiple versions of the Postgres database engine!* Yes! But they have different package names: - `python2.7`, `python3.8` - `postgresql-10`, `postgresql-11` Good to know: the Postgres package in Debian includes provisions to deploy multiple Postgres servers on the same system, but it's an exception (and it's a lot of work done by the package maintainer, not by the `dpkg` or `apt` tools). .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Helm 2 vs Helm 3 - Helm 3 was released [November 13, 2019](https://helm.sh/blog/helm-3-released/) - Charts remain compatible between Helm 2 and Helm 3 - The CLI is very similar (with minor changes to some commands) - The main difference is that Helm 2 uses `tiller`, a server-side component - Helm 3 doesn't use `tiller` at all, making it simpler (yay!) .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- class: extra-details ## With or without `tiller` - With Helm 3: - the `helm` CLI communicates directly with the Kubernetes API - it creates resources (deployments, services...) 
with our credentials - With Helm 2: - the `helm` CLI communicates with `tiller`, telling `tiller` what to do - `tiller` then communicates with the Kubernetes API, using its own credentials - This indirect model caused significant permissions headaches (`tiller` required very broad permissions to function) - `tiller` was removed in Helm 3 to simplify the security aspects .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Installing Helm - If the `helm` CLI is not installed in your environment, install it .exercise[ - Check if `helm` is installed: ```bash helm ``` - If it's not installed, run the following command: ```bash curl https://raw.githubusercontent.com/kubernetes/helm/master/scripts/get-helm-3 \ | bash ``` ] (To install Helm 2, replace `get-helm-3` with `get`.) .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- class: extra-details ## Only if using Helm 2 ... - We need to install Tiller and give it some permissions - Tiller is composed of a *service* and a *deployment* in the `kube-system` namespace - They can be managed (installed, upgraded...) with the `helm` CLI .exercise[ - Deploy Tiller: ```bash helm init ``` ] At the end of the install process, you will see: ``` Happy Helming! ``` .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- class: extra-details ## Only if using Helm 2 ... 
- Tiller needs permissions to create Kubernetes resources - In a more realistic deployment, you might create per-user or per-team service accounts, roles, and role bindings .exercise[ - Grant the `cluster-admin` role to the `kube-system:default` service account: ```bash kubectl create clusterrolebinding add-on-cluster-admin \ --clusterrole=cluster-admin --serviceaccount=kube-system:default ``` ] (Defining the exact roles and permissions on your cluster requires a deeper knowledge of Kubernetes' RBAC model. The command above is fine for personal and development clusters.) .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Charts and repositories - A *repository* (or repo for short) is a collection of charts - It's just a bunch of files (they can be hosted by a static HTTP server, or stored in a local directory) - We can add "repos" to Helm, giving them a nickname - The nickname is used when referring to charts on that repo (for instance, if we try to install `hello/world`, that means the chart `world` on the repo `hello`; and that repo `hello` might be something like https://blahblah.hello.io/charts/) .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Managing repositories - Let's check what repositories we have, and add the `stable` repo (the `stable` repo contains a set of official-ish charts) .exercise[ - List our repos: ```bash helm repo list ``` - Add the `stable` repo: ```bash helm repo add stable https://kubernetes-charts.storage.googleapis.com/ ``` ] Adding a repo can take a few seconds (it downloads the list of charts from the repo). It's OK to add a repo that already exists (it will merely update it).
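The "list of charts" that Helm downloads when adding a repo is an `index.yaml` file at the root of the repo URL. Below is an abridged sketch for the hypothetical `hello` repo mentioned above; the chart name, version, and URLs are illustrative only. A real index (which `helm repo index` can generate from a directory of chart tarballs) also records a `digest` and a `created` timestamp for each entry.

```yaml
# https://blahblah.hello.io/charts/index.yaml (hypothetical repo)
apiVersion: v1
entries:
  # One key per chart name; each key lists the available versions
  world:
    - apiVersion: v2
      name: world
      version: 1.0.0
      appVersion: "1.0"
      description: A hypothetical chart, for illustration only
      urls:
        # Where Helm would download the chart tarball from
        - https://blahblah.hello.io/charts/world-1.0.0.tgz
generated: "2020-09-01T00:00:00Z"
```

With such a file in place, `helm repo add hello https://blahblah.hello.io/charts/` would make the chart available as `hello/world`.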
.debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Search available charts - We can search available charts with `helm search` - We need to specify where to search (only our repos, or Helm Hub) - Let's search for all charts mentioning tomcat! .exercise[ - Search for tomcat in the repos that we have added: ```bash helm search repo tomcat ``` - Search for tomcat on the Helm Hub: ```bash helm search hub tomcat ``` ] [Helm Hub](https://hub.helm.sh/) indexes many repos, using the [Monocular](https://github.com/helm/monocular) server. .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Charts and releases - "Installing a chart" means creating a *release* - We need to name that release (or use the `--generate-name` flag to have Helm generate one for us) .exercise[ - Install the tomcat chart that we found earlier: ```bash helm install java4ever stable/tomcat ``` - List the releases: ```bash helm list ``` ] .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- class: extra-details ## Searching and installing with Helm 2 - Helm 2 doesn't support the Helm Hub - The `helm search` command only takes a search string argument (e.g.
`helm search tomcat`) - With Helm 2, the name is optional: `helm install stable/tomcat` will automatically generate a name `helm install --name java4ever stable/tomcat` will specify a name .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Viewing resources of a release - This specific chart labels all its resources with a `release` label - We can use a selector to see these resources .exercise[ - List all the resources created by this release: ```bash kubectl get all --selector=release=java4ever ``` ] Note: this `release` label wasn't added automatically by Helm. <br/> It is defined in that chart. In other words, not all charts will provide this label. .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Configuring a release - By default, `stable/tomcat` creates a service of type `LoadBalancer` - We would like to change that to a `NodePort` - We could use `kubectl edit service java4ever-tomcat`, but ... ... our changes would get overwritten next time we update that chart! - Instead, we are going to *set a value* - Values are parameters that the chart can use to change its behavior - Values have default values - Each chart is free to define its own values and their defaults .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Checking possible values - We can inspect a chart with `helm show` or `helm inspect` .exercise[ - Look at the README for tomcat: ```bash helm show readme stable/tomcat ``` - Look at the values and their defaults: ```bash helm show values stable/tomcat ``` ] The `values` may or may not have useful comments. The `readme` may or may not have (accurate) explanations for the values. (If we're unlucky, there won't be any indication about how to use the values!) 
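Instead of repeating `--set` flags, the values to override can be kept in a small YAML file that contains *only* the keys we want to change. A minimal sketch for the `NodePort` change discussed above (the file name `tomcat-values.yaml` is an arbitrary choice):

```yaml
# tomcat-values.yaml (arbitrary file name)
# Only list the keys to override; every other value keeps the chart's default.
service:
  type: NodePort
```

Passing this file with `--values tomcat-values.yaml` (or `-f tomcat-values.yaml`) to `helm install` or `helm upgrade` should have the same effect as `--set service.type=NodePort`, and the file can be kept under version control next to other configuration.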
.debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Setting values - Values can be set when installing a chart, or when upgrading it - We are going to update `java4ever` to change the type of the service .exercise[ - Update `java4ever`: ```bash helm upgrade java4ever stable/tomcat --set service.type=NodePort ``` ] Note that we have to specify the chart that we use (`stable/tomcat`), even if we just want to update some values. We can set multiple values. If we want to set many values, we can use `-f`/`--values` and pass a YAML file with all the values. All unspecified values will take the default values defined in the chart. .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- ## Connecting to tomcat - Let's check the tomcat server that we just installed - Note: its readiness probe has a 60s delay (so it will take 60s after the initial deployment before the service works) .exercise[ - Check the node port allocated to the service: ```bash kubectl get service java4ever-tomcat PORT=$(kubectl get service java4ever-tomcat -o jsonpath={..nodePort}) ``` - Connect to it, checking the demo app on `/sample/`: ```bash curl localhost:$PORT/sample/ ``` ] ??? 
:EN:- Helm concepts :EN:- Installing software with Helm :EN:- Helm 2, Helm 3, and the Helm Hub :FR:- Fonctionnement général de Helm :FR:- Installer des composants via Helm :FR:- Helm 2, Helm 3, et le *Helm Hub* .debug[[k8s/helm-intro.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-intro.md)] --- class: pic .interstitial[] --- name: toc-helm-chart-format class: title Helm chart format .nav[ [Previous section](#toc-managing-stacks-with-helm) | [Back to table of contents](#toc-module-1) | [Next section](#toc-creating-a-basic-chart) ] .debug[(automatically generated title slide)] --- # Helm chart format - What exactly is a chart? - What's in it? - What would be involved in creating a chart? (we won't create a chart, but we'll see the required steps) .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## What is a chart - A chart is a set of files - Some of these files are mandatory for the chart to be viable (more on that later) - These files are typically packed in a tarball - These tarballs are stored in "repos" (which can be static HTTP servers) - We can install from a repo, from a local tarball, or an unpacked tarball (the latter option is preferred when developing a chart) .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## What's in a chart - A chart must have at least: - a `templates` directory, with YAML manifests for Kubernetes resources - a `values.yaml` file, containing (tunable) parameters for the chart - a `Chart.yaml` file, containing metadata (name, version, description ...) 
- Let's look at a simple chart, `stable/tomcat` .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## Downloading a chart - We can use `helm pull` to download a chart from a repo .exercise[ - Download the tarball for `stable/tomcat`: ```bash helm pull stable/tomcat ``` (This will create a file named `tomcat-X.Y.Z.tgz`.) - Or, download + untar `stable/tomcat`: ```bash helm pull stable/tomcat --untar ``` (This will create a directory named `tomcat`.) ] .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## Looking at the chart's content - Let's look at the files and directories in the `tomcat` chart .exercise[ - Display the tree structure of the chart we just downloaded: ```bash tree tomcat ``` ] We see the components mentioned above: `Chart.yaml`, `templates/`, `values.yaml`. .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## Templates - The `templates/` directory contains YAML manifests for Kubernetes resources (Deployments, Services, etc.) - These manifests can contain template tags (using the standard Go template library) .exercise[ - Look at the template file for the tomcat Service resource: ```bash cat tomcat/templates/appsrv-svc.yaml ``` ] .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## Analyzing the template file - Tags are identified by `{{ ... }}` - `{{ template "x.y" }}` expands a [named template](https://helm.sh/docs/chart_template_guide/named_templates/#declaring-and-using-templates-with-define-and-template) (previously defined with `{{ define "x.y" }}...stuff...{{ end }}`) - The `.` in `{{ template "x.y" .
}}` is the *context* for that named template (so that the named template block can access variables from the local context) - `{{ .Release.xyz }}` refers to [built-in variables](https://helm.sh/docs/chart_template_guide/builtin_objects/) initialized by Helm (indicating the release name, namespace, revision, whether we are installing or upgrading ...; chart metadata such as its name and version is available in `.Chart`) - `{{ .Values.xyz }}` refers to tunable/settable [values](https://helm.sh/docs/chart_template_guide/values_files/) (more on that in a minute) .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## Values - Each chart comes with a [values file](https://helm.sh/docs/chart_template_guide/values_files/) - It's a YAML file containing a set of default parameters for the chart - The values can be accessed in templates with e.g. `{{ .Values.x.y }}` (corresponding to field `y` in map `x` in the values file) - The values can be set or overridden when installing or upgrading a chart: - with `--set x.y=z` (can be used multiple times to set multiple values) - with `--values some-yaml-file.yaml` (set a bunch of values from a file) - Charts following best practices will have values following specific patterns (e.g. having a `service` map that lets us set `service.type` etc.) .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## Other useful tags - `{{ if x }} y {{ end }}` includes `y` only if `x` is non-empty, i.e. not `false`, `0`, nil, or an empty string, list, or map (can be used for e.g. healthchecks, annotations, or even an entire resource) - `{{ range x }} y {{ end }}` iterates over `x`, evaluating `y` each time (the elements of `x` are assigned to `.` in the range scope) - `{{- x }}`/`{{ x -}}` will remove whitespace on the left/right - The whole [Sprig](http://masterminds.github.io/sprig/) library, with additions: `lower` `upper` `quote` `trim` `default` `b64enc` `b64dec` `sha256sum` `indent` `toYaml` ...
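To see several of these tags working together, here is a sketch of a template file. The file name and the values `annotations`, `logLevel`, and `extraSettings` are hypothetical; they are not part of any chart used in these slides.

```yaml
# templates/settings-configmap.yaml (hypothetical file, for illustration)
apiVersion: v1
kind: ConfigMap
metadata:
  name: {{ .Release.Name }}-settings
  {{- if .Values.annotations }}
  annotations:
    {{- toYaml .Values.annotations | nindent 4 }}
  {{- end }}
data:
  # Falls back to "info" when logLevel is not set in the values
  logLevel: {{ .Values.logLevel | default "info" | quote }}
  # One entry per key/value pair of the (hypothetical) extraSettings map
  {{- range $key, $value := .Values.extraSettings }}
  {{ $key }}: {{ $value | quote }}
  {{- end }}
```

The `{{-` markers trim the preceding newline and indentation, so the `if` and `range` lines leave no blank lines behind; `nindent` then adds back a newline plus 4 spaces of indentation. Rendering the chart locally with `helm template RELEASE-NAME CHART-DIRECTORY --set logLevel=debug` is a convenient way to check the output without installing anything.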
.debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## Pipelines - `{{ quote blah }}` can also be expressed as `{{ blah | quote }}` - With multiple arguments, `{{ x y z }}` can be expressed as `{{ z | x y }}` (the piped value becomes the last argument) - Example: `{{ .Values.annotations | toYaml | indent 4 }}` - transforms the map under `annotations` into a YAML string - indents it with 4 spaces (to match the surrounding context) - Pipelines are not specific to Helm, but a feature of Go templates (check the [Go text/template documentation](https://golang.org/pkg/text/template/) for more details and examples) .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## README and NOTES.txt - At the top level of the chart, it's a good idea to have a README - It will be viewable with e.g. `helm show readme stable/tomcat` - In the `templates/` directory, we can also have a `NOTES.txt` file - When the chart is installed (or upgraded), `NOTES.txt` is processed too (i.e. its `{{ ...
}}` tags are evaluated) - It gets displayed after the install or upgrade - It's a great place to generate messages to tell the user: - how to connect to the release they just deployed - any passwords or other things that we generated for them .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## Additional files - We can place arbitrary files in the chart (outside of the `templates/` directory) - They can be accessed in templates with `.Files` - They can be transformed into ConfigMaps or Secrets with `AsConfig` and `AsSecrets` (see [this example](https://helm.sh/docs/chart_template_guide/accessing_files/#configmap-and-secrets-utility-functions) in the Helm docs) .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- ## Hooks and tests - We can define *hooks* in our templates - Hooks are resources annotated with `"helm.sh/hook": NAME-OF-HOOK` - Hook names include `pre-install`, `post-install`, `test`, [and many more](https://helm.sh/docs/topics/charts_hooks/#the-available-hooks) - The resources defined in hooks are created at a specific point of the release lifecycle (e.g. before or after an install) - Hook execution is *synchronous* (if the resource is a Job or Pod, Helm will wait for its completion) - Hooks can be used for database migrations, backups, notifications, smoke tests ... - Hooks named `test` are executed only when running `helm test RELEASE-NAME` ???
:EN:- Helm charts format :FR:- Le format des *Helm charts* .debug[[k8s/helm-chart-format.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-chart-format.md)] --- class: pic .interstitial[] --- name: toc-creating-a-basic-chart class: title Creating a basic chart .nav[ [Previous section](#toc-helm-chart-format) | [Back to table of contents](#toc-module-1) | [Next section](#toc-creating-better-helm-charts) ] .debug[(automatically generated title slide)] --- # Creating a basic chart - We are going to show a way to create a *very simplified* chart - In a real chart, *lots of things* would be templatized (Resource names, service types, number of replicas...) .exercise[ - Create a sample chart: ```bash helm create dockercoins ``` - Move away the sample templates and create an empty template directory: ```bash mv dockercoins/templates dockercoins/default-templates mkdir dockercoins/templates ``` ] .debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- ## Exporting the YAML for our application - The following section assumes that DockerCoins is currently running - If DockerCoins is not running, see next slide .exercise[ - Create one YAML file for each resource that we need: .small[ ```bash while read kind name; do kubectl get -o yaml $kind $name > dockercoins/templates/$name-$kind.yaml done <<EOF deployment worker deployment hasher daemonset rng deployment webui deployment redis service hasher service rng service webui service redis EOF ``` ] ] .debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- ## Obtaining DockerCoins YAML - If DockerCoins is not running, we can also obtain the YAML from a public repository .exercise[ - Clone the kubercoins repository: ```bash git clone https://github.com/jpetazzo/kubercoins ``` - Copy the YAML 
files to the `templates/` directory: ```bash cp kubercoins/*.yaml dockercoins/templates/ ``` ] .debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- ## Testing our Helm chart .exercise[ - Let's install our Helm chart! ```bash helm install helmcoins dockercoins ``` (`helmcoins` is the name of the release; `dockercoins` is the local path of the chart) ] -- - Since the application is already deployed, this will fail: ``` Error: rendered manifests contain a resource that already exists. Unable to continue with install: existing resource conflict: kind: Service, namespace: default, name: hasher ``` - To avoid naming conflicts, we will deploy the application in another *namespace* .debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- ## Switching to another namespace - We need to create a new namespace (Helm 2 creates namespaces automatically; Helm 3 doesn't, unless we pass `--create-namespace`, available since Helm 3.2) - We need to tell Helm which namespace to use .exercise[ - Create a new namespace: ```bash kubectl create namespace helmcoins ``` - Deploy our chart in that namespace: ```bash helm install helmcoins dockercoins --namespace=helmcoins ``` ] .debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- ## Helm releases are namespaced - Let's try to see the release that we just deployed .exercise[ - List Helm releases: ```bash helm list ``` ] Our release doesn't show up! We have to specify its namespace (or switch to that namespace).
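Why are releases namespaced? With its default storage driver, Helm 3 records each revision of a release as a Secret in the release's namespace. Below is an abridged sketch of the Secret that revision 1 of our `helmcoins` release would get. The labels are the ones we expect Helm 3 to set, but they may vary slightly between Helm versions. The `data` field, which holds an encoded and compressed copy of the release, is omitted.

```yaml
# Abridged; compare with the output of:
#   kubectl get secret sh.helm.release.v1.helmcoins.v1 --namespace=helmcoins -o yaml
apiVersion: v1
kind: Secret
type: helm.sh/release.v1
metadata:
  # Naming pattern: sh.helm.release.v1.<release name>.v<revision>
  name: sh.helm.release.v1.helmcoins.v1
  namespace: helmcoins
  labels:
    owner: helm
    name: helmcoins
    status: deployed
    version: "1"
```

A command like `kubectl get secrets --namespace=helmcoins --selector=owner=helm` should list one such Secret per revision. Since `helm list` only looks in the current namespace by default, it doesn't find them.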
.debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- ## Specifying the namespace - Try again, with the correct namespace .exercise[ - List Helm releases in `helmcoins`: ```bash helm list --namespace=helmcoins ``` ] .debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- ## Checking our new copy of DockerCoins - We can check the worker logs, or the web UI .exercise[ - Retrieve the NodePort number of the web UI: ```bash kubectl get service webui --namespace=helmcoins ``` - Open it in a web browser - Look at the worker logs: ```bash kubectl logs deploy/worker --tail=10 --follow --namespace=helmcoins ``` ] Note: it might take a minute or two for the worker to start. .debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- ## Discussion, shortcomings - Helm (and Kubernetes) best practices recommend adding a number of labels (e.g. `app.kubernetes.io/name`, `helm.sh/chart`, `app.kubernetes.io/instance` ...) - Our basic chart doesn't have any of these - Our basic chart doesn't use any template tags - Does it make sense to use Helm in that case? - *Yes,* because Helm will: - track the resources created by the chart - save successive revisions, allowing us to roll back [Helm docs](https://helm.sh/docs/topics/chart_best_practices/labels/) and [Kubernetes docs](https://kubernetes.io/docs/concepts/overview/working-with-objects/common-labels/) have details about recommended labels and annotations.
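For reference, here is a sketch of how the recommended labels could be added to the `metadata` of each manifest in our basic chart, using only built-in objects. Charts generated by `helm create` factor the same labels into named templates in `_helpers.tpl` instead, so this is just one possible way to do it.

```yaml
metadata:
  # (name, namespace, etc. stay as they are)
  labels:
    app.kubernetes.io/name: {{ .Chart.Name }}
    app.kubernetes.io/instance: {{ .Release.Name }}
    app.kubernetes.io/version: {{ .Chart.AppVersion | quote }}
    # .Release.Service is set by Helm ("Helm" with Helm 3)
    app.kubernetes.io/managed-by: {{ .Release.Service }}
    # Chart name and version; "+" is not allowed in label values
    helm.sh/chart: {{ printf "%s-%s" .Chart.Name .Chart.Version | replace "+" "_" }}
```

Because these labels would be identical across all the resources of a release, a selector such as `app.kubernetes.io/instance=helmcoins` could then find everything that belongs to that release.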
.debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- ## Cleaning up - Let's remove that release before moving on .exercise[ - Delete the release (don't forget to specify the namespace): ```bash helm delete helmcoins --namespace=helmcoins ``` ] ??? :EN:- Writing a basic Helm chart for the whole app :FR:- Écriture d'un *chart* Helm simplifié .debug[[k8s/helm-create-basic-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-basic-chart.md)] --- class: pic .interstitial[] --- name: toc-creating-better-helm-charts class: title Creating better Helm charts .nav[ [Previous section](#toc-creating-a-basic-chart) | [Back to table of contents](#toc-module-1) | [Next section](#toc-helm-secrets) ] .debug[(automatically generated title slide)] --- # Creating better Helm charts - We are going to create a chart with the helper `helm create` - This will give us a chart implementing lots of Helm best practices (labels, annotations, structure of the `values.yaml` file ...) - We will use that chart as a generic Helm chart - We will use it to deploy DockerCoins - Each component of DockerCoins will have its own *release* - In other words, we will "install" that Helm chart multiple times (once per component of DockerCoins) .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Creating a generic chart - Rather than starting from scratch, we will use `helm create` - This will give us a basic chart that we will customize .exercise[ - Create a basic chart: ```bash cd ~ helm create helmcoins ``` ] This creates a basic chart in the directory `helmcoins`.
.debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## What's in the basic chart? - The basic chart will create a Deployment and a Service - Optionally, it will also include an Ingress - If we don't pass any values, it will deploy the `nginx` image - We can override many things in that chart - Let's try to deploy DockerCoins components with that chart! .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Writing `values.yaml` for our components - We need to write one `values.yaml` file for each component (hasher, redis, rng, webui, worker) - We will start with the `values.yaml` of the chart, and remove what we don't need - We will create 5 files: hasher.yaml, redis.yaml, rng.yaml, webui.yaml, worker.yaml - In each file, we want to have: ```yaml image: repository: IMAGE-REPOSITORY-NAME tag: IMAGE-TAG ``` .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Getting started - For component X, we want to use the image dockercoins/X:v0.1 (for instance, for rng, we want to use the image dockercoins/rng:v0.1) - Exception: for redis, we want to use the official image redis:latest .exercise[ - Write YAML files for the 5 components, with the following model: ```yaml image: repository: `IMAGE-REPOSITORY-NAME` (e.g. dockercoins/worker) tag: `IMAGE-TAG` (e.g. 
v0.1) ``` ] .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Deploying DockerCoins components - For convenience, let's work in a separate namespace .exercise[ - Create a new namespace (if it doesn't already exist): ```bash kubectl create namespace helmcoins ``` - Switch to that namespace: ```bash kns helmcoins ``` ] .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Deploying the chart - To install a chart, we can use the following command: ```bash helm install COMPONENT-NAME CHART-DIRECTORY ``` - We can also use the following command, which is *idempotent*: ```bash helm upgrade COMPONENT-NAME CHART-DIRECTORY --install ``` .exercise[ - Install the 5 components of DockerCoins: ```bash for COMPONENT in hasher redis rng webui worker; do helm upgrade $COMPONENT helmcoins --install --values=$COMPONENT.yaml done ``` ] .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- class: extra-details ## "Idempotent" - Idempotent = can be applied multiple times without changing the result beyond the first application (the word is commonly used in maths and computer science) - In this context, this means: - if the action (installing the chart) wasn't done, do it - if the action was already done, don't do it again (at most, update the existing release) - Ideally, when such an action fails, it can be retried safely (as opposed to, e.g., installing a new release each time we run it) - Other example: `kubectl apply -f some-file.yaml` .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Checking what we've done - Let's see if DockerCoins is working!
.exercise[ - Check the logs of the worker: ```bash stern worker ``` - Look at the resources that were created: ```bash kubectl get all ``` ] There are *many* issues to fix! .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Can't pull image - It looks like our images can't be found .exercise[ - Use `kubectl describe` on any of the pods in error ] - We're trying to pull `rng:1.16.0` instead of `rng:v0.1`! - Where does that `1.16.0` tag come from? .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Inspecting our template - Let's look at the `templates/` directory (and try to find the one generating the Deployment resource) .exercise[ - Show the structure of the `helmcoins` chart that Helm generated: ```bash tree helmcoins ``` - Check the file `helmcoins/templates/deployment.yaml` - Look for the `image:` parameter ] *The image tag references `{{ .Chart.AppVersion }}`. Where does that come from?* .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## The `.Chart` variable - `.Chart` is a map corresponding to the values in `Chart.yaml` - Let's look for `AppVersion` there! .exercise[ - Check the file `helmcoins/Chart.yaml` - Look for the `appVersion:` parameter ] (Yes, the case is different between the template and the Chart file.) 
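For comparison, here is an abridged sketch of the `Chart.yaml` generated by `helm create` (comments removed; exact defaults may differ between Helm versions). The `1.16.0` tag seen in the failing pods comes from `appVersion`, which templates read as `.Chart.AppVersion`.

```yaml
apiVersion: v2            # Helm 3 chart format
name: helmcoins           # available in templates as .Chart.Name
description: A Helm chart for Kubernetes
type: application
version: 0.1.0            # .Chart.Version: version of the chart itself
appVersion: "1.16.0"      # .Chart.AppVersion: version of the packaged app
```

Keeping `version` and `appVersion` separate lets a chart be re-released (for example, with template fixes) without pretending that the application itself changed.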
.debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Using the correct tags - If we change `AppVersion` to `v0.1`, it will change for *all* deployments (including redis) - Instead, let's change the *template* to use `{{ .Values.image.tag }}` (to match what we've specified in our values YAML files) .exercise[ - Edit `helmcoins/templates/deployment.yaml` - Replace `{{ .Chart.AppVersion }}` with `{{ .Values.image.tag }}` ] .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Upgrading to use the new template - Technically, we just made a new version of the *chart* - To use the new template, we need to *upgrade* the release to use that chart .exercise[ - Upgrade all components: ```bash for COMPONENT in hasher redis rng webui worker; do helm upgrade $COMPONENT helmcoins done ``` - Check how our pods are doing: ```bash kubectl get pods ``` ] We should see all pods "Running". But ... not all of them are READY. .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Troubleshooting readiness - `hasher`, `rng`, `webui` should show up as `1/1 READY` - But `redis` and `worker` should show up as `0/1 READY` - Why? 
.debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Troubleshooting pods - The easiest way to troubleshoot pods is to look at *events* - We can look at all the events on the cluster (with `kubectl get events`) - Or we can use `kubectl describe` on the objects that have problems (`kubectl describe` will retrieve the events related to the object) .exercise[ - Check the events for the redis pods: ```bash kubectl describe pod -l app.kubernetes.io/name=redis ``` ] It's failing both its liveness and readiness probes! .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Healthchecks - The default chart defines healthchecks doing HTTP requests on port 80 - That won't work for redis and worker (redis is not HTTP, and not on port 80; worker doesn't even listen) -- - We could remove or comment out the healthchecks - We could also make them conditional - This sounds more interesting, let's do that! 
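As an illustration of where such a conditional can lead, the condition could later be driven by a value instead of a constant, so that each component decides whether it gets probes. This sketch assumes a hypothetical boolean value named `healthcheck`, which is not part of the generated chart; the indentation must match the container spec in `deployment.yaml`.

```yaml
          # Render the probes only when the (hypothetical) value is true
          {{- if .Values.healthcheck }}
          livenessProbe:
            httpGet:
              path: /
              port: http
          readinessProbe:
            httpGet:
              path: /
              port: http
          {{- end }}
```

With this approach, the HTTP components (hasher, rng, webui) would set `healthcheck: true` in their values files, while `redis.yaml` and `worker.yaml` would leave it unset. A missing value is empty, so the `if` would skip the probes for them.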
.debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Conditionals - We need to enclose the healthcheck block with: `{{ if false }}` at the beginning (we can change the condition later) `{{ end }}` at the end .exercise[ - Edit `helmcoins/templates/deployment.yaml` - Add `{{ if false }}` on the line before `livenessProbe` - Add `{{ end }}` after the `readinessProbe` section (see next slide for details) ] .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- This is what the new YAML should look like (added lines in yellow): ```yaml ports: - name: http containerPort: 80 protocol: TCP `{{ if false }}` livenessProbe: httpGet: path: / port: http readinessProbe: httpGet: path: / port: http `{{ end }}` resources: {{- toYaml .Values.resources | nindent 12 }} ``` .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Testing the new chart - We need to upgrade all the services again to use the new chart .exercise[ - Upgrade all components: ```bash for COMPONENT in hasher redis rng webui worker; do helm upgrade $COMPONENT helmcoins done ``` - Check how our pods are doing: ```bash kubectl get pods ``` ] Everything should now be running! .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## What's next? - Is this working now? .exercise[ - Let's check the logs of the worker: ```bash stern worker ``` ] This error might look familiar ... The worker can't resolve `redis`. Typically, that error means that the `redis` service doesn't exist. 
.debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Checking services - What about the services created by our chart? .exercise[ - Check the list of services: ```bash kubectl get services ``` ] They are named `COMPONENT-helmcoins` instead of just `COMPONENT`. We need to change that! .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Where do the service names come from? - Look at the YAML template used for the services - It should be using `{{ include "helmcoins.fullname" . }}` - `include` indicates a *template block* defined somewhere else .exercise[ - Find where that `fullname` thing is defined: ```bash grep 'define.*fullname' helmcoins/templates/* ``` ] It should be in `_helpers.tpl`. We can look at the definition, but it's fairly complex ... .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Changing service names - Instead of that `{{ include }}` tag, let's use the name of the release - The name of the release is available as `{{ .Release.Name }}` .exercise[ - Edit `helmcoins/templates/service.yaml` - Replace the service name with `{{ .Release.Name }}` - Upgrade all the releases to use the new chart - Confirm that the services now have the right names ] .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Is it working now? - If we look at the worker logs, it appears that the worker is still stuck - What could be happening? -- - The redis service is not on port 80!
- Let's see how the port number is set - We need to look at both the *deployment* template and the *service* template .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Service template - In the service template, we have the following section: ```yaml ports: - port: {{ .Values.service.port }} targetPort: http protocol: TCP name: http ``` - `port` is the port on which the service is "listening" (i.e. to which our code needs to connect) - `targetPort` is the port on which the pods are listening - The `name` is not important (it's OK if it's `http` even for non-HTTP traffic) .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Setting the redis port - Let's add a `service.port` value to the redis release .exercise[ - Edit `redis.yaml` to add: ```yaml service: port: 6379 ``` - Apply the new values file: ```bash helm upgrade redis helmcoins --values=redis.yaml ``` ] .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Deployment template - If we look at the deployment template, we see this section: ```yaml ports: - name: http containerPort: 80 protocol: TCP ``` - The container port is hard-coded to 80 - We'll change it to use the port number specified in the values .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Changing the deployment template .exercise[ - Edit `helmcoins/templates/deployment.yaml` - The line with `containerPort` should be: ```yaml containerPort: {{ .Values.service.port }} ``` ] 
.debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Apply changes - Re-run the for loop to execute `helm upgrade` one more time - Check the worker logs - This time, it should be working! .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- ## Extra steps - We don't need to create a service for the worker - We can put the whole service block in a conditional (this will require additional changes in other files referencing the service) - We can set the webui to be a NodePort service - We can change the number of workers with `replicaCount` - And much more! ??? :EN:- Writing better Helm charts for app components :FR:- Écriture de *charts* composant par composant .debug[[k8s/helm-create-better-chart.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-create-better-chart.md)] --- class: pic .interstitial[] --- name: toc-helm-secrets class: title Helm secrets .nav[ [Previous section](#toc-creating-better-helm-charts) | [Back to table of contents](#toc-module-1) | [Next section](#toc-network-policies) ] .debug[(automatically generated title slide)] --- # Helm secrets - Helm can do *rollbacks*: - to previously installed charts - to previous sets of values - How and where does it store the data needed to do that? - Let's investigate! 
.debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## We need a release - We need to install something with Helm - Let's use the `stable/tomcat` chart as an example .exercise[ - Install a release called `tomcat` with the chart `stable/tomcat`: ```bash helm upgrade tomcat stable/tomcat --install ``` - Let's upgrade that release and change a value: ```bash helm upgrade tomcat stable/tomcat --set ingress.enabled=true ``` ] .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Release history - Helm stores successive revisions of each release .exercise[ - View the history for that release: ```bash helm history tomcat ``` ] Where does that come from? .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Investigate - Possible options: - local filesystem (no, because history is visible from other machines) - persistent volumes (no, Helm works even without them) - ConfigMaps, Secrets? .exercise[ - Look for ConfigMaps and Secrets: ```bash kubectl get configmaps,secrets ``` ] -- We should see a number of secrets with TYPE `helm.sh/release.v1`. .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Unpacking a secret - Let's find out what is in these Helm secrets .exercise[ - Examine the secret corresponding to the second revision of `tomcat`: ```bash kubectl describe secret sh.helm.release.v1.tomcat.v2 ``` (`v1` is the secret format; `v2` means revision 2 of the `tomcat` release) ] There is a key named `release`. .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Unpacking the release data - Let's see what's in this `release` thing!
.exercise[ - Dump the secret: ```bash kubectl get secret sh.helm.release.v1.tomcat.v2 \ -o go-template='{{ .data.release }}' ``` ] Secret data is encoded in base64. We need to decode that! .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Decoding base64 - We can pipe the output through `base64 -d` or use go-template's `base64decode` .exercise[ - Decode the secret: ```bash kubectl get secret sh.helm.release.v1.tomcat.v2 \ -o go-template='{{ .data.release | base64decode }}' ``` ] -- ... Wait, this *still* looks like base64. What's going on? -- Let's try one more round of decoding! .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Decoding harder - Just add one more base64 decode filter .exercise[ - Decode it twice: ```bash kubectl get secret sh.helm.release.v1.tomcat.v2 \ -o go-template='{{ .data.release | base64decode | base64decode }}' ``` ] -- ... OK, that was *a lot* of binary data. What should we do with it? .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Guessing data type - We could use `file` to figure out the data type .exercise[ - Pipe the decoded release through `file -`: ```bash kubectl get secret sh.helm.release.v1.tomcat.v2 \ -o go-template='{{ .data.release | base64decode | base64decode }}' \ | file - ``` ] -- Gzipped data! It can be decoded with `gunzip -c`.
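
---

class: extra-details

## Reproducing the encoding layers

To make the layering easier to see, here is a minimal sketch that rebuilds it locally, without a cluster. It assumes (consistently with the decoding steps above) that Helm gzips the release JSON and encodes it in base64, and that the Secret's `data` field then adds a second base64 layer. The tiny JSON document is a made-up stand-in for a real release record.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Made-up stand-in for a release record (real ones contain the whole chart)
release_json='{"name":"tomcat","version":2}'

# Encode: gzip, then base64 (Helm's layer), then base64 again (the Secret data layer)
encoded=$(printf '%s' "$release_json" | gzip -c | base64 | tr -d '\n' | base64 | tr -d '\n')

# Decode in reverse order, like the kubectl pipeline: base64 twice, then gunzip
decoded=$(printf '%s' "$encoded" | base64 -d | base64 -d | gunzip -c)

echo "$decoded"
```

Decoding has to peel the layers in reverse order, which is why the `kubectl` pipeline needs `base64decode` twice before `gunzip -c` can do anything useful.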
.debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Uncompressing the data - Let's uncompress the data and save it to a file .exercise[ - Rerun the previous command, but with `| gunzip -c > release-info`: ```bash kubectl get secret sh.helm.release.v1.tomcat.v2 \ -o go-template='{{ .data.release | base64decode | base64decode }}' \ | gunzip -c > release-info ``` - Look at `release-info`: ```bash cat release-info ``` ] -- It's a bundle of ~~YAML~~ JSON. .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Looking at the JSON If we inspect that JSON (e.g. with `jq keys release-info`), we see: - `chart` (contains the entire chart used for that release) - `config` (contains the values that we've set) - `info` (date of deployment, status messages) - `manifest` (YAML generated from the templates) - `name` (name of the release, so `tomcat`) - `namespace` (namespace where we deployed the release) - `version` (revision number within that release; starts at 1) The chart is in a structured format, but it's entirely captured in this JSON. .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- ## Conclusions - Helm stores the information for each revision of a release in a Secret, in the namespace of the release - The secret contains a JSON object (gzipped and encoded in base64) - It contains the manifests generated for that release - ... And everything needed to rebuild these manifests (including the full source of the chart, and the values used) - This allows arbitrary rollbacks, as well as tweaking values even without having access to the source of the chart (or the chart repo) used for deployment ??? 
:EN:- Deep dive into Helm internals :FR:- Fonctionnement interne de Helm .debug[[k8s/helm-secrets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/helm-secrets.md)] --- class: pic .interstitial[] --- name: toc-network-policies class: title Network policies .nav[ [Previous section](#toc-helm-secrets) | [Back to table of contents](#toc-module-2) | [Next section](#toc-authentication-and-authorization) ] .debug[(automatically generated title slide)] --- # Network policies - Namespaces help us to *organize* resources - Namespaces do not provide isolation - By default, every pod can contact every other pod - By default, every service accepts traffic from anyone - If we want this to be different, we need *network policies* .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## What's a network policy? A network policy is defined by the following things. - A *pod selector* indicating which pods it applies to e.g.: "all pods in namespace `blue` with the label `zone=internal`" - A list of *ingress rules* indicating which inbound traffic is allowed e.g.: "TCP connections to ports 8000 and 8080 coming from pods with label `zone=dmz`, and from the external subnet 4.42.6.0/24, except 4.42.6.5" - A list of *egress rules* indicating which outbound traffic is allowed A network policy can provide ingress rules, egress rules, or both. .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## How do network policies apply? - A pod can be "selected" by any number of network policies - If a pod isn't selected by any network policy, then its traffic is unrestricted (In other words: in the absence of network policies, all traffic is allowed) - If a pod is selected by at least one network policy, then all traffic is blocked ... ... 
unless it is explicitly allowed by one of these network policies .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- class: extra-details ## Traffic filtering is flow-oriented - Network policies deal with *connections*, not individual packets - Example: to allow HTTP (80/tcp) connections to pod A, you only need an ingress rule (You do not need a matching egress rule to allow response traffic to go through) - This also applies to UDP traffic (Allowing DNS traffic can be done with a single rule) - Network policy implementations use stateful connection tracking .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Pod-to-pod traffic - Connections from pod A to pod B have to be allowed by both pods: - pod A has to be unrestricted, or allow the connection as an *egress* rule - pod B has to be unrestricted, or allow the connection as an *ingress* rule - As a consequence: if a network policy restricts traffic going from/to a pod, <br/> the restriction cannot be overridden by a network policy selecting another pod - This prevents an entity managing network policies in namespace A (but without permission to do so in namespace B) from adding network policies giving them access to namespace B .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## The rationale for network policies - In network security, it is generally considered better to "deny all, then allow selectively" (The other approach, "allow all, then block selectively", makes it too easy to leave holes) - As soon as one network policy selects a pod, the pod enters this "deny all" logic - Further network policies can open additional access - Good network policies should be scoped as precisely as possible - In particular: make sure that the selector is not too broad (Otherwise, you end up affecting pods
that were otherwise well secured) .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Our first network policy This is our game plan: - run a web server in a pod - create a network policy to block all access to the web server - create another network policy to allow access only from specific pods .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Running our test web server .exercise[ - Let's use the `nginx` image: ```bash kubectl create deployment testweb --image=nginx ``` <!-- ```bash kubectl wait deployment testweb --for condition=available ``` --> - Find out the IP address of the pod (the second command stores it in `$IP`, which we will use next): ```bash kubectl get pods -o wide -l app=testweb IP=$(kubectl get pods -l app=testweb -o json | jq -r '.items[0].status.podIP') ``` - Check that we can connect to the server: ```bash curl $IP ``` ] The `curl` command should show us the "Welcome to nginx!" page. .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Adding a very restrictive network policy - The policy will select pods with the label `app=testweb` - It will specify an empty list of ingress rules (matching nothing) .exercise[ - Apply the policy in this YAML file: ```bash kubectl apply -f ~/container.training/k8s/netpol-deny-all-for-testweb.yaml ``` - Check if we can still access the server: ```bash curl $IP ``` <!-- ```wait curl``` ```key ^C``` --> ] The `curl` command should now time out.
.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Looking at the network policy This is the file that we applied: ```yaml kind: NetworkPolicy apiVersion: networking.k8s.io/v1 metadata: name: deny-all-for-testweb spec: podSelector: matchLabels: app: testweb ingress: [] ``` .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Allowing connections only from specific pods - We want to allow traffic from pods with the label `run=testcurl` - Reminder: this label is automatically applied when we do `kubectl run testcurl ...` .exercise[ - Apply another policy: ```bash kubectl apply -f ~/container.training/k8s/netpol-allow-testcurl-for-testweb.yaml ``` ] .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Looking at the network policy This is the second file that we applied: ```yaml kind: NetworkPolicy apiVersion: networking.k8s.io/v1 metadata: name: allow-testcurl-for-testweb spec: podSelector: matchLabels: app: testweb ingress: - from: - podSelector: matchLabels: run: testcurl ``` .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Testing the network policy - Let's create pods with, and without, the required label .exercise[ - Try to connect to testweb from a pod with the `run=testcurl` label: ```bash kubectl run testcurl --rm -i --image=centos -- curl -m3 $IP ``` - Try to connect to testweb with a different label: ```bash kubectl run testkurl --rm -i --image=centos -- curl -m3 $IP ``` ] The first command will work (and show the "Welcome to nginx!" page). The second command will fail and time out after 3 seconds. (The timeout is obtained with the `-m3` option.) 
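
---

class: extra-details

## Sketch: an egress policy for DNS

The policies above only use ingress rules. As a hedged sketch of the egress side, the script below writes a policy that lets `testweb` pods initiate connections only on port 53 (DNS), to any destination. The file name and the policy name `dns-egress-for-testweb` are hypothetical, the script does not apply the policy, and egress support depends on the network plugin.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical file and policy names, chosen for this example only
cat > netpol-dns-egress-for-testweb.yaml <<'EOF'
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: dns-egress-for-testweb
spec:
  podSelector:
    matchLabels:
      app: testweb
  # Only Egress: this policy does not restrict inbound traffic
  policyTypes:
  - Egress
  egress:
  # No "to" field: any destination, but only on the DNS port
  - ports:
    - protocol: UDP
      port: 53
    - protocol: TCP
      port: 53
EOF

# To try it on a test cluster (not done by this script):
# kubectl apply -f netpol-dns-egress-for-testweb.yaml
```

Because filtering is flow-oriented, this policy should not prevent nginx from answering the inbound connections allowed by the ingress policies; it only limits connections started by the `testweb` pods. Setting `policyTypes` explicitly matters: when it is omitted, `Ingress` is always included, so a policy with egress rules but no ingress rules would also block inbound traffic (unless another policy allows it).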
.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## An important warning - Some network plugins only have partial support for network policies - For instance, Weave added support for egress rules [in version 2.4](https://github.com/weaveworks/weave/pull/3313) (released in July 2018) - But it only added support for ipBlock later, [in version 2.5](https://github.com/weaveworks/weave/pull/3367) (released in Nov 2018) - Unsupported features might be silently ignored (Making you believe that you are secure, when you're not) .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Network policies, pods, and services - Network policies apply to *pods* - A *service* can select multiple pods (And load balance traffic across them) - It is possible that we can connect to some pods, but not to others (Because of how network policies have been defined for these pods) - In that case, connections to the service will randomly pass or fail (Depending on whether the connection was sent to a pod that we have access to or not) .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Network policies and namespaces - A good strategy is to isolate a namespace, so that: - all the pods in the namespace can communicate together - other namespaces cannot access the pods - external access has to be enabled explicitly - Let's see what this would look like for the DockerCoins app! .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Network policies for DockerCoins - We are going to apply two policies - The first policy will prevent traffic from other namespaces - The second policy will allow traffic to the `webui` pods - That's all we need for that app!
.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Blocking traffic from other namespaces This policy selects all pods in the current namespace. It allows traffic only from pods in the current namespace. (An empty `podSelector` means "all pods.") ```yaml kind: NetworkPolicy apiVersion: networking.k8s.io/v1 metadata: name: deny-from-other-namespaces spec: podSelector: {} ingress: - from: - podSelector: {} ``` .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Allowing traffic to `webui` pods This policy selects all pods with label `app=webui`. It allows traffic from any source. (An empty `from` field means "all sources.") ```yaml kind: NetworkPolicy apiVersion: networking.k8s.io/v1 metadata: name: allow-webui spec: podSelector: matchLabels: app: webui ingress: - from: [] ``` .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Applying both network policies - Both network policies are declared in the file `k8s/netpol-dockercoins.yaml` .exercise[ - Apply the network policies: ```bash kubectl apply -f ~/container.training/k8s/netpol-dockercoins.yaml ``` - Check that we can still access the web UI from outside <br/> (and that the app is still working correctly!) - Check that we can't connect anymore to `rng` or `hasher` through their ClusterIP ] Note: using `kubectl proxy` or `kubectl port-forward` allows us to connect regardless of existing network policies. This allows us to debug and troubleshoot easily, without having to poke holes in our firewall. 
.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Cleaning up our network policies - The network policies that we have installed block most traffic to the pods in the default namespace (only the `webui` pods still accept connections from outside the namespace) - We should remove them, otherwise further exercises will fail! .exercise[ - Remove all network policies: ```bash kubectl delete networkpolicies --all ``` ] .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Protecting the control plane - Should we add network policies to block unauthorized access to the control plane? (etcd, API server, etc.) -- - At first, it seems like a good idea ... -- - But it *shouldn't* be necessary: - not all network plugins support network policies - the control plane is secured by other methods (mutual TLS, mostly) - the code running in our pods can reasonably expect to contact the API <br/> (and it can do so safely thanks to the API permission model) - If we block access to the control plane, we might disrupt legitimate code - ...Without necessarily improving security .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- ## Further resources - As always, the [Kubernetes documentation](https://kubernetes.io/docs/concepts/services-networking/network-policies/) is a good starting point - The API documentation has a lot of detail about the format of various objects: - [NetworkPolicy](https://kubernetes.io/docs/reference/generated/kubernetes-api/v1.12/#networkpolicy-v1-networking-k8s-io) - [NetworkPolicySpec](https://kubernetes.io/docs/reference/generated/kubernetes-api/v1.12/#networkpolicyspec-v1-networking-k8s-io) - [NetworkPolicyIngressRule](https://kubernetes.io/docs/reference/generated/kubernetes-api/v1.12/#networkpolicyingressrule-v1-networking-k8s-io) - etc.
- And two resources by [Ahmet Alp Balkan](https://ahmet.im/): - a [very good talk about network policies](https://www.youtube.com/watch?list=PLj6h78yzYM2P-3-xqvmWaZbbI1sW-ulZb&v=3gGpMmYeEO8) at KubeCon North America 2017 - a repository of [ready-to-use recipes](https://github.com/ahmetb/kubernetes-network-policy-recipes) for network policies ??? :EN:- Isolating workloads with Network Policies :FR:- Isolation réseau avec les *network policies* .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/netpol.md)] --- class: pic .interstitial[] --- name: toc-authentication-and-authorization class: title Authentication and authorization .nav[ [Previous section](#toc-network-policies) | [Back to table of contents](#toc-module-2) | [Next section](#toc-static-pods) ] .debug[(automatically generated title slide)] --- # Authentication and authorization - In this section, we will: - define authentication and authorization - explain how they are implemented in Kubernetes - talk about tokens, certificates, service accounts, RBAC ... - But first: why do we need all this? .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## The need for fine-grained security - The Kubernetes API should only be available for identified users - we don't want "guest access" (except in very rare scenarios) - we don't want strangers to use our compute resources, delete our apps ... 
- our keys and passwords should not be exposed to the public - Users will often have different access rights - cluster admin (similar to UNIX "root") can do everything - a developer might access specific resources, or a specific namespace - monitoring systems might have read-only access to *most* resources .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Example: custom HTTP load balancer - Let's imagine that we have a custom HTTP load balancer for multiple apps - Each app has its own *Deployment* resource - By default, the apps are "sleeping" and scaled to zero - When a request comes in, the corresponding app gets woken up - After some inactivity, the app is scaled down again - This HTTP load balancer needs API access (to scale up/down) - What if *a wild vulnerability appears*? .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Consequences of vulnerability - If the HTTP load balancer has the same API access as we do: *full cluster compromise (easy data leak, cryptojacking...)* - If the HTTP load balancer has `update` permissions on the Deployments: *defacement (easy), MITM / impersonation (medium to hard)* - If the HTTP load balancer only has permission to `scale` the Deployments: *denial-of-service* - All these outcomes are bad, but some are worse than others .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Definitions - Authentication = verifying the identity of a person On a UNIX system, we can authenticate with login+password, SSH keys ... - Authorization = determining what they are allowed to do On a UNIX system, this can include file permissions, sudoer entries ... - Sometimes abbreviated as "authn" and "authz" - In good modular systems, these things are decoupled (so we can e.g.
change a password or SSH key without having to reset access rights) .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Authentication in Kubernetes - When the API server receives a request, it tries to authenticate it (it examines headers, certificates... anything available) - Many authentication methods are available and can be used simultaneously (we will see them on the next slide) - It's the job of the authentication method to produce: - the user name - the user ID - a list of groups - The API server doesn't interpret these; that'll be the job of *authorizers* .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Authentication methods - TLS client certificates (that's what we've been doing with `kubectl` so far) - Bearer tokens (a secret token in the HTTP headers of the request) - [HTTP basic auth](https://en.wikipedia.org/wiki/Basic_access_authentication) (carrying user and password in an HTTP header; [deprecated since Kubernetes 1.19](https://github.com/kubernetes/kubernetes/pull/89069)) - Authentication proxy (sitting in front of the API and setting trusted headers) .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Anonymous requests - If any authentication method *rejects* a request, it's denied (`401 Unauthorized` HTTP code) - If a request is neither rejected nor accepted by anyone, it's anonymous - the user name is `system:anonymous` - the list of groups is `[system:unauthenticated]` - By default, the anonymous user can't do anything (that's what you get if you just `curl` the Kubernetes API) .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Authentication with TLS certificates - This is enabled in most Kubernetes 
deployments - The user name is derived from the `CN` in the client certificate - The groups are derived from the `O` fields in the client certificate - From the point of view of the Kubernetes API, users do not exist (i.e. they are not stored in etcd or anywhere else) - Users can be created (and added to groups) independently of the API - The Kubernetes API can be set up to use your custom CA to validate client certs .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Viewing our admin certificate - Let's inspect the certificate we've been using all this time! .exercise[ - This command will show the `CN` and `O` fields for our certificate: ```bash kubectl config view \ --raw \ -o json \ | jq -r '.users[0].user["client-certificate-data"]' \ | openssl base64 -d -A \ | openssl x509 -text \ | grep Subject: ``` ] Let's break down that command together! 😅 .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Breaking down the command - `kubectl config view` shows the Kubernetes user configuration - `--raw` includes certificate information (which shows as REDACTED otherwise) - `-o json` outputs the information in JSON format - `| jq ...` extracts the field with the user certificate (in base64) - `| openssl base64 -d -A` decodes the base64 format (now we have a PEM file) - `| openssl x509 -text` parses the certificate and outputs it as plain text - `| grep Subject:` shows us the line that interests us → We are user `kubernetes-admin`, in group `system:masters`. (We will see later how and why this gives us the permissions that we have.)
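
---

class: extra-details

## Sketch: choosing CN and O

To see how `CN` and `O` end up in a certificate subject, the sketch below generates a throwaway self-signed certificate and prints its subject. It assumes OpenSSL is installed; the user `jean.doe` and the group `devs` are hypothetical. The API server would reject this particular certificate, since it is not signed by the cluster CA: it only illustrates which fields Kubernetes would read.

```bash
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT

# Self-signed cert for hypothetical user "jean.doe" in hypothetical group "devs"
openssl req -x509 -newkey rsa:2048 -nodes \
  -keyout "$workdir/user.key" -out "$workdir/user.crt" \
  -days 1 -subj "/CN=jean.doe/O=devs" 2>/dev/null

# Kubernetes would map CN to the user name and each O to a group
subject=$(openssl x509 -in "$workdir/user.crt" -noout -subject)
echo "$subject"
```

With a certificate signed by the cluster CA (e.g. through the Kubernetes CSR API), this subject would map to user `jean.doe` in group `devs`; adding more `/O=...` components to `-subj` would add more groups.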
.debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## User certificates in practice - The Kubernetes API server does not support certificate revocation (see issue [#18982](https://github.com/kubernetes/kubernetes/issues/18982)) - As a result, we don't have an easy way to terminate someone's access (if their key is compromised, or they leave the organization) - Option 1: create a new CA and re-issue everyone's certificates <br/> → Maybe OK if we only have a few users; no way otherwise - Option 2: don't use groups; grant permissions to individual users <br/> → Inconvenient if we have many users and teams; error-prone - Option 3: issue short-lived certificates (e.g. 24 hours) and renew them often <br/> → This can be facilitated by e.g. Vault or by the Kubernetes CSR API .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Authentication with tokens - Tokens are passed as HTTP headers: `Authorization: Bearer and-then-here-comes-the-token` - Tokens can be validated through a number of different methods: - static tokens hard-coded in a file on the API server - [bootstrap tokens](https://kubernetes.io/docs/reference/access-authn-authz/bootstrap-tokens/) (special case to create a cluster or join nodes) - [OpenID Connect tokens](https://kubernetes.io/docs/reference/access-authn-authz/authentication/#openid-connect-tokens) (to delegate authentication to compatible OAuth2 providers) - service accounts (these deserve more details, coming right up!) .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Service accounts - A service account is a user that exists in the Kubernetes API (it is visible with e.g.
`kubectl get serviceaccounts`) - Service accounts can therefore be created / updated dynamically (they don't require hand-editing a file and restarting the API server) - A service account is associated with a set of secrets (the kind that you can view with `kubectl get secrets`) - Service accounts are generally used to grant permissions to applications, services... (as opposed to humans) .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Token authentication in practice - We are going to list existing service accounts - Then we will extract the token for a given service account - And we will use that token to authenticate with the API .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Listing service accounts .exercise[ - The resource name is `serviceaccount` or `sa` for short: ```bash kubectl get sa ``` ] There should be just one service account in the default namespace: `default`. .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Finding the secret .exercise[ - List the secrets for the `default` service account: ```bash kubectl get sa default -o yaml SECRET=$(kubectl get sa default -o json | jq -r .secrets[0].name) ``` ] It should be named `default-token-XXXXX`. 
.debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Extracting the token - The token is stored in the secret, wrapped with base64 encoding .exercise[ - View the secret: ```bash kubectl get secret $SECRET -o yaml ``` - Extract the token and decode it: ```bash TOKEN=$(kubectl get secret $SECRET -o json \ | jq -r .data.token | openssl base64 -d -A) ``` ] .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Using the token - Let's send a request to the API, without and with the token .exercise[ - Find the ClusterIP for the `kubernetes` service: ```bash kubectl get svc kubernetes API=$(kubectl get svc kubernetes -o json | jq -r .spec.clusterIP) ``` - Connect without the token: ```bash curl -k https://$API ``` - Connect with the token: ```bash curl -k -H "Authorization: Bearer $TOKEN" https://$API ``` ] .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Results - In both cases, we will get a "Forbidden" error - Without authentication, the user is `system:anonymous` - With authentication, it is shown as `system:serviceaccount:default:default` - The API "sees" us as a different user - But neither user has any rights, so we can't do nothin' - Let's change that! 
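How does the API server know that the token belongs to `system:serviceaccount:default:default`? Service account tokens are JSON Web Tokens (JWTs): three base64url-encoded segments separated by dots, the middle one carrying claims such as `sub`. The sketch below decodes that middle segment. To stay runnable without a cluster, it builds a fake, unsigned token with hypothetical claims in `FAKE_TOKEN` (our real `$TOKEN` is left alone); `b64url_encode` and `b64url_decode` are our own helper names.

```bash
# Encode stdin as base64url without padding (the JWT flavor of base64)
b64url_encode() {
  openssl base64 -A | tr '/+' '_-' | tr -d '='
}

# Decode one base64url segment: restore the standard alphabet and padding first
b64url_decode() {
  local s
  s=$(printf '%s' "$1" | tr '_-' '/+')
  case $(( ${#s} % 4 )) in
    2) s="${s}==" ;;
    3) s="${s}=" ;;
  esac
  printf '%s' "$s" | openssl base64 -d -A
}

# Fake, unsigned token with hypothetical claims (a real one is signed by the cluster)
HEADER=$(printf '%s' '{"alg":"none","typ":"JWT"}' | b64url_encode)
PAYLOAD=$(printf '%s' '{"iss":"kubernetes/serviceaccount","sub":"system:serviceaccount:default:default"}' | b64url_encode)
FAKE_TOKEN="$HEADER.$PAYLOAD.signature-goes-here"

# The claims live in the second dot-separated segment
CLAIMS=$(b64url_decode "$(printf '%s' "$FAKE_TOKEN" | cut -d. -f2)")
echo "$CLAIMS"
```

With the real token, `b64url_decode "$(printf '%s' "$TOKEN" | cut -d. -f2)"` should show its actual claims. Keep in mind that decoding is not verification: the API server also checks the token's signature, which this sketch ignores.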
.debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Authorization in Kubernetes - There are multiple ways to grant permissions in Kubernetes, called [authorizers](https://kubernetes.io/docs/reference/access-authn-authz/authorization/#authorization-modules): - [Node Authorization](https://kubernetes.io/docs/reference/access-authn-authz/node/) (used internally by kubelet; we can ignore it) - [Attribute-based access control](https://kubernetes.io/docs/reference/access-authn-authz/abac/) (powerful but complex and static; ignore it too) - [Webhook](https://kubernetes.io/docs/reference/access-authn-authz/webhook/) (each API request is submitted to an external service for approval) - [Role-based access control](https://kubernetes.io/docs/reference/access-authn-authz/rbac/) (associates permissions with users dynamically) - The one we want is the last one, generally abbreviated as RBAC .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Role-based access control - RBAC allows us to specify fine-grained permissions - Permissions are expressed as *rules* - A rule is a combination of: - [verbs](https://kubernetes.io/docs/reference/access-authn-authz/authorization/#determine-the-request-verb) like create, get, list, update, delete... - resources (as in "API resource," like pods, nodes, services...) - resource names (to specify e.g. one specific pod instead of all pods) - in some cases, [subresources](https://kubernetes.io/docs/reference/access-authn-authz/rbac/#referring-to-resources) (e.g.
logs are subresources of pods) .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## From rules to roles to rolebindings - A *role* is an API object containing a list of *rules* Example: role "external-load-balancer-configurator" can: - [list, get] resources [endpoints, services, pods] - [update] resources [services] - A *rolebinding* associates a role with a user Example: rolebinding "external-load-balancer-configurator": - associates user "external-load-balancer-configurator" - with role "external-load-balancer-configurator" - Yes, there can be users, roles, and rolebindings with the same name - It's a good idea for 1-1-1 bindings; not so much for 1-N ones .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Cluster-scope permissions - API resources Role and RoleBinding are for objects within a namespace - We can also define API resources ClusterRole and ClusterRoleBinding - These are a superset, allowing us to: - specify actions on cluster-wide objects (like nodes) - operate across all namespaces - We can create Role and RoleBinding resources within a namespace - ClusterRole and ClusterRoleBinding resources are global .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Pods and service accounts - A pod can be associated with a service account - by default, it is associated with the `default` service account - as we saw earlier, this service account has no permissions anyway - The associated token is exposed to the pod's filesystem (in `/var/run/secrets/kubernetes.io/serviceaccount/token`) - Standard Kubernetes tooling (like `kubectl`) will look for it there - So Kubernetes tools running in a pod will automatically use the service account 
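The identity these tools end up with follows a fixed pattern: the user name is `system:serviceaccount:<namespace>:<name>`, and service accounts are also placed in the groups `system:serviceaccounts` and `system:serviceaccounts:<namespace>`. Here is a tiny sketch of that mapping; `sa_user` and `sa_groups` are hypothetical helpers, not part of any tool. Their output is handy for `kubectl auth can-i --as`.

```bash
# Hypothetical helper: the user name the API server gives a service account
sa_user() {
  local namespace=$1 name=$2
  printf 'system:serviceaccount:%s:%s\n' "$namespace" "$name"
}

# Hypothetical helper: the groups a service account automatically belongs to
sa_groups() {
  local namespace=$1
  printf 'system:serviceaccounts system:serviceaccounts:%s\n' "$namespace"
}

sa_user default viewer
sa_groups default

# With cluster access, the output plugs into impersonation, for example:
#   kubectl auth can-i list pods --as "$(sa_user default viewer)"
```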
.debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## In practice - We are going to create a service account - We will use a default cluster role (`view`) - We will bind together this role and this service account - Then we will run a pod using that service account - In this pod, we will install `kubectl` and check our permissions .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Creating a service account - We will call the new service account `viewer` (note that nothing prevents us from calling it `view`, like the role) .exercise[ - Create the new service account: ```bash kubectl create serviceaccount viewer ``` - List service accounts now: ```bash kubectl get serviceaccounts ``` ] .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Binding a role to the service account - Binding a role = creating a *rolebinding* object - We will call that object `viewercanview` (but again, we could call it `view`) .exercise[ - Create the new role binding: ```bash kubectl create rolebinding viewercanview \ --clusterrole=view \ --serviceaccount=default:viewer ``` ] It's important to note a couple of details in these flags... .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Roles vs Cluster Roles - We used `--clusterrole=view` - What would have happened if we had used `--role=view`? 
- we would have bound the role `view` from the local namespace <br/>(instead of the cluster role `view`) - the command would have worked fine (no error) - but later, our API requests would have been denied - This is a deliberate design decision (we can reference roles that don't exist, and create/update them later) .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Users vs Service Accounts - We used `--serviceaccount=default:viewer` - What would have happened if we had used `--user=default:viewer`? - we would have bound the role to a user instead of a service account - again, the command would have worked fine (no error) - ...but our API requests would have been denied later - What about the `default:` prefix? - that's the namespace of the service account - yes, it could be inferred from context, but... `kubectl` requires it .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Testing - We will run an `alpine` pod and install `kubectl` there .exercise[ - Run a one-time pod: ```bash kubectl run eyepod --rm -ti --restart=Never \ --serviceaccount=viewer \ --image alpine ``` - Install `curl`, then use it to install `kubectl`: ```bash apk add --no-cache curl URLBASE=https://storage.googleapis.com/kubernetes-release/release KUBEVER=$(curl -s $URLBASE/stable.txt) curl -LO $URLBASE/$KUBEVER/bin/linux/amd64/kubectl chmod +x kubectl ``` ] .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Running `kubectl` in the pod - We'll try to use our `view` permissions, then to create an object .exercise[ - Check that we can, indeed, view things: ```bash ./kubectl get all ``` - But that we can't create things: ``` ./kubectl create deployment testrbac --image=nginx ``` - Exit the container with `exit` or `^D` <!-- ```key ^D``` --> ]
.debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- ## Testing directly with `kubectl` - We can also check for permission with `kubectl auth can-i`: ```bash kubectl auth can-i list nodes kubectl auth can-i create pods kubectl auth can-i get pod/name-of-pod kubectl auth can-i get /url-fragment-of-api-request/ kubectl auth can-i '*' services ``` - And we can check permissions on behalf of other users: ```bash kubectl auth can-i list nodes \ --as some-user kubectl auth can-i list nodes \ --as system:serviceaccount:<namespace>:<name-of-service-account> ``` .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Where does this `view` role come from? - Kubernetes defines a number of ClusterRoles intended to be bound to users - `cluster-admin` can do *everything* (think `root` on UNIX) - `admin` can do *almost everything* (except e.g. 
changing resource quotas and limits) - `edit` is similar to `admin`, but cannot view or edit permissions - `view` has read-only access to most resources, except permissions and secrets *In many situations, these roles will be all you need.* *You can also customize them!* .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Customizing the default roles - If you need to *add* permissions to these default roles (or others), <br/> you can do it through the [ClusterRole Aggregation](https://kubernetes.io/docs/reference/access-authn-authz/rbac/#aggregated-clusterroles) mechanism - This happens by creating a ClusterRole with the following labels: ```yaml metadata: labels: rbac.authorization.k8s.io/aggregate-to-admin: "true" rbac.authorization.k8s.io/aggregate-to-edit: "true" rbac.authorization.k8s.io/aggregate-to-view: "true" ``` - This ClusterRole's permissions will be added to `admin`, `edit`, and/or `view`, depending on which of these labels it carries - This is particularly useful when using CustomResourceDefinitions (since Kubernetes cannot guess which resources are sensitive and which ones aren't) .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Where do our permissions come from? - When interacting with the Kubernetes API, we are using a client certificate - We saw previously that this client certificate contained: `CN=kubernetes-admin` and `O=system:masters` - Let's look for these in existing ClusterRoleBindings: ```bash kubectl get clusterrolebindings -o yaml | grep -e kubernetes-admin -e system:masters ``` (`system:masters` should show up, but not `kubernetes-admin`.) - Where does this match come from?
.debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## The `system:masters` group - If we eyeball the output of `kubectl get clusterrolebindings -o yaml`, we'll find out! - It is in the `cluster-admin` binding: ```bash kubectl describe clusterrolebinding cluster-admin ``` - This binding associates `system:masters` with the cluster role `cluster-admin` - And the `cluster-admin` cluster role is, basically, `root`: ```bash kubectl describe clusterrole cluster-admin ``` .debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: extra-details ## Figuring out who can do what - For auditing purposes, sometimes we want to know who can perform an action - There are a few tools to help us with that - [kubectl-who-can](https://github.com/aquasecurity/kubectl-who-can) by Aqua Security - [Review Access (aka Rakkess)](https://github.com/corneliusweig/rakkess) - Both are available as standalone programs, or as plugins for `kubectl` (`kubectl` plugins can be installed and managed with `krew`) ??? :EN:- Authentication and authorization in Kubernetes :EN:- Authentication with tokens and certificates :EN:- Authorization with RBAC (Role-Based Access Control) :EN:- Restricting permissions with Service Accounts :EN:- Working with Roles, Cluster Roles, Role Bindings, etc. :FR:- Identification et droits d'accès dans Kubernetes :FR:- Mécanismes d'identification par jetons et certificats :FR:- Le modèle RBAC *(Role-Based Access Control)* :FR:- Restreindre les permissions grâce aux *Service Accounts* :FR:- Comprendre les *Roles*, *Cluster Roles*, *Role Bindings*, etc.
.debug[[k8s/authn-authz.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/authn-authz.md)] --- class: pic .interstitial[] --- name: toc-static-pods class: title Static pods .nav[ [Previous section](#toc-authentication-and-authorization) | [Back to table of contents](#toc-module-2) | [Next section](#toc-pod-security-policies) ] .debug[(automatically generated title slide)] --- # Static pods - Hosting the Kubernetes control plane on Kubernetes has advantages: - we can use Kubernetes' replication and scaling features for the control plane - we can leverage rolling updates to upgrade the control plane - However, there is a catch: - deploying on Kubernetes requires the API to be available - the API won't be available until the control plane is deployed - How can we get out of that chicken-and-egg problem? .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## A possible approach - Since each component of the control plane can be replicated... - We could set up the control plane outside of the cluster - Then, once the cluster is fully operational, create replicas running on the cluster - Finally, remove the replicas that are running outside of the cluster *What could possibly go wrong?* .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## Sawing off the branch you're sitting on - What if anything goes wrong? 
(During the setup or at a later point) - Worst case scenario, we might need to: - set up a new control plane (outside of the cluster) - restore a backup from the old control plane - move the new control plane to the cluster (again) - This doesn't sound like a great experience .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## Static pods to the rescue - Pods are started by kubelet (an agent running on every node) - To know which pods it should run, the kubelet queries the API server - The kubelet can also get a list of *static pods* from: - a directory containing one (or multiple) *manifests*, and/or - a URL (serving a *manifest*) - These "manifests" are basically YAML definitions (As produced by `kubectl get pod my-little-pod -o yaml`) .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## Static pods are dynamic - Kubelet will periodically reload the manifests - It will start/stop pods accordingly (i.e. it is not necessary to restart the kubelet after updating the manifests) - When connected to the Kubernetes API, the kubelet will create *mirror pods* - Mirror pods are copies of the static pods (so they can be seen with e.g. 
`kubectl get pods`) .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## Bootstrapping a cluster with static pods - We can run control plane components with these static pods - They can start without requiring access to the API server - Once they are up and running, the API becomes available - These pods are then visible through the API (We cannot upgrade them from the API, though) *This is how kubeadm has initialized our clusters.* .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## Static pods vs normal pods - The API only gives us read-only access to static pods - We can `kubectl delete` a static pod... ...But the kubelet will re-mirror it immediately - Static pods can be selected just like other pods (So they can receive service traffic) - A service can select a mixture of static and other pods .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## From static pods to normal pods - Once the control plane is up and running, it can be used to create normal pods - We can then set up a copy of the control plane in normal pods - Then the static pods can be removed - The scheduler and the controller manager use leader election (Only one is active at a time; removing an instance is seamless) - Each instance of the API server adds itself to the `kubernetes` service - Etcd will typically require more work! .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## From normal pods back to static pods - Alright, but what if the control plane is down and we need to fix it? - We restart it using static pods! 
- This can be done automatically with the [Pod Checkpointer] - The Pod Checkpointer automatically generates manifests of running pods - The manifests are used to restart these pods if API contact is lost (More details in the [Pod Checkpointer] documentation page) - This technique is used by [bootkube] [Pod Checkpointer]: https://github.com/kubernetes-incubator/bootkube/blob/master/cmd/checkpoint/README.md [bootkube]: https://github.com/kubernetes-incubator/bootkube .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## Where should the control plane run? *Is it better to run the control plane in static pods, or normal pods?* - If I'm a *user* of the cluster: I don't care, it makes no difference to me - What if I'm an *admin*, i.e. the person who installs, upgrades, repairs... the cluster? - If I'm using a managed Kubernetes cluster (AKS, EKS, GKE...) it's not my problem (I'm not the one setting up and managing the control plane) - If I already picked a tool (kubeadm, kops...) to set up my cluster, the tool decides for me - What if I haven't picked a tool yet, or if I'm installing from scratch? - static pods = easier to set up, easier to troubleshoot, less risk of outage - normal pods = easier to upgrade, easier to move (if nodes need to be shut down) .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- ## Static pods in action - On our clusters, the `staticPodPath` is `/etc/kubernetes/manifests` .exercise[ - Have a look at this directory: ```bash ls -l /etc/kubernetes/manifests ``` ] We should see YAML files corresponding to the pods of the control plane. 
.debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- class: static-pods-exercise ## Running a static pod - We are going to add a pod manifest to the directory, and kubelet will run it .exercise[ - Copy a manifest to the directory: ```bash sudo cp ~/container.training/k8s/just-a-pod.yaml /etc/kubernetes/manifests ``` - Check that it's running: ```bash kubectl get pods ``` ] The output should include a pod named `hello-node1`. .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- class: static-pods-exercise ## Remarks In the manifest, the pod was named `hello`. ```yaml apiVersion: v1 kind: Pod metadata: name: hello namespace: default spec: containers: - name: hello image: nginx ``` The `-node1` suffix was added automatically by kubelet. If we delete the pod (with `kubectl delete`), it will be recreated immediately. To delete the pod, we need to delete (or move) the manifest file. ??? 
:EN:- Static pods :FR:- Les *static pods* .debug[[k8s/staticpods.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/staticpods.md)] --- class: pic .interstitial[] --- name: toc-pod-security-policies class: title Pod Security Policies .nav[ [Previous section](#toc-static-pods) | [Back to table of contents](#toc-module-2) | [Next section](#toc-openid-connect) ] .debug[(automatically generated title slide)] --- # Pod Security Policies - By default, our pods and containers can do *everything* (including taking over the entire cluster) - We are going to show an example of a malicious pod - Then we will explain how to avoid this with PodSecurityPolicies - We will enable PodSecurityPolicies on our cluster - We will create a couple of policies (restricted and permissive) - Finally we will see how to use them to improve security on our cluster .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Setting up a namespace - For simplicity, let's work in a separate namespace - Let's create a new namespace called "green" .exercise[ - Create the "green" namespace: ```bash kubectl create namespace green ``` - Change to that namespace: ```bash kns green ``` ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Creating a basic Deployment - Just to check that everything works correctly, deploy NGINX .exercise[ - Create a Deployment using the official NGINX image: ```bash kubectl create deployment web --image=nginx ``` - Confirm that the Deployment, ReplicaSet, and Pod exist, and that the Pod is running: ```bash kubectl get all ``` ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## One example of malicious pods - We will now show an escalation technique in action 
- We will deploy a DaemonSet that adds our SSH key to the root account (on *each* node of the cluster) - The Pods of the DaemonSet will do so by mounting `/root` from the host .exercise[ - Check the file `k8s/hacktheplanet.yaml` with a text editor: ```bash vim ~/container.training/k8s/hacktheplanet.yaml ``` - If you would like, change the SSH key (by changing the GitHub user name) ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Deploying the malicious pods - Let's deploy our "exploit"! .exercise[ - Create the DaemonSet: ```bash kubectl create -f ~/container.training/k8s/hacktheplanet.yaml ``` - Check that the pods are running: ```bash kubectl get pods ``` - Confirm that the SSH key was added to the node's root account: ```bash sudo cat /root/.ssh/authorized_keys ``` ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Cleaning up - Before setting up our PodSecurityPolicies, clean up that namespace .exercise[ - Remove the DaemonSet: ```bash kubectl delete daemonset hacktheplanet ``` - Remove the Deployment: ```bash kubectl delete deployment web ``` ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Pod Security Policies in theory - To use PSPs, we need to activate their specific *admission controller* - That admission controller will intercept each pod creation attempt - It will look at: - *who/what* is creating the pod - which PodSecurityPolicies they can use - which PodSecurityPolicies can be used by the Pod's ServiceAccount - Then it will compare the Pod with each PodSecurityPolicy one by one - If a PodSecurityPolicy accepts all the parameters of the Pod, the Pod is created - Otherwise, the Pod creation is denied and it won't even show up in `kubectl get pods`
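The decision procedure above can be sketched as a toy bash model. This is only an illustration under heavy simplifying assumptions: real PSPs check many typed fields (volumes, capabilities, user IDs...), while here each policy is just a list of allowed "risky" keywords (`privileged`, `hostPath`, etc. are our own labels, and all function names are hypothetical). Also, the real admission controller roughly prefers policies that don't need to mutate the Pod, then orders them by name; this toy simply tries them in the order given.

```bash
# Toy model of PSP admission; real policies check typed fields, not keywords.
# Hypothetical "risky" features each policy allows.
policy_allows() {
  case $1 in
    privileged) echo "privileged hostPath hostNetwork hostPID" ;;
    *)          echo "" ;;   # restricted (or unknown): nothing risky allowed
  esac
}

# Succeed if the policy allows every feature the Pod requests
policy_accepts() {
  local policy=$1 feature allowed
  shift
  allowed=" $(policy_allows "$policy") "
  for feature in "$@"; do
    case $allowed in
      *" $feature "*) ;;       # this feature is allowed, keep checking
      *) return 1 ;;           # a single forbidden feature rejects the Pod
    esac
  done
  return 0
}

# admit "<usable policies>" <requested features...>
# The first argument stands for the policies usable by the requester
# or by the Pod's ServiceAccount (no usable policy means no Pod).
admit() {
  local usable=$1 policy
  shift
  for policy in $usable; do
    if policy_accepts "$policy" "$@"; then
      echo "admitted by $policy"
      return 0
    fi
  done
  echo "denied"
  return 1
}

# A Pod mounting a hostPath volume, like our hacktheplanet DaemonSet
admit "restricted" hostPath || true               # prints "denied"
admit "restricted privileged" hostPath            # prints "admitted by privileged"
```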
.debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Pod Security Policies fine print - With RBAC, using a PSP corresponds to the verb `use` on the PSP (that makes sense, right?) - If no PSP is defined, no Pod can be created (even by cluster admins) - Pods that are already running are *not* affected - If we create a Pod directly, it can use a PSP to which *we* have access - If the Pod is created by e.g. a ReplicaSet or DaemonSet, it's different: - the ReplicaSet / DaemonSet controllers don't have access to *our* policies - therefore, we need to grant the Pod's ServiceAccount access to the PSP .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Pod Security Policies in practice - We are going to enable the PodSecurityPolicy admission controller - At that point, we won't be able to create any more pods (!)
- Then we will create a couple of PodSecurityPolicies - ...And associated ClusterRoles (giving `use` access to the policies) - Then we will create RoleBindings to grant these roles to ServiceAccounts - We will verify that we can't run our "exploit" anymore .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Enabling Pod Security Policies - To enable Pod Security Policies, we need to enable their *admission plugin* - This is done by adding a flag to the API server - On clusters deployed with `kubeadm`, the control plane runs in static pods - These pods are defined in YAML files located in `/etc/kubernetes/manifests` - Kubelet watches this directory - Each time a file is added/removed there, kubelet creates/deletes the corresponding pod - Updating a file causes the pod to be deleted and recreated .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Updating the API server flags - Let's edit the manifest for the API server pod .exercise[ - Have a look at the static pods: ```bash ls -l /etc/kubernetes/manifests ``` - Edit the one corresponding to the API server: ```bash sudo vim /etc/kubernetes/manifests/kube-apiserver.yaml ``` <!-- ```wait apiVersion``` --> ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Adding the PSP admission plugin - There should already be a line with `--enable-admission-plugins=...` - Let's add `PodSecurityPolicy` on that line .exercise[ - Locate the line with `--enable-admission-plugins=` - Add `PodSecurityPolicy` It should read: `--enable-admission-plugins=NodeRestriction,PodSecurityPolicy` - Save, quit <!-- ```keys /--enable-admission-plugins=``` ```key ^J``` ```key $``` ```keys a,PodSecurityPolicy``` ```key Escape``` ```keys :wq``` ```key ^J``` --> ] 
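Instead of editing the manifest interactively, the same change can be scripted. This sketch assumes GNU `sed` (for `-i`) and a kubeadm-style manifest where the flag sits on its own line; `enable_psp` is a hypothetical helper name. It is tried on a scratch file here, not on the real manifest.

```bash
# Hypothetical helper: append PodSecurityPolicy to --enable-admission-plugins
# in a kube-apiserver manifest, unless it is already listed there.
# Assumes GNU sed (for -i) and the flag on a single line, as kubeadm writes it.
enable_psp() {
  local manifest=$1
  if grep -q -- '--enable-admission-plugins=.*PodSecurityPolicy' "$manifest"; then
    echo "PodSecurityPolicy already enabled in $manifest"
    return 0
  fi
  sed -i 's/\(--enable-admission-plugins=[^[:space:]]*\)/\1,PodSecurityPolicy/' "$manifest"
}

# Try it on a scratch file holding the relevant line, not on the real manifest
DEMO=$(mktemp)
printf '    - --enable-admission-plugins=NodeRestriction\n' > "$DEMO"
enable_psp "$DEMO"
cat "$DEMO"
```

On the control plane node, the equivalent would be running that `sed` command with `sudo` against `/etc/kubernetes/manifests/kube-apiserver.yaml`. If you keep a backup copy, store it outside `/etc/kubernetes/manifests`, since kubelet may try to run any manifest it finds there.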
.debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Waiting for the API server to restart - The kubelet detects that the file was modified - It kills the API server pod, and starts a new one - During that time, the API server is unavailable .exercise[ - Wait until the API server is available again ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Check that the admission plugin is active - Normally, we can't create any Pod at this point .exercise[ - Try to create a Pod directly: ```bash kubectl run testpsp1 --image=nginx --restart=Never ``` <!-- ```wait forbidden: no providers available``` --> - Try to create a Deployment: ```bash kubectl create deployment testpsp2 --image=nginx ``` - Look at existing resources: ```bash kubectl get all ``` ] We can get hints at what's happening by looking at the ReplicaSet and Events. 
.debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Introducing our Pod Security Policies - We will create two policies: - privileged (allows everything) - restricted (blocks some unsafe mechanisms) - For each policy, we also need an associated ClusterRole granting *use* .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Creating our Pod Security Policies - We have a couple of files, each defining a PSP and associated ClusterRole: - k8s/psp-privileged.yaml: policy `privileged`, role `psp:privileged` - k8s/psp-restricted.yaml: policy `restricted`, role `psp:restricted` .exercise[ - Create both policies and their associated ClusterRoles: ```bash kubectl create -f ~/container.training/k8s/psp-restricted.yaml kubectl create -f ~/container.training/k8s/psp-privileged.yaml ``` ] - The privileged policy comes from [the Kubernetes documentation](https://kubernetes.io/docs/concepts/policy/pod-security-policy/#example-policies) - The restricted policy is inspired by that same documentation page .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Check that we can create Pods again - We haven't bound the policy to any user yet - But `cluster-admin` can implicitly `use` all policies .exercise[ - Check that we can now create a Pod directly: ```bash kubectl run testpsp3 --image=nginx --restart=Never ``` - Create a Deployment as well: ```bash kubectl create deployment testpsp4 --image=nginx ``` - Confirm that the Deployment is *not* creating any Pods: ```bash kubectl get all ``` ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## What's going on? 
- We can create Pods directly (thanks to our root-like permissions) - The Pods corresponding to a Deployment are created by the ReplicaSet controller - The ReplicaSet controller does *not* have root-like permissions - We need to either: - grant permissions to the ReplicaSet controller *or* - grant permissions to our Pods' ServiceAccount - The first option would allow *anyone* to create any pod (simply by creating a Deployment or ReplicaSet) - The second option will allow us to scope the permissions better .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Binding the restricted policy - Let's bind the role `psp:restricted` to ServiceAccount `green:default` (aka the default ServiceAccount in the green Namespace) - This will allow Pod creation in the green Namespace (because these Pods will be using that ServiceAccount automatically) .exercise[ - Create the following RoleBinding: ```bash kubectl create rolebinding psp:restricted \ --clusterrole=psp:restricted \ --serviceaccount=green:default ``` ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Trying it out - The Deployments that we created earlier will *eventually* recover (the ReplicaSet controller retries Pod creation periodically) - If we create a new Deployment now, it should work immediately .exercise[ - Create a simple Deployment: ```bash kubectl create deployment testpsp5 --image=nginx ``` - Look at the Pods that have been created: ```bash kubectl get all ``` ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Trying to hack the cluster - Let's create the same DaemonSet we used earlier .exercise[ - Create a hostile DaemonSet: ```bash kubectl create -f ~/container.training/k8s/hacktheplanet.yaml ``` - Look at the state of the namespace: ```bash kubectl get all
``` ] .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- class: extra-details ## What's in our restricted policy? - The restricted PSP is similar to the one provided in the docs, but: - it allows containers to run as root - it doesn't drop capabilities - Many containers run as root by default, and would require additional tweaks - Many containers use e.g. `chown`, which requires a specific capability (that's the case for the NGINX official image, for instance) - We still block: hostPath, privileged containers, and much more! .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- class: extra-details ## The case of static pods - If we list the pods in the `kube-system` namespace, `kube-apiserver` is missing - However, the API server is obviously running (otherwise, `kubectl get pods --namespace=kube-system` wouldn't work) - The API server Pod is created directly by kubelet (without going through the PSP admission plugin) - Then, kubelet creates a "mirror pod" representing that Pod in etcd - That "mirror pod" creation goes through the PSP admission plugin - And it gets blocked! - This can be fixed by binding `psp:privileged` to group `system:nodes` .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## .warning[Before moving on...] - Our cluster is currently broken (we can't create pods in namespaces kube-system, default, ...) 
- We need to either: - disable the PSP admission plugin - allow relevant users and groups to use PSPs - For instance, we could: - bind `psp:restricted` to the group `system:authenticated` - bind `psp:privileged` to the ServiceAccount `kube-system:default` .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- ## Fixing the cluster - Let's disable the PSP admission plugin .exercise[ - Edit the Kubernetes API server static pod manifest - Remove the PSP admission plugin - This can be done with this one-liner: ```bash sudo sed -i s/,PodSecurityPolicy// /etc/kubernetes/manifests/kube-apiserver.yaml ``` ] ??? :EN:- Preventing privilege escalation with Pod Security Policies :FR:- Limiter les droits des conteneurs avec les *Pod Security Policies* .debug[[k8s/podsecuritypolicy.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/podsecuritypolicy.md)] --- class: pic .interstitial[] --- name: toc-openid-connect class: title OpenID Connect .nav[ [Previous section](#toc-pod-security-policies) | [Back to table of contents](#toc-module-2) | [Next section](#toc-the-csr-api) ] .debug[(automatically generated title slide)] --- # OpenID Connect - The Kubernetes API server can perform authentication with OpenID Connect - This requires an *OpenID provider* (external authorization server using the OAuth 2.0 protocol) - We can use a third-party provider (e.g. Google) or run our own (e.g. Dex) - We are going to give an overview of the protocol - We will show it in action (in a simplified scenario) .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Workflow overview - We want to access our resources (a Kubernetes cluster) - We authenticate with the OpenID provider - we can do this directly (e.g.
by going to https://accounts.google.com) - or maybe a kubectl plugin can open a browser page on our behalf - After authenticating us, the OpenID provider gives us: - an *id token* (a short-lived signed JSON Web Token, see next slide) - a *refresh token* (to renew the *id token* when needed) - We can now issue requests to the Kubernetes API with the *id token* - The API server will verify that token's content to authenticate us .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## JSON Web Tokens - A JSON Web Token (JWT) has three parts: - a header specifying algorithms and token type - a payload (indicating who issued the token, for whom, which purposes...) - a signature generated by the issuer (the issuer = the OpenID provider) - Anyone can verify a JWT without contacting the issuer (except to obtain the issuer's public key) - Pro tip: we can inspect a JWT with https://jwt.io/ .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## How the Kubernetes API uses JWT - Server side - enable OIDC authentication - indicate which issuer (provider) should be allowed - indicate which audience (or "client id") should be allowed - optionally, map or prefix user and group names - Client side - obtain JWT as described earlier - pass JWT as authentication token - renew JWT when needed (using the refresh token) .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Demo time! 
- We will use [Google Accounts](https://accounts.google.com) as our OpenID provider - We will use the [Google OAuth Playground](https://developers.google.com/oauthplayground) as the "audience" or "client id" - We will obtain a JWT through Google Accounts and the OAuth Playground - We will enable OIDC in the Kubernetes API server - We will use the JWT to authenticate .footnote[If you can't or won't use a Google account, you can try to adapt this to another provider.] .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Checking the API server logs - The API server logs will be particularly useful in this section (they will indicate e.g. why a specific token is rejected) - Let's keep an eye on the API server output! .exercise[ - Tail the logs of the API server: ```bash kubectl logs kube-apiserver-node1 --follow --namespace=kube-system ``` ] .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Authenticate with the OpenID provider - We will use the Google OAuth Playground for convenience - In a real scenario, we would need our own OAuth client instead of the playground (even if we were still using Google as the OpenID provider) .exercise[ - Open the Google OAuth Playground: ``` https://developers.google.com/oauthplayground/ ``` - Enter our own custom scope in the text field: ``` https://www.googleapis.com/auth/userinfo.email ``` - Click on "Authorize APIs" and allow the playground to access our email address ] .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Obtain our JSON Web Token - The previous step gave us an "authorization code" - We will use it to obtain tokens .exercise[ - Click on "Exchange authorization code for tokens" ] - The JWT is the very long `id_token` that shows up on the right hand 
side (it is made of base64url-encoded JSON segments separated by dots, so it should start with `eyJ`) .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Using our JSON Web Token - We need to add a new user entry to kubeconfig for our token (if we just add the token or use `kubectl --token`, our certificate will still be used) .exercise[ - Create a new authentication section in kubeconfig: ```bash kubectl config set-credentials myjwt --token=eyJ... ``` - Try to use it: ```bash kubectl --user=myjwt get nodes ``` ] We should get an `Unauthorized` response, since we haven't enabled OpenID Connect in the API server yet. We should also see `invalid bearer token` in the API server log output. .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Enabling OpenID Connect - We need to add a few flags to the API server configuration - These two are mandatory: `--oidc-issuer-url` → URL of the OpenID provider `--oidc-client-id` → app requesting the authentication <br/>(in our case, that's the ID for the Google OAuth Playground) - This one is optional: `--oidc-username-claim` → which field should be used as user name <br/>(we will use the user's email address instead of an opaque ID) - See the [API server documentation](https://kubernetes.io/docs/reference/access-authn-authz/authentication/#configuring-the-api-server) for more details about all available flags .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Updating the API server configuration - The instructions below will work for clusters deployed with kubeadm (or where the control plane is deployed in static pods) - If your cluster is deployed differently, you will need to adapt them .exercise[ - Edit `/etc/kubernetes/manifests/kube-apiserver.yaml` - Add the following lines to
the list of command-line flags: ```yaml - --oidc-issuer-url=https://accounts.google.com - --oidc-client-id=407408718192.apps.googleusercontent.com - --oidc-username-claim=email ``` ] .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Restarting the API server - The kubelet monitors the files in `/etc/kubernetes/manifests` - When we save the pod manifest, kubelet will restart the corresponding pod (using the updated command line flags) .exercise[ - After making the changes described on the previous slide, save the file - Issue a simple command (like `kubectl version`) until the API server is back up (it might take between a few seconds and one minute for the API server to restart) - Restart the `kubectl logs` command to view the logs of the API server ] .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Using our JSON Web Token - Now that the API server is set up to recognize our token, try again! .exercise[ - Try an API command with our token: ```bash kubectl --user=myjwt get nodes kubectl --user=myjwt get pods ``` ] We should see a message like: ``` Error from server (Forbidden): nodes is forbidden: User "jean.doe@gmail.com" cannot list resource "nodes" in API group "" at the cluster scope ``` → We were successfully *authenticated*, but not *authorized*. 
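The user name in that error message comes from the token's `email` claim (selected with `--oidc-username-claim`). To see which claims the API server is looking at, the token payload can be decoded locally. This is a minimal sketch assuming GNU coreutils; `jwt_payload` is a hypothetical helper, and it does *not* check the signature (the API server does that).

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the JSON payload (second segment) of a JWT.
# It does NOT verify the signature; it only decodes the claims.
jwt_payload() {
  local payload
  # JWT segments use base64url: map "_" and "-" back to "/" and "+".
  payload="$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')"
  # base64url also drops the "=" padding; restore it for base64 -d.
  case $(( ${#payload} % 4 )) in
    2) payload="${payload}==" ;;
    3) payload="${payload}=" ;;
  esac
  printf '%s' "$payload" | base64 -d
}
```

Running `jwt_payload eyJ...` on the `id_token` from the playground should show an `iss` matching `--oidc-issuer-url`, an `aud` matching `--oidc-client-id`, and the `email` used as our user name.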
.debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## Authorizing our user - As an extra step, let's grant read access to our user - We will use the pre-defined ClusterRole `view` .exercise[ - Create a ClusterRoleBinding allowing us to view resources: ```bash kubectl create clusterrolebinding i-can-view \ --user=`jean.doe@gmail.com` --clusterrole=view ``` (make sure to put *your* Google email address there) - Confirm that we can now list pods with our token: ```bash kubectl --user=myjwt get pods ``` ] .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- ## From demo to production .warning[This was a very simplified demo! In a real deployment...] - We wouldn't use the Google OAuth Playground - We *probably* wouldn't even use Google at all (it doesn't seem to provide a way to include groups!) - Some popular alternatives: - [Dex](https://github.com/dexidp/dex), [Keycloak](https://www.keycloak.org/) (self-hosted) - [Okta](https://developer.okta.com/docs/how-to/creating-token-with-groups-claim/#step-five-decode-the-jwt-to-verify) (SaaS) - We would use a helper (like the [kubelogin](https://github.com/int128/kubelogin) plugin) to automatically obtain tokens .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- class: extra-details ## Service Account tokens - The tokens used by Service Accounts are JWT tokens as well - They are signed and verified using a special service account key pair .exercise[ - Extract the token of a service account in the current namespace: ```bash kubectl get secrets -o jsonpath={..token} | base64 -d ``` - Copy-paste the token to a verification service like https://jwt.io - Notice that it says "Invalid Signature" ] 
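jwt.io reports an invalid signature because it does not have the key that signed the token. Assuming the Service Account key pair is RSA (so tokens are signed with RS256, i.e. an RSA signature over the SHA-256 digest of `header.payload`), the same check can be reproduced offline with OpenSSL once we have the public key. `b64url_decode` and `jwt_verify_rs256` are hypothetical helpers; GNU coreutils and the `openssl` CLI are assumed.

```bash
#!/usr/bin/env bash
# Hypothetical helpers: check the RS256 signature of a JWT with OpenSSL.

# Decode one base64url segment (no "=" padding, "-" and "_" alphabet).
b64url_decode() {
  local s
  s="$(printf '%s' "$1" | tr '_-' '/+')"
  case $(( ${#s} % 4 )) in
    2) s="${s}==" ;;
    3) s="${s}=" ;;
  esac
  printf '%s' "$s" | base64 -d
}

# Usage: jwt_verify_rs256 TOKEN PUBLIC_KEY_PEM
# Returns 0 if the signature matches, 1 otherwise.
jwt_verify_rs256() {
  local token="$1" pubkey="$2" sigfile rc
  sigfile="$(mktemp)"
  # Third segment = raw RSA signature bytes.
  b64url_decode "$(printf '%s' "$token" | cut -d. -f3)" > "$sigfile"
  # Signed data = "header.payload", exactly as it appears in the token.
  if printf '%s' "$token" | cut -d. -f1,2 | tr -d '\n' \
      | openssl dgst -sha256 -verify "$pubkey" -signature "$sigfile" > /dev/null 2>&1; then
    rc=0
  else
    rc=1
  fi
  rm -f "$sigfile"
  return "$rc"
}
```

For example, after `sudo cat /etc/kubernetes/pki/sa.pub > sa.pub`, running `jwt_verify_rs256 "$TOKEN" sa.pub && echo verified` (with `$TOKEN` holding the decoded token extracted above) should succeed.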
.debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- class: extra-details ## Verifying Service Account tokens - JSON Web Tokens embed the URL of the "issuer" (=OpenID provider) - The issuer provides its public key through a well-known discovery endpoint (similar to https://accounts.google.com/.well-known/openid-configuration) - There is no such endpoint for the Service Account key pair - But we can provide the public key ourselves for verification .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- class: extra-details ## Verifying a Service Account token - On clusters provisioned with kubeadm, the Service Account key pair is: `/etc/kubernetes/pki/sa.key` (used by the controller manager to generate tokens) `/etc/kubernetes/pki/sa.pub` (used by the API server to validate the same tokens) .exercise[ - Display the public key used to sign Service Account tokens: ```bash sudo cat /etc/kubernetes/pki/sa.pub ``` - Copy-paste the key in the "verify signature" area on https://jwt.io - It should now say "Signature Verified" ] ??? 
:EN:- Authenticating with OIDC :FR:- S'identifier avec OIDC .debug[[k8s/openid-connect.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/openid-connect.md)] --- class: pic .interstitial[] --- name: toc-the-csr-api class: title The CSR API .nav[ [Previous section](#toc-openid-connect) | [Back to table of contents](#toc-module-2) | [Next section](#toc-resource-limits) ] .debug[(automatically generated title slide)] --- # The CSR API - The Kubernetes API exposes CSR resources - We can use these resources to issue TLS certificates - First, we will go through a quick reminder about TLS certificates - Then, we will see how to obtain a certificate for a user - We will use that certificate to authenticate with the cluster - Finally, we will grant some privileges to that user .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Reminder about TLS - TLS (Transport Layer Security) is a protocol providing: - encryption (to prevent eavesdropping) - authentication (using public key cryptography) - When we access an https:// URL, the server authenticates itself (it proves its identity to us; as if it were "showing its ID") - But we can also have mutual TLS authentication (mTLS) (client proves its identity to server; server proves its identity to client) .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Authentication with certificates - To authenticate, someone (client or server) needs: - a *private key* (that remains known only to them) - a *public key* (that they can distribute) - a *certificate* (associating the public key with an identity) - A message encrypted with the private key can only be decrypted with the public key (and vice versa) - If I use someone's public key to encrypt/decrypt their messages, <br/> I can be certain that I am talking to them / they are talking to me - The 
certificate proves that I have the correct public key for them .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Certificate generation workflow This is what I do if I want to obtain a certificate. 1. Create public and private keys. 2. Create a Certificate Signing Request (CSR). (The CSR contains the identity that I claim and a public key.) 3. Send that CSR to the Certificate Authority (CA). 4. The CA verifies that I can claim the identity in the CSR. 5. The CA generates my certificate and gives it to me. The CA (or anyone else) never needs to know my private key. .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## The CSR API - The Kubernetes API has a CertificateSigningRequest resource type (we can list them with e.g. `kubectl get csr`) - We can create a CSR object (= upload a CSR to the Kubernetes API) - Then, using the Kubernetes API, we can approve/deny the request - If we approve the request, the Kubernetes API generates a certificate - The certificate gets attached to the CSR object and can be retrieved .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Using the CSR API - We will show how to use the CSR API to obtain user certificates - This will be a rather complex demo - ... 
And yet, we will take a few shortcuts to simplify it (but it will illustrate the general idea) - The demo also won't be automated (we would have to write extra code to make it fully functional) .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## General idea - We will create a Namespace named "users" - Each user will get a ServiceAccount in that Namespace - That ServiceAccount will give read/write access to *one* CSR object - Users will use that ServiceAccount's token to submit a CSR - We will approve the CSR (or not) - Users can then retrieve their certificate from their CSR object - ...And use that certificate for subsequent interactions .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Resource naming For a user named `jean.doe`, we will have: - ServiceAccount `jean.doe` in Namespace `users` - CertificateSigningRequest `user=jean.doe` - ClusterRole `user=jean.doe` giving read/write access to that CSR - ClusterRoleBinding `user=jean.doe` binding ClusterRole and ServiceAccount .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- class: extra-details ## About resource name constraints - Most Kubernetes identifiers and names are fairly restricted - They are generally DNS-1123 *labels* or *subdomains* (from [RFC 1123](https://tools.ietf.org/html/rfc1123)) - A label consists of lowercase letters, numbers, and dashes (at most 63 characters); it can't start or end with a dash - A subdomain is one or multiple labels separated by dots - Some resources have more relaxed constraints, and can be "path segment names" (uppercase letters are allowed, as well as some characters like `#:?!,_`) - This includes RBAC objects (like Roles, RoleBindings...)
and CSRs - See the [Identifiers and Names](https://github.com/kubernetes/community/blob/master/contributors/design-proposals/architecture/identifiers.md) design document and the [Object Names and IDs](https://kubernetes.io/docs/concepts/overview/working-with-objects/names/#path-segment-names) documentation page for more details .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Creating the user's resources .warning[If you want to use a name other than `jean.doe`, update the YAML file!] .exercise[ - Create the global namespace for all users: ```bash kubectl create namespace users ``` - Create the ServiceAccount, ClusterRole, ClusterRoleBinding for `jean.doe`: ```bash kubectl apply -f ~/container.training/k8s/user=jean.doe.yaml ``` ] .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Extracting the user's token - Let's obtain the user's token and give it to them (the token will be their password) .exercise[ - List the user's secrets: ```bash kubectl --namespace=users describe serviceaccount jean.doe ``` - Show the user's token: ```bash kubectl --namespace=users describe secret `jean.doe-token-xxxxx` ``` ] .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Configure `kubectl` to use the token - Let's create a new context that will use that token to access the API .exercise[ - Add a new identity to our kubeconfig file: ```bash kubectl config set-credentials token:jean.doe --token=... ``` - Add a new context using that identity: ```bash kubectl config set-context jean.doe --user=token:jean.doe --cluster=`kubernetes` ``` (Make sure to adapt the cluster name if yours is different!)
- Use that context: ```bash kubectl config use-context jean.doe ``` ] .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Access the API with the token - Let's check that our access rights are set properly .exercise[ - Try to access any resource: ```bash kubectl get pods ``` (This should tell us "Forbidden") - Try to access "our" CertificateSigningRequest: ```bash kubectl get csr user=jean.doe ``` (This should tell us "NotFound") ] .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Create a key and a CSR - There are many tools to generate TLS keys and CSRs - Let's use OpenSSL; it's not the best one, but it's installed everywhere (many people prefer cfssl, easyrsa, or other tools; that's fine too!) .exercise[ - Generate the key and certificate signing request: ```bash openssl req -newkey rsa:2048 -nodes -keyout key.pem \ -new -subj /CN=jean.doe/O=devs/ -out csr.pem ``` ] The command above generates: - a 2048-bit RSA key, without encryption, stored in key.pem - a CSR for the name `jean.doe` in group `devs` .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Inside the Kubernetes CSR object - The Kubernetes CSR object is a thin wrapper around the CSR PEM file - The PEM file needs to be encoded to base64 on a single line (we will use `base64 -w0` for that purpose) - The Kubernetes CSR object also needs to list the right "usages" (these are flags indicating how the certificate can be used) .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Sending the CSR to Kubernetes .exercise[ - Generate and create the CSR resource: ```bash kubectl apply -f - <<EOF apiVersion: certificates.k8s.io/v1beta1 kind: CertificateSigningRequest metadata: name: user=jean.doe spec: request: 
$(base64 -w0 < csr.pem) usages: - digital signature - key encipherment - client auth EOF ``` ] .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Adjusting certificate expiration - By default, the CSR API generates certificates valid for 1 year - We want to generate short-lived certificates, so we will lower that to 1 hour - For now, this is configured [through an experimental controller manager flag](https://github.com/kubernetes/kubernetes/issues/67324) .exercise[ - Edit the static pod definition for the controller manager: ```bash sudo vim /etc/kubernetes/manifests/kube-controller-manager.yaml ``` - In the list of flags, add the following line: ```yaml - --experimental-cluster-signing-duration=1h ``` ] .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Verifying and approving the CSR - Let's inspect the CSR, and if it is valid, approve it .exercise[ - Switch back to `cluster-admin`: ```bash kctx - ``` - Inspect the CSR: ```bash kubectl describe csr user=jean.doe ``` - Approve it: ```bash kubectl certificate approve user=jean.doe ``` ] .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Obtaining the certificate .exercise[ - Switch back to the user's identity: ```bash kctx - ``` - Retrieve the updated CSR object and extract the certificate: ```bash kubectl get csr user=jean.doe \ -o jsonpath={.status.certificate} \ | base64 -d > cert.pem ``` - Inspect the certificate: ```bash openssl x509 -in cert.pem -text -noout ``` ] .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Using the certificate .exercise[ - Add the key and certificate to kubeconfig: ```bash kubectl config set-credentials cert:jean.doe --embed-certs \ --client-certificate=cert.pem --client-key=key.pem
``` - Update the user's context to use the key and cert to authenticate: ```bash kubectl config set-context jean.doe --user cert:jean.doe ``` - Confirm that we are seen as `jean.doe` (but don't have permissions): ```bash kubectl get pods ``` ] .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## What's missing? We have just shown, step by step, a method to issue short-lived certificates for users. To be usable in real environments, we would need to add: - a kubectl helper to automatically generate the CSR and obtain the cert (and transparently renew the cert when needed) - a Kubernetes controller to automatically validate and approve CSRs (checking that the subject and groups are valid) - a way for the users to know the groups to add to their CSR (e.g.: annotations on their ServiceAccount + read access to the ServiceAccount) .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- ## Is this realistic? - Larger organizations typically integrate with their own directory - The general principle, however, is the same: - users have long-term credentials (password, token, ...) - they use these credentials to obtain other, short-lived credentials - This provides enhanced security: - the long-term credentials can use long passphrases, 2FA, HSM... - the short-term credentials are more convenient to use - we get strong security *and* convenience - Systems like Vault also have certificate issuance mechanisms ??? 
:EN:- Generating user certificates with the CSR API :FR:- Génération de certificats utilisateur avec la CSR API .debug[[k8s/csr-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/csr-api.md)] --- class: pic .interstitial[] --- name: toc-resource-limits class: title Resource Limits .nav[ [Previous section](#toc-the-csr-api) | [Back to table of contents](#toc-module-3) | [Next section](#toc-defining-min-max-and-default-resources) ] .debug[(automatically generated title slide)] --- # Resource Limits - We can attach resource indications to our pods (or rather: to the *containers* in our pods) - We can specify *limits* and/or *requests* - We can specify quantities of CPU and/or memory .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## CPU vs memory - CPU is a *compressible resource* (it can be preempted immediately without adverse effect) - Memory is an *incompressible resource* (it needs to be swapped out to be reclaimed; and this is costly) - As a result, exceeding limits will have different consequences for CPU and memory .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Exceeding CPU limits - CPU can be reclaimed instantaneously (in fact, it is preempted hundreds of times per second, at each context switch) - If a container uses too much CPU, it can be throttled (it will be scheduled less often) - The processes in that container will run slower (or rather: they will not run faster) .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Exceeding memory limits - Memory needs to be swapped out before being reclaimed - "Swapping" means writing memory pages to disk, which is very slow - On a classic system, a process that swaps can get 1000x slower (because 
disk I/O is 1000x slower than memory I/O) - Exceeding the memory limit (even by a small amount) can reduce performance *a lot* - Kubernetes *does not support swap* (more on that later!) - Exceeding the memory limit will cause the container to be killed .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Limits vs requests - Limits are "hard limits" (they can't be exceeded) - a container exceeding its memory limit is killed - a container exceeding its CPU limit is throttled - Requests are used for scheduling purposes - a container using *less* than what it requested will never be killed or throttled - the scheduler uses the requested sizes to determine placement - the resources requested by all pods on a node will never exceed the node size .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Pod quality of service Each pod is assigned a QoS class (visible in `status.qosClass`). 
- If limits = requests: - as long as the container uses less than the limit, it won't be affected - if all containers in a pod have *(limits=requests)* for both CPU and memory, QoS is considered "Guaranteed" - If requests < limits: - as long as the container uses less than the request, it won't be affected - otherwise, it might be killed/evicted if the node gets overloaded - if at least one container has *(requests<limits)*, QoS is considered "Burstable" - If no container in a pod has a request or a limit, QoS is considered "BestEffort" .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Quality of service impact - When a node is overloaded, BestEffort pods are killed first - Then, Burstable pods that exceed their requests - Burstable and Guaranteed pods below their requests are never killed (except if their node fails) - If we only use Guaranteed pods, no pod should ever be killed (as long as they stay within their limits) (Pod QoS is also explained in [this page](https://kubernetes.io/docs/tasks/configure-pod-container/quality-service-pod/) of the Kubernetes documentation and in [this blog post](https://medium.com/google-cloud/quality-of-service-class-qos-in-kubernetes-bb76a89eb2c6).) .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Where is my swap?
- The semantics of memory and swap limits on Linux cgroups are complex - In particular, it's not possible to disable swap for a cgroup (the closest option is to [reduce "swappiness"](https://unix.stackexchange.com/questions/77939/turning-off-swapping-for-only-one-process-with-cgroups)) - The architects of Kubernetes wanted to ensure that Guaranteed pods never swap - The only solution was to disable swap entirely .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Alternative point of view - Swap enables paging¹ of anonymous² memory - Even when swap is disabled, Linux will still page memory for: - executables, libraries - mapped files - Disabling swap *will reduce performance and available resources* - For a good time, read [kubernetes/kubernetes#53533](https://github.com/kubernetes/kubernetes/issues/53533) - Also read this [excellent blog post about swap](https://jvns.ca/blog/2017/02/17/mystery-swap/) ¹Paging: reading/writing memory pages from/to disk to reclaim physical memory ²Anonymous memory: memory that is not backed by files or blocks .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Enabling swap anyway - If you don't care that pods are swapping, you can enable swap - You will need to add the flag `--fail-swap-on=false` to kubelet (otherwise, it won't start!) 
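Before adding that flag, it can help to check whether swap is actually active on the node. The sketch below assumes a Linux node, where `/proc/swaps` contains a header line followed by one line per active swap area; to our understanding, kubelet performs a similar check on that file. The `swap_active` helper is hypothetical and not part of any Kubernetes tool.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed (exit status 0) if the given swaps file
# (default: /proc/swaps) lists at least one active swap area.
# The first line of /proc/swaps is always a header, so any further
# non-empty line means that swap is on.
swap_active() {
  local swaps_file=${1:-/proc/swaps}
  awk 'NR > 1 && NF > 0 { n++ } END { exit !(n > 0) }' "$swaps_file"
}

if swap_active; then
  echo "swap is on: kubelet will refuse to start unless --fail-swap-on=false is set"
  echo "(alternatively, disable swap with: swapoff -a)"
else
  echo "swap is off"
fi
```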
.debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Specifying resources - Resource requests and limits are expressed at the *container* level - CPU is expressed in "virtual CPUs" (corresponding to the virtual CPUs offered by some cloud providers) - CPU can be expressed with a decimal value, or even a "milli" suffix (so 100m = 0.1) - Memory is expressed in bytes - Memory can be expressed with k, M, G, T, Ki, Mi, Gi, Ti suffixes (corresponding to 10^3, 10^6, 10^9, 10^12, 2^10, 2^20, 2^30, 2^40) .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Specifying resources in practice This is what the spec of a Pod with resources will look like: ```yaml containers: - name: httpenv image: jpetazzo/httpenv resources: limits: memory: "100Mi" cpu: "100m" requests: memory: "100Mi" cpu: "10m" ``` This set of resources makes sure that this service won't be killed (as long as it stays below 100 MiB of RAM), but allows its CPU usage to be throttled if necessary.
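To make the suffix rules and the QoS classes concrete, here is a small bash sketch (using awk for the decimal arithmetic). The helpers `to_bytes`, `to_millicores` and `qos_class` are hypothetical, for illustration only: they handle just the suffixes listed above (not the full Kubernetes quantity syntax), and `qos_class` assumes a pod with a single container.

```bash
#!/usr/bin/env bash
# Hypothetical helpers, for illustration only (not part of kubectl).

# Memory quantity -> bytes, e.g. "100Mi" -> 104857600, "1G" -> 1000000000
to_bytes() {
  awk -v q="$1" 'BEGIN {
    n = q; sub(/[A-Za-z]+$/, "", n)      # numeric part, e.g. "100"
    s = substr(q, length(n) + 1)         # suffix, e.g. "Mi" (may be empty)
    f["k"] = 1e3;   f["M"] = 1e6;   f["G"] = 1e9;   f["T"] = 1e12
    f["Ki"] = 2^10; f["Mi"] = 2^20; f["Gi"] = 2^30; f["Ti"] = 2^40
    if (s == "") m = 1
    else if (s in f) m = f[s]
    else { print "unsupported suffix: " s > "/dev/stderr"; exit 1 }
    printf "%.0f\n", n * m
  }'
}

# CPU quantity -> millicores, e.g. "100m" -> 100, "0.5" -> 500, "2" -> 2000
to_millicores() {
  case "$1" in
    *m) echo "${1%m}" ;;
    *)  awk -v q="$1" 'BEGIN { printf "%.0f\n", q * 1000 }' ;;
  esac
}

# QoS class of a single-container pod.
# Arguments: CPU_REQUEST CPU_LIMIT MEMORY_REQUEST MEMORY_LIMIT ("" = not set)
qos_class() {
  local cpu_req=$1 cpu_lim=$2 mem_req=$3 mem_lim=$4
  if [ -z "$cpu_req$cpu_lim$mem_req$mem_lim" ]; then
    echo BestEffort
    return
  fi
  # A limit without a request: the request is set to the limit
  cpu_req=${cpu_req:-$cpu_lim}
  mem_req=${mem_req:-$mem_lim}
  # Guaranteed: CPU and memory limits are both set, and requests equal limits
  if [ -n "$cpu_lim" ] && [ -n "$mem_lim" ] &&
     [ "$(to_millicores "$cpu_req")" = "$(to_millicores "$cpu_lim")" ] &&
     [ "$(to_bytes "$mem_req")" = "$(to_bytes "$mem_lim")" ]; then
    echo Guaranteed
  else
    echo Burstable
  fi
}

to_bytes 100Mi                   # prints 104857600
qos_class 10m 100m 100Mi 100Mi   # the httpenv example: prints Burstable
```

Normalizing quantities first matters: `0.1` and `100m` are the same CPU amount, so a container with a request of `0.1` and a limit of `100m` still counts as *(limits=requests)*.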
.debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Default values - If we specify a limit without a request: the request is set to the limit - If we specify a request without a limit: there will be no limit (which means that the limit will be the size of the node) - If we don't specify anything: the request is zero and the limit is the size of the node *Unless there are default values defined for our namespace!* .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## We need default resource values - If we do not set resource values at all: - the limit is "the size of the node" - the request is zero - This is generally *not* what we want - a container without a limit can use up all the resources of a node - if the request is zero, the scheduler can't make a smart placement decision - To address this, we can set default values for resources - This is done with a LimitRange object .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- class: pic .interstitial[] --- name: toc-defining-min-max-and-default-resources class: title Defining min, max, and default resources .nav[ [Previous section](#toc-resource-limits) | [Back to table of contents](#toc-module-3) | [Next section](#toc-namespace-quotas) ] .debug[(automatically generated title slide)] --- # Defining min, max, and default resources - We can create LimitRange objects to indicate any combination of: - min and/or max resources allowed per pod - default resource *limits* - default resource *requests* - maximal burst ratio (*limit/request*) - LimitRange objects are namespaced - They apply to their namespace only .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## 
LimitRange example ```yaml apiVersion: v1 kind: LimitRange metadata: name: my-very-detailed-limitrange spec: limits: - type: Container min: cpu: "100m" max: cpu: "2000m" memory: "1Gi" default: cpu: "500m" memory: "250Mi" defaultRequest: cpu: "500m" ``` .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Example explanation The YAML on the previous slide shows an example LimitRange object specifying very detailed limits on CPU usage, and providing defaults on RAM usage. Note the `type: Container` line: in the future, it might also be possible to specify limits per Pod, but it's not [officially documented yet](https://github.com/kubernetes/website/issues/9585). .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## LimitRange details - LimitRange restrictions are enforced only when a Pod is created (they don't apply retroactively) - They don't prevent creation of e.g. 
an invalid Deployment or DaemonSet (but the pods will not be created as long as the LimitRange is in effect) - If there are multiple LimitRange restrictions, they all apply together (which means that it's possible to specify conflicting LimitRanges, <br/>preventing any Pod from being created) - If a LimitRange specifies a `max` for a resource but no `default`, <br/>that `max` value becomes the `default` limit too .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- class: pic .interstitial[] --- name: toc-namespace-quotas class: title Namespace quotas .nav[ [Previous section](#toc-defining-min-max-and-default-resources) | [Back to table of contents](#toc-module-3) | [Next section](#toc-limiting-resources-in-practice) ] .debug[(automatically generated title slide)] --- # Namespace quotas - We can also set quotas per namespace - Quotas apply to the total usage in a namespace (e.g. total CPU limits of all pods in a given namespace) - Quotas can apply to resource limits and/or requests (like the CPU and memory limits that we saw earlier) - Quotas can also apply to other resources: - "extended" resources (like GPUs) - storage size - number of objects (number of pods, services...) 
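A quota on total usage can be pictured as an admission check: a new pod is accepted only if the namespace total, including that pod, stays within the `hard` value. The `quota_admits` helper below is a hypothetical sketch that models a single quota (e.g. `limits.cpu`) with all values already converted to millicores; the real ResourceQuota admission controller checks every quota in the namespace and every resource they list.

```bash
#!/usr/bin/env bash
# Hypothetical helper: check a new pod against one quota, e.g. limits.cpu.
# Arguments: HARD NEW_POD_VALUE EXISTING_POD_VALUES... (all in millicores)
quota_admits() {
  local hard=$1 new=$2
  shift 2
  local used=0 value
  for value in "$@"; do
    used=$((used + value))
  done
  if [ $((used + new)) -le "$hard" ]; then
    echo "admitted"
  else
    echo "rejected: used=$used + new=$new exceeds hard=$hard"
  fi
}

# Namespace with limits.cpu hard = 2000m and two pods at 1000m and 500m
quota_admits 2000 500 1000 500   # 1500 + 500 <= 2000: admitted
quota_admits 2000 600 1000 500   # 1500 + 600 >  2000: rejected
```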
.debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Creating a quota for a namespace - Quotas are enforced by creating a ResourceQuota object - ResourceQuota objects are namespaced, and apply to their namespace only - We can have multiple ResourceQuota objects in the same namespace - The most restrictive values are used .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Limiting total CPU/memory usage - The following YAML specifies an upper bound for *limits* and *requests*: ```yaml apiVersion: v1 kind: ResourceQuota metadata: name: a-little-bit-of-compute spec: hard: requests.cpu: "10" requests.memory: 10Gi limits.cpu: "20" limits.memory: 20Gi ``` These quotas will apply to the namespace where the ResourceQuota is created. .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Limiting number of objects - The following YAML specifies how many objects of specific types can be created: ```yaml apiVersion: v1 kind: ResourceQuota metadata: name: quota-for-objects spec: hard: pods: 100 services: 10 secrets: 10 configmaps: 10 persistentvolumeclaims: 20 services.nodeports: 0 services.loadbalancers: 0 count/roles.rbac.authorization.k8s.io: 10 ``` (The `count/` syntax allows limiting arbitrary objects, including CRDs.) 
.debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## YAML vs CLI - Quotas can be created with a YAML definition - ...Or with the `kubectl create quota` command - Example: ```bash kubectl create quota my-resource-quota --hard=pods=300,limits.memory=300Gi ``` - In both the YAML and CLI forms, the values are always under the `hard` section (there is no `soft` quota) .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Viewing current usage When a ResourceQuota is created, we can see how much of it is used: ``` kubectl describe resourcequota my-resource-quota Name: my-resource-quota Namespace: default Resource Used Hard -------- ---- ---- pods 12 100 services 1 5 services.loadbalancers 0 0 services.nodeports 0 0 ``` .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Advanced quotas and PriorityClass - Since Kubernetes 1.12, it is possible to create PriorityClass objects - Pods can be assigned a PriorityClass - Quotas can be linked to a PriorityClass - This allows us to reserve resources for pods within a namespace - For more details, check [this documentation page](https://kubernetes.io/docs/concepts/policy/resource-quotas/#resource-quota-per-priorityclass) .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- class: pic .interstitial[] --- name: toc-limiting-resources-in-practice class: title Limiting resources in practice .nav[ [Previous section](#toc-namespace-quotas) | [Back to table of contents](#toc-module-3) | [Next section](#toc-checking-pod-and-node-resource-usage) ] .debug[(automatically generated title slide)] --- # Limiting resources in practice - We have at least three mechanisms: - requests and limits
per Pod - LimitRange per namespace - ResourceQuota per namespace - Let's see a simple recommendation to get started with resource limits .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Set a LimitRange - In each namespace, create a LimitRange object - Set a small default CPU request and CPU limit (e.g. "100m") - Set a default memory request and limit depending on your most common workload - for Java, Ruby: start with "1G" - for Go, Python, PHP, Node: start with "250M" - Set upper bounds slightly below your expected node size (80-90% of your node size, with at least a 500M memory buffer) .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Set a ResourceQuota - In each namespace, create a ResourceQuota object - Set generous CPU and memory limits (e.g. half the cluster size if the cluster hosts multiple apps) - Set generous object limits - these limits are not meant to constrain your users - they are meant to catch a runaway process creating many resources - example: a custom controller creating many pods .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Observe, refine, iterate - Observe the resource usage of your pods (we will see how in the next chapter) - Adjust individual pod limits - If you see trends: adjust the LimitRange (rather than adjusting every individual set of pod limits) - Observe the resource usage of your namespaces (with `kubectl describe resourcequota ...`) - Rinse and repeat regularly .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- ## Additional resources - [A Practical Guide to Setting Kubernetes Requests and Limits](http://blog.kubecost.com/blog/requests-and-limits/) -
explains what requests and limits are - provides guidelines to set requests and limits - gives PromQL expressions to compute good values <br/>(our app needs to be running for a while) - [Kube Resource Report](https://github.com/hjacobs/kube-resource-report/) - generates web reports on resource usage - [static demo](https://hjacobs.github.io/kube-resource-report/sample-report/output/index.html) | [live demo](https://kube-resource-report.demo.j-serv.de/applications.html) ??? :EN:- Setting compute resource limits :EN:- Defining default policies for resource usage :EN:- Managing cluster allocation and quotas :EN:- Resource management in practice :FR:- Allouer et limiter les ressources des conteneurs :FR:- Définir des ressources par défaut :FR:- Gérer les quotas de ressources au niveau du cluster :FR:- Conseils pratiques .debug[[k8s/resource-limits.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/resource-limits.md)] --- class: pic .interstitial[] --- name: toc-checking-pod-and-node-resource-usage class: title Checking pod and node resource usage .nav[ [Previous section](#toc-limiting-resources-in-practice) | [Back to table of contents](#toc-module-3) | [Next section](#toc-cluster-sizing) ] .debug[(automatically generated title slide)] --- # Checking pod and node resource usage - Since Kubernetes 1.8, metrics are collected by the [resource metrics pipeline](https://kubernetes.io/docs/tasks/debug-application-cluster/resource-metrics-pipeline/) - The resource metrics pipeline is: - optional (Kubernetes can function without it) - necessary for some features (like the Horizontal Pod Autoscaler) - exposed through the Kubernetes API using the [aggregation layer](https://kubernetes.io/docs/concepts/extend-kubernetes/api-extension/apiserver-aggregation/) - usually implemented by the "metrics server" .debug[[k8s/metrics-server.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/metrics-server.md)] --- ## 
How to know if the metrics server is running? - The easiest way to know is to run `kubectl top` .exercise[ - Check if the core metrics pipeline is available: ```bash kubectl top nodes ``` ] If it shows our nodes and their CPU and memory load, we're good! .debug[[k8s/metrics-server.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/metrics-server.md)] --- ## Installing metrics server - The metrics server doesn't have any particular requirements (it doesn't need persistence, as it doesn't *store* metrics) - It has its own repository, [kubernetes-incubator/metrics-server](https://github.com/kubernetes-incubator/metrics-server) - The repository comes with [YAML files for deployment](https://github.com/kubernetes-incubator/metrics-server/tree/master/deploy/1.8%2B) - These files may not work on some clusters (e.g. if your node names are not in DNS) - The container.training repository has a [metrics-server.yaml](https://github.com/jpetazzo/container.training/blob/master/k8s/metrics-server.yaml#L90) file to help with that (we can `kubectl apply -f` that file if needed) .debug[[k8s/metrics-server.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/metrics-server.md)] --- ## Showing container resource usage - Once the metrics server is running, we can check container resource usage .exercise[ - Show resource usage across all containers: ```bash kubectl top pods --containers --all-namespaces ``` ] - We can also use selectors (`-l app=...`) .debug[[k8s/metrics-server.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/metrics-server.md)] --- ## Other tools - kube-capacity is a great CLI tool to view resources (https://github.com/robscott/kube-capacity) - It can show resource requests and limits, and compare them with usage - It can show utilization per node, or per pod - kube-resource-report can generate HTML reports (https://github.com/hjacobs/kube-resource-report) ???
:EN:- The *core metrics pipeline* :FR:- Le *core metrics pipeline* .debug[[k8s/metrics-server.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/metrics-server.md)] --- class: pic .interstitial[] --- name: toc-cluster-sizing class: title Cluster sizing .nav[ [Previous section](#toc-checking-pod-and-node-resource-usage) | [Back to table of contents](#toc-module-3) | [Next section](#toc-the-horizontal-pod-autoscaler) ] .debug[(automatically generated title slide)] --- # Cluster sizing - What happens when the cluster gets full? - How can we scale up the cluster? - Can we do it automatically? - What are other methods to address capacity planning? .debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- ## When are we out of resources? - kubelet monitors node resources: - memory - node disk usage (typically the root filesystem of the node) - image disk usage (where container images and RW layers are stored) - For each resource, we can provide two thresholds: - a hard threshold (if it's met, it provokes immediate action) - a soft threshold (provokes action only after a grace period) - Resource thresholds and grace periods are configurable (by passing kubelet command-line flags) .debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- ## What happens then? - If disk usage is too high: - kubelet will try to remove terminated pods - then, it will try to *evict* pods - If memory usage is too high: - it will try to evict pods - The node is marked as "under pressure" - This temporarily prevents new pods from being scheduled on the node .debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- ## Which pods get evicted? 
- kubelet looks at the pods' QoS and PriorityClass - First, pods with BestEffort QoS are considered - Then, pods with Burstable QoS exceeding their *requests* (but only if the exceeding resource is the one that is low on the node) - Finally, pods with Guaranteed QoS, and Burstable pods within their requests - Within each group, pods are sorted by PriorityClass - If there are pods with the same PriorityClass, they are sorted by usage excess (i.e. the pods whose usage exceeds their requests the most are evicted first) .debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- class: extra-details ## Eviction of Guaranteed pods - *Normally*, pods with Guaranteed QoS should not be evicted - A chunk of resources is reserved for node processes (like kubelet) - It is expected that these processes won't use more than this reservation - If they do use more resources anyway, all bets are off! - If this happens, kubelet must evict Guaranteed pods to preserve node stability (or Burstable pods that are still within their requested usage) .debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- ## What happens to evicted pods? - The pod is terminated - It is marked as `Failed` at the API level - If the pod was created by a controller, the controller will recreate it - The pod will be recreated on another node, *if there are resources available!* - For more details about the eviction process, see: - [this documentation page](https://kubernetes.io/docs/tasks/administer-cluster/out-of-resource/) about resource pressure and pod eviction, - [this other documentation page](https://kubernetes.io/docs/concepts/configuration/pod-priority-preemption/) about pod priority and preemption. 
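The ranking from the "Which pods get evicted?" slide can be sketched with `awk` and `sort`. This is a simplified model of that description, not kubelet's actual code: the input format (one pod per line with its name, QoS class, priority value, and usage minus request for the resource that is running low) is an assumption made for this example, and pods with lower priority values are ranked first.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read "NAME QOS PRIORITY EXCESS" lines on stdin and
# print pod names in eviction order (first to be evicted first).
# EXCESS = usage minus request for the resource that is low on the node.
eviction_order() {
  awk '{
    if ($2 == "BestEffort")                 group = 0   # evicted first
    else if ($2 == "Burstable" && $4 > 0)   group = 1   # above their requests
    else                                    group = 2   # Guaranteed, or Burstable within requests
    print group, $3, $4, $1
  }' |
    sort -k1,1n -k2,2n -k3,3nr |   # group, then lowest priority, then largest excess
    awk '{ print $4 }'
}

# Prints worker, cache, batch, web, db (one per line)
printf '%s\n' \
  "web    Burstable  1000 200" \
  "cache  BestEffort 0    50" \
  "db     Guaranteed 2000 0" \
  "batch  Burstable  0    300" \
  "worker BestEffort 0    500" |
  eviction_order
```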
.debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- ## What if there are no resources available? - Sometimes, a pod cannot be scheduled anywhere: - all the nodes are under pressure, - or the pod requests more resources than are available - The pod then remains in `Pending` state until the situation improves .debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- ## Cluster scaling - One way to improve the situation is to add new nodes - This can be done automatically with the [Cluster Autoscaler](https://github.com/kubernetes/autoscaler/tree/master/cluster-autoscaler) - The autoscaler will automatically scale up: - if there are pods that failed to be scheduled - The autoscaler will automatically scale down: - if nodes have a low utilization for an extended period of time .debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- ## Restrictions, gotchas ... - The Cluster Autoscaler only supports a few cloud infrastructures (see [here](https://github.com/kubernetes/autoscaler/tree/master/cluster-autoscaler/cloudprovider) for a list) - The Cluster Autoscaler cannot scale down nodes that have pods using: - local storage - affinity/anti-affinity rules preventing them from being rescheduled - a restrictive PodDisruptionBudget .debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- ## Other ways to do capacity planning - "Running Kubernetes without nodes" - Systems like [Virtual Kubelet](https://virtual-kubelet.io/) or [Kiyot](https://static.elotl.co/docs/latest/kiyot/kiyot.html) can run pods using on-demand resources - Virtual Kubelet can leverage e.g.
ACI or Fargate to run pods - Kiyot runs pods in ad-hoc EC2 instances (1 instance per pod) - Economic advantage (no wasted capacity) - Security advantage (stronger isolation between pods) Check [this blog post](http://jpetazzo.github.io/2019/02/13/running-kubernetes-without-nodes-with-kiyot/) for more details. ??? :EN:- What happens when the cluster is at, or over, capacity :EN:- Cluster sizing and scaling :FR:- Ce qui se passe quand il n'y a plus assez de ressources :FR:- Dimensionner et redimensionner ses clusters .debug[[k8s/cluster-sizing.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/cluster-sizing.md)] --- class: pic .interstitial[] --- name: toc-the-horizontal-pod-autoscaler class: title The Horizontal Pod Autoscaler .nav[ [Previous section](#toc-cluster-sizing) | [Back to table of contents](#toc-module-3) | [Next section](#toc-extending-the-kubernetes-api) ] .debug[(automatically generated title slide)] --- # The Horizontal Pod Autoscaler - What is the Horizontal Pod Autoscaler, or HPA? - It is a controller that can perform *horizontal* scaling automatically - Horizontal scaling = changing the number of replicas (adding/removing pods) - Vertical scaling = changing the size of individual replicas (increasing/reducing CPU and RAM per pod) - Cluster scaling = changing the size of the cluster (adding/removing nodes) .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## Principle of operation - Each HPA resource (or "policy") specifies: - which object to monitor and scale (e.g. a Deployment, ReplicaSet...) - min/max scaling ranges (the max is a safety limit!) - a target resource usage (e.g. 
CPU=80%, which is the default) - The HPA continuously monitors the CPU usage for the related object - It computes how many pods should be running: `TargetNumOfPods = ceil(sum(CurrentPodsCPUUtilization) / Target)` - It scales the related object up/down to this target number of pods .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## Prerequisites - The metrics server needs to be running (i.e. we need to be able to see pod metrics with `kubectl top pods`) - The pods that we want to autoscale need to have resource requests (because the target CPU% is not absolute, but relative to the request) - The latter actually makes a lot of sense: - if a Pod doesn't have a CPU request, it might be using 10% of CPU... - ...but only because there is no CPU time available! - this makes sure that we won't add pods to nodes that are already resource-starved .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## Testing the HPA - We will start a CPU-intensive web service - We will send some traffic to that service - We will create an HPA policy - The HPA will automatically scale up the service for us .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## A CPU-intensive web service - Let's use `jpetazzo/busyhttp` (it is a web server that will use 1s of CPU for each HTTP request) .exercise[ - Deploy the web server: ```bash kubectl create deployment busyhttp --image=jpetazzo/busyhttp ``` - Expose it with a ClusterIP service: ```bash kubectl expose deployment busyhttp --port=80 ``` - Get the ClusterIP allocated to the service: ```bash kubectl get svc busyhttp ``` ]
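The `TargetNumOfPods` formula from the "Principle of operation" slide can be worked through with integer arithmetic in bash. The `hpa_target` helper below is hypothetical and deliberately simplified: it takes utilization values as percentages of each pod's CPU request, rounds up, and clamps the result to the min/max range, but it ignores the tolerance band and the stabilization delays that the real controller applies.

```bash
#!/usr/bin/env bash
# Hypothetical helper: desired replica count for a CPU-based HPA policy.
# Arguments: MIN MAX TARGET_PERCENT, then one UTILIZATION_PERCENT per pod
# (utilization is relative to the pod's CPU request).
hpa_target() {
  local min=$1 max=$2 target=$3
  shift 3
  local sum=0 u
  for u in "$@"; do
    sum=$((sum + u))
  done
  # ceil(sum / target) with integer arithmetic
  local n=$(( (sum + target - 1) / target ))
  # Clamp to the [min, max] range of the policy
  if [ "$n" -lt "$min" ]; then n=$min; fi
  if [ "$n" -gt "$max" ]; then n=$max; fi
  echo "$n"
}

# 3 pods, each using 100% of its CPU request, with an 80% target
hpa_target 1 10 80 100 100 100   # ceil(300 / 80) = 4
```

This also shows why a CPU request is needed: without one, there is no reference value to express each pod's utilization as a percentage.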
.debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## Monitor what's going on - Let's start a bunch of commands to watch what is happening .exercise[ - Monitor pod CPU usage: ```bash watch kubectl top pods -l app=busyhttp ``` <!-- ```wait NAME``` ```tmux split-pane -v``` ```bash CLUSTERIP=$(kubectl get svc busyhttp -o jsonpath={.spec.clusterIP})``` --> - Monitor service latency: ```bash httping http://`$CLUSTERIP`/ ``` <!-- ```wait connected to``` ```tmux split-pane -v``` --> - Monitor cluster events: ```bash kubectl get events -w ``` <!-- ```wait Normal``` ```tmux split-pane -v``` ```bash CLUSTERIP=$(kubectl get svc busyhttp -o jsonpath={.spec.clusterIP})``` --> ] .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## Send traffic to the service - We will use `ab` (Apache Bench) to send traffic .exercise[ - Send a lot of requests to the service, with a concurrency level of 3: ```bash ab -c 3 -n 100000 http://`$CLUSTERIP`/ ``` <!-- ```wait be patient``` ```tmux split-pane -v``` ```tmux selectl even-vertical``` --> ] The latency (reported by `httping`) should increase above 3s. The CPU utilization should increase to 100%. (The server is single-threaded and won't go above 100%.) .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## Create an HPA policy - There is a helper command to do that for us: `kubectl autoscale` .exercise[ - Create the HPA policy for the `busyhttp` deployment: ```bash kubectl autoscale deployment busyhttp --max=10 ``` ] By default, it will assume a target of 80% CPU usage. This can also be set with `--cpu-percent=`. -- *The autoscaler doesn't seem to work. 
Why?* .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## What did we miss? - The events stream gives us a hint, but to be honest, it's not very clear: `missing request for cpu` - We forgot to specify a resource request for our Deployment! - The HPA target is not an absolute CPU% - It is relative to the CPU requested by the pod .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## Adding a CPU request - Let's edit the deployment and add a CPU request - Since our server can use up to 1 core, let's request 1 core .exercise[ - Edit the Deployment definition: ```bash kubectl edit deployment busyhttp ``` <!-- ```wait Please edit``` ```keys /resources``` ```key ^J``` ```keys $xxxo requests:``` ```key ^J``` ```key Space``` ```key Space``` ```keys cpu: "1"``` ```key Escape``` ```keys :wq``` ```key ^J``` --> - In the `containers` list, add the following block: ```yaml resources: requests: cpu: "1" ``` ] .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## Results - After saving and quitting, a rolling update happens (if `ab` or `httping` exits, make sure to restart it) - It will take a minute or two for the HPA to kick in: - the HPA runs every 30 seconds by default - it needs to gather metrics from the metrics server first - If we scale further up (or down), the HPA will react after a few minutes: - it won't scale up if it already scaled in the last 3 minutes - it won't scale down if it already scaled in the last 5 minutes .debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)] --- ## What about other metrics? 
- The HPA in API group `autoscaling/v1` only supports CPU scaling

- The HPA in API group `autoscaling/v2beta2` supports metrics from various API groups:

  - metrics.k8s.io, aka metrics server (per-Pod CPU and RAM)

  - custom.metrics.k8s.io, custom metrics per Pod

  - external.metrics.k8s.io, external metrics (not associated with Pods)

- Kubernetes doesn't implement any of these API groups

- Using these metrics requires [registering additional APIs](https://kubernetes.io/docs/tasks/run-application/horizontal-pod-autoscale/#support-for-metrics-apis)

- The metrics provided by metrics server are standard; everything else is custom

- For more details, see [this great blog post](https://medium.com/uptime-99/kubernetes-hpa-autoscaling-with-custom-and-external-metrics-da7f41ff7846) or [this talk](https://www.youtube.com/watch?v=gSiGFH4ZnS8)

.debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)]
---

## Cleanup

- Since `busyhttp` uses CPU cycles, let's stop it before moving on

.exercise[

- Delete the `busyhttp` Deployment:
  ```bash
  kubectl delete deployment busyhttp
  ```

<!--
```key ^D```
```key ^C```
```key ^D```
```key ^C```
```key ^D```
```key ^C```
```key ^D```
```key ^C```
-->

]

???

:EN:- Auto-scaling resources
:FR:- *Auto-scaling* (dimensionnement automatique) des ressources

.debug[[k8s/horizontal-pod-autoscaler.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/horizontal-pod-autoscaler.md)]
---

class: pic

.interstitial[]

---

name: toc-extending-the-kubernetes-api
class: title

Extending the Kubernetes API

.nav[
[Previous section](#toc-the-horizontal-pod-autoscaler)
|
[Back to table of contents](#toc-module-3)
|
[Next section](#toc-operators)
]

.debug[(automatically generated title slide)]

---
# Extending the Kubernetes API

There are multiple ways to extend the Kubernetes API.
We are going to cover:

- Custom Resource Definitions (CRDs)

- Admission Webhooks

- The Aggregation Layer

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Revisiting the API server

- The Kubernetes API server is a central point of the control plane

  (everything connects to it: controller manager, scheduler, kubelets)

- Almost everything in Kubernetes is materialized by a resource

- Resources have a type (or "kind")

  (similar to strongly typed languages)

- We can see existing types with `kubectl api-resources`

- We can list resources of a given type with `kubectl get <type>`

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Creating new types

- We can create new types with Custom Resource Definitions (CRDs)

- CRDs are created dynamically

  (without recompiling or restarting the API server)

- CRDs themselves are resources:

  - we can create a new type with `kubectl create` and some YAML

  - we can see all our custom types with `kubectl get crds`

- After we create a CRD, the new type works just like built-in types

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## A very simple CRD

The YAML below describes a very simple CRD representing different kinds of coffee:

```yaml
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: coffees.container.training
spec:
  group: container.training
  version: v1alpha1
  scope: Namespaced
  names:
    plural: coffees
    singular: coffee
    kind: Coffee
    shortNames:
    - cof
```

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Creating a CRD

- Let's create the Custom Resource Definition for our Coffee resource

.exercise[

- Load the CRD:
  ```bash
  kubectl apply -f ~/container.training/k8s/coffee-1.yaml
  ```

- Confirm that it shows up:
  ```bash
  kubectl get crds
  ```

]

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Creating custom resources

The YAML below defines a resource using the CRD that we just created:

```yaml
kind: Coffee
apiVersion: container.training/v1alpha1
metadata:
  name: arabica
spec:
  taste: strong
```

.exercise[

- Create a few types of coffee beans:
  ```bash
  kubectl apply -f ~/container.training/k8s/coffees.yaml
  ```

]

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Viewing custom resources

- By default, `kubectl get` only shows name and age of custom resources

.exercise[

- View the coffee beans that we just created:
  ```bash
  kubectl get coffees
  ```

]

- We can improve that, but it's outside the scope of this section!

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## What can we do with CRDs?

There are many possibilities!

- *Operators* encapsulate complex sets of resources

  (e.g.: a PostgreSQL replicated cluster; an etcd cluster...
  <br/>
  see [awesome operators](https://github.com/operator-framework/awesome-operators) and
  [OperatorHub](https://operatorhub.io/) to find more)

- Custom use-cases like [gitkube](https://gitkube.sh/)

  - creates a new custom type, `Remote`, exposing a git+ssh server

  - deploy by pushing YAML or Helm charts to that remote

- Replacing built-in types with CRDs

  (see [this lightning talk by Tim Hockin](https://www.youtube.com/watch?v=ji0FWzFwNhA))

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Little details

- By default, CRDs are not *validated*

  (we can put anything we want in the `spec`)

- When creating a CRD, we can pass an OpenAPI v3 schema (BETA!)

  (which will then be used to validate resources)

- Generally, when creating a CRD, we also want to run a *controller*

  (otherwise nothing will happen when we create resources of that type)

- The controller will typically *watch* our custom resources

  (and take action when they are created/updated)

*Examples:
[YAML to install the gitkube CRD](https://storage.googleapis.com/gitkube/gitkube-setup-stable.yaml),
[YAML to install a redis operator CRD](https://github.com/amaizfinance/redis-operator/blob/master/deploy/crds/k8s_v1alpha1_redis_crd.yaml)*

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## (Ab)using the API server

- If we need to store something "safely" (as in: in etcd), we can use CRDs

- This gives us primitives to read/write/list objects (and optionally validate them)

- The Kubernetes API server can run on its own

  (without the scheduler, controller manager, and kubelets)

- By loading CRDs, we can have it manage totally different objects

  (unrelated to containers, clusters, etc.)
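
The validation point from "Little details" can be made concrete. Below is a sketch of the coffee CRD rewritten for `apiextensions.k8s.io/v1`. It assumes Kubernetes 1.16 or later; in that API version every version entry *must* carry a schema. With this schema:

- fields that are not declared are pruned;
- a value of the wrong type (e.g. `taste: 42`) is rejected by the API server.

```yaml
apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  name: coffees.container.training
spec:
  group: container.training
  scope: Namespaced
  names:
    plural: coffees
    singular: coffee
    kind: Coffee
    shortNames:
    - cof
  versions:
  - name: v1alpha1
    served: true      # this version is exposed by the API server
    storage: true     # and it is the version persisted in etcd
    schema:
      openAPIV3Schema:
        type: object
        properties:
          spec:
            type: object
            properties:
              taste:
                type: string
```
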
.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Service catalog

- *Service catalog* is another extension mechanism

- It's not extending the Kubernetes API strictly speaking

  (but it still provides new features!)

- It doesn't create new types; it uses:

  - ClusterServiceBroker
  - ClusterServiceClass
  - ClusterServicePlan
  - ServiceInstance
  - ServiceBinding

- It uses the Open Service Broker API

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Admission controllers

- Admission controllers are another way to extend the Kubernetes API

- Instead of creating new types, admission controllers can transform or vet API requests

- The diagram on the next slide shows the path of an API request

  (courtesy of Banzai Cloud)

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

class: pic

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Types of admission controllers

- *Validating* admission controllers can accept/reject the API call

- *Mutating* admission controllers can modify the API request payload

- Both types can also trigger additional actions

  (e.g. automatically create a Namespace if it doesn't exist)

- There are a number of built-in admission controllers

  (see [documentation](https://kubernetes.io/docs/reference/access-authn-authz/admission-controllers/#what-does-each-admission-controller-do) for a list)

- We can also dynamically define and register our own

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

class: extra-details

## Some built-in admission controllers

- ServiceAccount:

  automatically adds a ServiceAccount to Pods that don't explicitly specify one

- LimitRanger:

  applies resource constraints specified by LimitRange objects when Pods are created

- NamespaceAutoProvision:

  automatically creates namespaces when an object is created in a non-existent namespace

*Note: ServiceAccount and LimitRanger are enabled by default; NamespaceAutoProvision is not.*

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Admission Webhooks

- We can set up *admission webhooks* to extend the behavior of the API server

- The API server will submit incoming API requests to these webhooks

- These webhooks can be *validating* or *mutating*

- Webhooks can be set up dynamically (without restarting the API server)

- To set up a dynamic admission webhook, we create a special resource:

  a `ValidatingWebhookConfiguration` or a `MutatingWebhookConfiguration`

- These resources are created and managed like other resources

  (e.g. `kubectl create`, `kubectl get`...)

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Webhook Configuration

- A ValidatingWebhookConfiguration or MutatingWebhookConfiguration contains:

  - the address of the webhook

  - the authentication information to use with the webhook

  - a list of rules

- The rules indicate for which objects and actions the webhook is triggered

  (to avoid e.g. triggering webhooks when setting up webhooks)

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## The aggregation layer

- We can delegate entire parts of the Kubernetes API to external servers

- This is done by creating APIService resources

  (check them with `kubectl get apiservices`!)

- Each APIService resource maps an API group and version to an external service

- All requests for that API group and version are sent (proxied) to the external service

- This lets us have resources similar to CRDs, but that aren't stored in etcd

- Example: `metrics-server`

  (storing live metrics in etcd would be extremely inefficient)

- Requires significantly more work than CRDs!

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

## Documentation

- [Custom Resource Definitions: when to use them](https://kubernetes.io/docs/concepts/extend-kubernetes/api-extension/custom-resources/)

- [Custom Resource Definitions: how to use them](https://kubernetes.io/docs/tasks/access-kubernetes-api/custom-resources/custom-resource-definitions/)

- [Service Catalog](https://kubernetes.io/docs/concepts/extend-kubernetes/service-catalog/)

- [Built-in Admission Controllers](https://kubernetes.io/docs/reference/access-authn-authz/admission-controllers/)

- [Dynamic Admission Controllers](https://kubernetes.io/docs/reference/access-authn-authz/extensible-admission-controllers/)

- [Aggregation Layer](https://kubernetes.io/docs/concepts/extend-kubernetes/api-extension/apiserver-aggregation/)

???
:EN:- Extending the Kubernetes API
:EN:- Custom Resource Definitions (CRDs)
:EN:- The aggregation layer
:EN:- Admission control and webhooks

:FR:- Comment étendre l'API Kubernetes
:FR:- Les CRDs *(Custom Resource Definitions)*
:FR:- Extension via *aggregation layer*, *admission control*, *webhooks*

.debug[[k8s/extending-api.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/extending-api.md)]
---

class: pic

.interstitial[]

---

name: toc-operators
class: title

Operators

.nav[
[Previous section](#toc-extending-the-kubernetes-api)
|
[Back to table of contents](#toc-module-3)
|
[Next section](#toc-volumes)
]

.debug[(automatically generated title slide)]

---
# Operators

- Operators are one of the many ways to extend Kubernetes

- We will define operators

- We will see how they work

- We will install a specific operator (for ElasticSearch)

- We will use it to provision an ElasticSearch cluster

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## What are operators?

*An operator represents
**human operational knowledge in software,**
<br/>
to reliably manage an application.
— [CoreOS](https://coreos.com/blog/introducing-operators.html)*

Examples:

- Deploying and configuring replication with MySQL, PostgreSQL ...

- Setting up Elasticsearch, Kafka, RabbitMQ, Zookeeper ...

- Reacting to failures when intervention is needed

- Scaling up and down these systems

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## What are they made from?
- Operators combine two things:

  - Custom Resource Definitions

  - controller code watching the corresponding resources and acting upon them

- A given operator can define one or multiple CRDs

- The controller code (control loop) typically runs within the cluster

  (running as a Deployment with 1 replica is a common scenario)

- But it could also run elsewhere

  (nothing mandates that the code run on the cluster, as long as it has API access)

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Why use operators?

- Kubernetes gives us Deployments, StatefulSets, Services ...

- These mechanisms give us building blocks to deploy applications

- They work great for services that are made of *N* identical containers

  (like stateless ones)

- They also work great for some stateful applications like Consul, etcd ...

  (with the help of highly persistent volumes)

- They're not enough for complex services:

  - where different containers have different roles

  - where extra steps have to be taken when scaling or replacing containers

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Use-cases for operators

- Systems with primary/secondary replication

  Examples: MariaDB, MySQL, PostgreSQL, Redis ...

- Systems where different groups of nodes have different roles

  Examples: ElasticSearch, MongoDB ...
- Systems with complex dependencies (that are themselves managed with operators)

  Examples: Flink or Kafka, which both depend on Zookeeper

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## More use-cases

- Representing and managing external resources

  (Example: [AWS S3 Operator](https://operatorhub.io/operator/awss3-operator-registry))

- Managing complex cluster add-ons

  (Example: [Istio operator](https://operatorhub.io/operator/istio))

- Deploying and managing our applications' lifecycles

  (more on that later)

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## How operators work

- An operator creates one or more CRDs

  (i.e., it creates new "Kinds" of resources on our cluster)

- The operator also runs a *controller* that will watch its resources

- Each time we create/update/delete a resource, the controller is notified

  (we could write our own cheap controller with `kubectl get --watch`)

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## One operator in action

- We will install [Elastic Cloud on Kubernetes](https://www.elastic.co/guide/en/cloud-on-k8s/current/k8s-quickstart.html), an ElasticSearch operator

- This operator requires PersistentVolumes

- We will install Rancher's [local path storage provisioner](https://github.com/rancher/local-path-provisioner) to automatically create these

- Then, we will create an ElasticSearch resource

- The operator will detect that resource and provision the cluster

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Installing a Persistent Volume provisioner

(This step can be skipped if you already have a dynamic volume provisioner.)
- This provisioner creates Persistent Volumes backed by `hostPath`

  (local directories on our nodes)

- It doesn't require anything special ...

- ... But losing a node = losing the volumes on that node!

.exercise[

- Install the local path storage provisioner:
  ```bash
  kubectl apply -f ~/container.training/k8s/local-path-storage.yaml
  ```

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Making sure we have a default StorageClass

- The ElasticSearch operator will create StatefulSets

- These StatefulSets will instantiate PersistentVolumeClaims

- These PVCs need to be explicitly associated with a StorageClass

- Or we need to tag a StorageClass to be used as the default one

.exercise[

- List StorageClasses:
  ```bash
  kubectl get storageclasses
  ```

]

We should see the `local-path` StorageClass.

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Setting a default StorageClass

- This is done by adding an annotation to the StorageClass:

  `storageclass.kubernetes.io/is-default-class: "true"`

.exercise[

- Tag the StorageClass so that it's the default one:
  ```bash
  kubectl annotate storageclass local-path \
          storageclass.kubernetes.io/is-default-class=true
  ```

- Check the result:
  ```bash
  kubectl get storageclasses
  ```

]

Now, the StorageClass should have `(default)` next to its name.
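
The same annotation can also be set declaratively. Note the quotes around `"true"`: annotation values must be strings. Once a default class exists, PersistentVolumeClaims that don't set `storageClassName` will use it.

The sketch below makes two assumptions about the `local-path` StorageClass installed by `local-path-storage.yaml`:

- it uses the `rancher.io/local-path` provisioner,
- it uses `WaitForFirstConsumer` binding.

Compare with `kubectl get storageclass local-path -o yaml` first. These fields can't be changed on an existing StorageClass, so applying mismatched values would fail.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: local-path
  annotations:
    # Annotation values are strings, so "true" must be quoted
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: rancher.io/local-path        # assumption: check the installed manifest
volumeBindingMode: WaitForFirstConsumer   # assumption: check the installed manifest
reclaimPolicy: Delete
```
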
.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Install the ElasticSearch operator

- The operator provides:

  - a few CustomResourceDefinitions
  - a Namespace for its other resources
  - a ValidatingWebhookConfiguration for type checking
  - a StatefulSet for its controller and webhook code
  - a ServiceAccount, ClusterRole, ClusterRoleBinding for permissions

- All these resources are grouped in a convenient YAML file

.exercise[

- Install the operator:
  ```bash
  kubectl apply -f ~/container.training/k8s/eck-operator.yaml
  ```

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Check our new custom resources

- Let's see which CRDs were created

.exercise[

- List all CRDs:
  ```bash
  kubectl get crds
  ```

]

This operator supports ElasticSearch, but also Kibana and APM. Cool!

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Create the `eck-demo` namespace

- For clarity, we will create everything in a new namespace, `eck-demo`

- This namespace is hard-coded in the YAML files that we are going to use

- We need to create that namespace

.exercise[

- Create the `eck-demo` namespace:
  ```bash
  kubectl create namespace eck-demo
  ```

- Switch to that namespace:
  ```bash
  kns eck-demo
  ```

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

class: extra-details

## Can we use a different namespace?

Yes, but then we need to update all the YAML manifests that we
are going to apply in the next slides.

The `eck-demo` namespace is hard-coded in these YAML manifests.

Why?

Because when defining a ClusterRoleBinding that references a
ServiceAccount, we have to indicate in which namespace the
ServiceAccount is located.
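
Here is a sketch of what such a binding looks like. The binding, ClusterRole, and ServiceAccount names are hypothetical; they are not taken from the actual manifests. What matters is the `namespace` field under `subjects`. The ClusterRoleBinding itself is cluster-scoped, so that field is the only place where the ServiceAccount's namespace appears.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: demo-binding          # hypothetical name
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: demo-role             # hypothetical ClusterRole
subjects:
- kind: ServiceAccount
  name: demo-sa               # hypothetical ServiceAccount
  namespace: eck-demo         # must match the namespace where the ServiceAccount lives
```
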
.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Create an ElasticSearch resource

- We can now create a resource with `kind: Elasticsearch`

- The YAML for that resource will specify all the desired parameters:

  - how many nodes we want
  - image to use
  - add-ons (kibana, cerebro, ...)
  - whether to use TLS or not
  - etc.

.exercise[

- Create our ElasticSearch cluster:
  ```bash
  kubectl apply -f ~/container.training/k8s/eck-elasticsearch.yaml
  ```

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Operator in action

- Over the next few minutes, the operator will create our ES cluster

- It will report our cluster status through the CRD

.exercise[

- Check the logs of the operator:
  ```bash
  stern --namespace=elastic-system operator
  ```

<!--
```wait elastic-operator-0```
```tmux split-pane -v```
-->

- Watch the status of the cluster through the CRD:
  ```bash
  kubectl get es -w
  ```

<!--
```longwait green```
```key ^C```
```key ^D```
```key ^C```
-->

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Connecting to our cluster

- It's not easy to use the ElasticSearch API from the shell

- But let's at least check whether ElasticSearch is up!

.exercise[

- Get the ClusterIP of our ES instance:
  ```bash
  kubectl get services
  ```

- Issue a request with `curl`:
  ```bash
  curl http://`CLUSTERIP`:9200
  ```

]

We get an authentication error. Our cluster is protected!
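
The slides don't show `eck-elasticsearch.yaml`. As a rough sketch (not the actual file), an ECK resource consistent with what we see here could look like the YAML below: a cluster named `demo`, a single node, and plain HTTP on port 9200. The Elasticsearch version and the `node.store.allow_mmap` setting are assumptions.

```yaml
apiVersion: elasticsearch.k8s.elastic.co/v1
kind: Elasticsearch
metadata:
  name: demo
  namespace: eck-demo
spec:
  version: 7.9.1                    # assumption: any version supported by the operator
  http:
    tls:
      selfSignedCertificate:
        disabled: true              # serve plain HTTP, as in the curl command above
  nodeSets:
  - name: default
    count: 1                        # number of ElasticSearch nodes in this group
    config:
      # assumption: avoids having to raise vm.max_map_count on the nodes
      node.store.allow_mmap: false
```

ECK derives the names of related objects from the resource name. For example, a resource named `demo` should yield the `demo-es-elastic-user` Secret used on the next slide.
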
.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Obtaining the credentials

- The operator creates a user named `elastic`

- It generates a random password and stores it in a Secret

.exercise[

- Extract the password:
  ```bash
  kubectl get secret demo-es-elastic-user \
          -o go-template="{{ .data.elastic | base64decode }} "
  ```

- Use it to connect to the API:
  ```bash
  curl -u elastic:`PASSWORD` http://`CLUSTERIP`:9200
  ```

]

We should see a JSON payload with the `"You Know, for Search"` tagline.

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Sending data to the cluster

- Let's send some data to our brand new ElasticSearch cluster!

- We'll deploy a filebeat DaemonSet to collect node logs

.exercise[

- Deploy filebeat:
  ```bash
  kubectl apply -f ~/container.training/k8s/eck-filebeat.yaml
  ```

- Wait until some pods are up:
  ```bash
  watch kubectl get pods -l k8s-app=filebeat
  ```

<!--
```wait Running```
```key ^C```
-->

- Check that a filebeat index was created:
  ```bash
  curl -u elastic:`PASSWORD` http://`CLUSTERIP`:9200/_cat/indices
  ```

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Deploying an instance of Kibana

- Kibana can visualize the logs injected by filebeat

- The ECK operator can also manage Kibana

- Let's give it a try!
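
Here is a hedged sketch of a `Kibana` resource consistent with the next slides; it is not the actual content of `eck-kibana.yaml`. `elasticsearchRef` asks the operator to wire Kibana to the `demo` cluster, and the Service type is set to NodePort. The version and TLS settings are assumptions.

```yaml
apiVersion: kibana.k8s.elastic.co/v1
kind: Kibana
metadata:
  name: demo
  namespace: eck-demo
spec:
  version: 7.9.1              # assumption: should match the Elasticsearch version
  count: 1
  elasticsearchRef:
    name: demo                # the operator configures address and credentials for this cluster
  http:
    service:
      spec:
        type: NodePort        # expose Kibana outside the cluster
    tls:
      selfSignedCertificate:
        disabled: true        # assumption: plain HTTP
```
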
.exercise[

- Deploy a Kibana instance:
  ```bash
  kubectl apply -f ~/container.training/k8s/eck-kibana.yaml
  ```

- Wait for it to be ready:
  ```bash
  kubectl get kibana -w
  ```

<!--
```longwait green```
```key ^C```
-->

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Connecting to Kibana

- Kibana is automatically set up to connect to ElasticSearch

  (this is arranged by the YAML that we're using)

- However, it will ask for authentication

- It's using the same user/password as ElasticSearch

.exercise[

- Get the NodePort allocated to Kibana:
  ```bash
  kubectl get services
  ```

- Connect to it with a web browser

- Use the same user/password as before

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Setting up Kibana

After the Kibana UI loads, we need to click around a bit

.exercise[

- Pick "explore on my own"

- Click on "Use Elasticsearch data / Connect to your Elasticsearch index"

- Enter `filebeat-*` for the index pattern and click "Next step"

- Select `@timestamp` as time filter field name

- Click on "discover"

  (the small icon looking like a compass on the left bar)

- Play around!

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Scaling up the cluster

- At this point, we have only one node

- We are going to scale up

- But first, we'll deploy Cerebro, a UI for ElasticSearch

- This will let us see the state of the cluster, how indexes are sharded, etc.

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Deploying Cerebro

- Cerebro is stateless, so it's fairly easy to deploy

  (one Deployment + one Service)

- However, it needs the address and credentials for ElasticSearch

- We prepared yet another manifest for that!
.exercise[

- Deploy Cerebro:
  ```bash
  kubectl apply -f ~/container.training/k8s/eck-cerebro.yaml
  ```

- Look up the NodePort number and connect to it:
  ```bash
  kubectl get services
  ```

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Scaling up the cluster

- We can see on Cerebro that the cluster is "yellow"

  (because our index is not replicated)

- Let's change that!

.exercise[

- Edit the ElasticSearch cluster manifest:
  ```bash
  kubectl edit es demo
  ```

- Find the field `count: 1` and change it to 3

- Save and quit

<!--
```wait Please edit```
```keys /count:```
```key ^J```
```keys $r3:x```
```key ^J```
-->

]

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Deploying our apps with operators

- It is very simple to deploy with `kubectl create deployment` / `kubectl expose`

- We can unlock more features by writing YAML and using `kubectl apply`

- Kustomize or Helm let us deploy in multiple environments

  (and adjust/tweak parameters in each environment)

- We can also use an operator to deploy our application

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Pros and cons of deploying with operators

- The app definition and configuration are persisted in the Kubernetes API

- Multiple instances of the app can be manipulated with `kubectl get`

- We can add labels and annotations to the app instances

- Our controller can execute custom code for any lifecycle event

- However, we need to write this controller

- We need to be careful about changes

  (what happens when the resource `spec` is updated?)
.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Operators are not magic

- Look at the ElasticSearch resource definition

  (`~/container.training/k8s/eck-elasticsearch.yaml`)

- What should happen if we flip the TLS flag? Twice?

- What should happen if we add another group of nodes?

- What if we want different images or parameters for the different nodes?

*Operators can be very powerful.
<br/>
But we need to know exactly the scenarios that they can handle.*

???

:EN:- Kubernetes operators
:EN:- Deploying ElasticSearch with ECK

:FR:- Les opérateurs
:FR:- Déployer ElasticSearch avec ECK

.debug[[k8s/operators.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators.md)]
---

## Designing an operator

- Once we understand CRDs and operators, it's tempting to use them everywhere

- Yes, we can do (almost) everything with operators ...

- ... But *should we?*

- Very often, the answer is **“no!”**

- Operators are powerful, but significantly more complex than other solutions

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]
---

## When should we (not) use operators?

- Operators are great if our app needs to react to cluster events

  (nodes or pods going down, and requiring extensive reconfiguration)

- Operators *might* be helpful to encapsulate complexity

  (manipulate one single custom resource for an entire stack)

- Operators are probably overkill if a Helm chart would suffice

- That being said, if we really want to write an operator ...

  Read on!

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]
---

## What does it take to write an operator?
- Writing a quick-and-dirty operator, or a POC/MVP, is easy

- Writing a robust operator is hard

- We will describe the general idea

- We will identify some of the associated challenges

- We will list a few tools that can help us

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]
---

## Top-down vs. bottom-up

- Both approaches are possible

- Let's see what they entail, and their respective pros and cons

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]
---

## Top-down approach

- Start with high-level design (see next slide)

- Pros:

  - can yield cleaner design that will be more robust

- Cons:

  - must be able to anticipate all the events that might happen

  - design will be better only to the extent of what we anticipated

  - hard to anticipate if we don't have production experience

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]
---

## High-level design

- What are we solving?

  (e.g.: geographic databases backed by PostGIS with Redis caches)

- What are our use-cases, stories?

  (e.g.: adding/resizing caches and read replicas; load balancing queries)

- What kind of outage do we want to address?

  (e.g.: loss of individual node, pod, volume)

- What are our *non-features*, the things we don't want to address?

  (e.g.: loss of datacenter/zone; differentiating between read and write queries;
  <br/>
  cache invalidation; upgrading to newer major versions of Redis, PostGIS, PostgreSQL)

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]
---

## Low-level design

- What Custom Resource Definitions do we need?

  (one, many?)

- How will we store configuration information?

  (part of the CRD spec fields, annotations, other?)
- Do we need to store state? If so, where?

  - state that is small and doesn't change much can be stored via the Kubernetes API
    <br/>
    (e.g.: leader information, configuration, credentials)

  - things that are big and/or change a lot should go elsewhere
    <br/>
    (e.g.: metrics, bigger configuration files like GeoIP)

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]
---

class: extra-details

## What can we store via the Kubernetes API?

- The API server stores most Kubernetes resources in etcd

- Etcd is designed for reliability, not for performance

- If our storage needs exceed what etcd can offer, we need to use something else:

  - either directly

  - or by extending the API server
    <br/>(for instance by using the aggregation layer, like [metrics server](https://github.com/kubernetes-incubator/metrics-server) does)

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]
---

## Bottom-up approach

- Start with existing Kubernetes resources (Deployment, Stateful Set...)
- Run the system in production

- Add scripts and automation to facilitate day-to-day operations

- Turn the scripts into an operator

- Pros: simpler to get started; reflects actual use-cases

- Cons: can result in convoluted designs requiring extensive refactoring

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## General idea

- Our operator will watch its CRDs *and associated resources*

- Drawing state diagrams and finite state automata helps a lot

- It's OK if some transitions lead to a big catch-all "human intervention"

- Over time, we will learn about new failure modes and add to these diagrams

- It's OK to start with CRD creation / deletion and prevent any modification

  (that's the easy POC/MVP we were talking about)

- *Presentation* and *validation* will help our users

  (more on that later)

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Challenges

- Reacting to infrastructure disruption can seem hard at first

- Kubernetes gives us a lot of primitives to help:

  - Pods and Persistent Volumes will *eventually* recover

  - Stateful Sets give us easy ways to "add N copies" of a thing

- The real challenges come with configuration changes

  (i.e., what to do when our users update our CRDs)

- Keep in mind that [some] of the [largest] cloud [outages] haven't been caused by [natural catastrophes], or even code bugs, but by configuration changes

[some]: https://www.datacenterdynamics.com/news/gcp-outage-mainone-leaked-google-cloudflare-ip-addresses-china-telecom/
[largest]: https://aws.amazon.com/message/41926/
[outages]: https://aws.amazon.com/message/65648/
[natural catastrophes]: https://www.datacenterknowledge.com/amazon/aws-says-it-s-never-seen-whole-data-center-go-down
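---

class: extra-details

## Sketch: a CRD for our example

A minimal sketch of a CRD for the hypothetical geographic database from the high-level design, assuming the `apiextensions.k8s.io/v1` API. The `example.com` group, the `GeoDatabase` kind, and all field names are illustrative assumptions. User configuration lives in `spec`; small state maintained by the operator lives in `status`. The schema, printer columns, and `scale` sub-resource correspond to the validation, presentation, and scaling topics covered in the following slides.

.small[
```yaml
apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  name: geodatabases.example.com    # must be <plural>.<group>
spec:
  group: example.com                # hypothetical API group
  scope: Namespaced
  names:
    kind: GeoDatabase               # hypothetical kind
    plural: geodatabases
    singular: geodatabase
  versions:
  - name: v1alpha1
    served: true                    # exposed by the API server
    storage: true                   # the (single) version written to etcd
    schema:
      openAPIV3Schema:              # validated by the API server
        type: object
        properties:
          spec:                     # desired state, written by users
            type: object
            required: [readReplicas]
            properties:
              readReplicas:
                type: integer
                minimum: 0
              cacheSize:
                type: string        # e.g. "1Gi"
          status:                   # small state, written by the operator
            type: object
            properties:
              leader:
                type: string
              readyReplicas:
                type: integer
    subresources:
      status: {}                    # status gets its own endpoint
      scale:                        # enables `kubectl scale`
        specReplicasPath: .spec.readReplicas
        statusReplicasPath: .status.readyReplicas
    additionalPrinterColumns:       # extra columns for `kubectl get`
    - name: Replicas
      type: integer
      jsonPath: .spec.readReplicas
    - name: Leader
      type: string
      jsonPath: .status.leader
```
]

With such a CRD, `kubectl scale geodatabase <name> --replicas=3` would update `.spec.readReplicas`; the operator would then converge the actual read replicas toward that value.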
.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Configuration changes

- It is helpful to analyze and understand how Kubernetes controllers work:

  - watch resources for modifications

  - compare desired state (CRD) and current state

  - issue actions to converge state

- Configuration changes will probably require *another* state diagram or FSA

- Again, it's OK to have transitions labeled as "unsupported"

  (i.e. reject some modifications because we can't execute them)

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Tools

- CoreOS / RedHat Operator Framework

  [GitHub](https://github.com/operator-framework) | [Blog](https://developers.redhat.com/blog/2018/12/18/introduction-to-the-kubernetes-operator-framework/) | [Intro talk](https://www.youtube.com/watch?v=8k_ayO1VRXE) | [Deep dive talk](https://www.youtube.com/watch?v=fu7ecA2rXmc) | [Simple example](https://medium.com/faun/writing-your-first-kubernetes-operator-8f3df4453234)

- Zalando Kubernetes Operator Pythonic Framework (KOPF)

  [GitHub](https://github.com/zalando-incubator/kopf) | [Docs](https://kopf.readthedocs.io/) | [Step-by-step tutorial](https://kopf.readthedocs.io/en/stable/walkthrough/problem/)

- Mesosphere Kubernetes Universal Declarative Operator (KUDO)

  [GitHub](https://github.com/kudobuilder/kudo) | [Blog](https://mesosphere.com/blog/announcing-maestro-a-declarative-no-code-approach-to-kubernetes-day-2-operators/) | [Docs](https://kudo.dev/) | [Zookeeper example](https://github.com/kudobuilder/frameworks/tree/master/repo/stable/zookeeper)

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Validation

- By default, a CRD is "free form"

  (we can put pretty much anything we want in it)

- When creating a CRD, we can provide an
  OpenAPI v3 schema
  ([Example](https://github.com/amaizfinance/redis-operator/blob/master/deploy/crds/k8s_v1alpha1_redis_crd.yaml#L34))

- The API server will then validate resources created/edited with this schema

- If we need stronger validation, we can use a Validating Admission Webhook:

  - run an [admission webhook server](https://kubernetes.io/docs/reference/access-authn-authz/extensible-admission-controllers/#write-an-admission-webhook-server) to receive validation requests

  - register the webhook by creating a [ValidatingWebhookConfiguration](https://kubernetes.io/docs/reference/access-authn-authz/extensible-admission-controllers/#configure-admission-webhooks-on-the-fly)

  - each time the API server receives a request matching the configuration,
    <br/>the request is sent to our server for validation

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Presentation

- By default, `kubectl get mycustomresource` won't display much information

  (just the name and age of each resource)

- When creating a CRD, we can specify additional columns to print

  ([Example](https://github.com/amaizfinance/redis-operator/blob/master/deploy/crds/k8s_v1alpha1_redis_crd.yaml#L6),
  [Docs](https://kubernetes.io/docs/tasks/access-kubernetes-api/custom-resources/custom-resource-definitions/#additional-printer-columns))

- By default, `kubectl describe mycustomresource` will also be generic

- `kubectl describe` can show events related to our custom resources

  (for that, we need to create Event resources, and fill the `involvedObject` field)

- For scalable resources, we can define a `scale` sub-resource

- This will enable the use of `kubectl scale` and other scaling-related operations

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## About scaling

- It is possible to use the HPA (Horizontal Pod Autoscaler) with
  CRDs

- But it is not always desirable

- The HPA works very well for homogeneous, stateless workloads

- For other workloads, your mileage may vary

- Some systems can scale across multiple dimensions

  (for instance: increase number of replicas, or number of shards?)

- If autoscaling is desired, the operator will have to make complex decisions

  (example: Zalando's Elasticsearch Operator ([Video](https://www.youtube.com/watch?v=lprE0J0kAq0)))

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Versioning

- As our operator evolves over time, we may have to change the CRD

  (add, remove, change fields)

- Like every other resource in Kubernetes, [custom resources are versioned](https://kubernetes.io/docs/tasks/access-kubernetes-api/custom-resources/custom-resource-definition-versioning/)

- When creating a CRD, we need to specify a *list* of versions

- Each version has a `served` flag and a `storage` flag

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Stored version

- Exactly one version has to be marked as the storage version (`storage: true`)

- As the name implies, it is the one that will be stored in etcd

- Resources in storage are never converted automatically

  (we need to read and re-write them ourselves)

- Yes, this means that we can have different versions in etcd at any time

- Our code needs to handle all the versions that still exist in storage

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Served versions

- By default, the Kubernetes API will serve resources "as-is"

  (using their stored version)

- It will assume that all versions are compatible storage-wise

  (i.e.
  that the spec and fields are compatible between versions)

- We can provide [conversion webhooks](https://kubernetes.io/docs/tasks/access-kubernetes-api/custom-resources/custom-resource-definition-versioning/#webhook-conversion) to "translate" requests

  (the alternative is to upgrade all stored resources and stop serving old versions)

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Operator reliability

- Remember that the operator itself must be resilient

  (e.g.: the node running it can fail)

- Our operator must be able to restart and recover gracefully

- Do not store state locally

  (unless we can reconstruct that state when we restart)

- As indicated earlier, we can use the Kubernetes API to store data:

  - in the custom resources themselves

  - in other resources' annotations

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

## Beyond CRDs

- CRDs cannot use custom storage (e.g. for time series data)

- CRDs cannot support arbitrary subresources (like logs or exec for Pods)

- CRDs cannot support protobuf (for faster, more efficient communication)

- If we need these things, we can use the [aggregation layer](https://kubernetes.io/docs/concepts/extend-kubernetes/api-extension/apiserver-aggregation/) instead

- The aggregation layer proxies all requests below a specific path to another server

  (this is used e.g. by the metrics server)

- [This documentation page](https://kubernetes.io/docs/concepts/extend-kubernetes/api-extension/custom-resources/#choosing-a-method-for-adding-custom-resources) compares the features of CRDs and API aggregation

???
:EN:- Guidelines to design our own operators
:FR:- Comment concevoir nos propres opérateurs

.debug[[k8s/operators-design.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/operators-design.md)]

---

class: pic

.interstitial[]

---

name: toc-volumes
class: title

Volumes

.nav[
[Previous section](#toc-operators)
|
[Back to table of contents](#toc-module-4)
|
[Next section](#toc-managing-configuration)
]

.debug[(automatically generated title slide)]

---

# Volumes

- Volumes are special directories that are mounted in containers

- Volumes can have many different purposes:

  - share files and directories between containers running on the same machine

  - share files and directories between containers and their host

  - centralize configuration information in Kubernetes and expose it to containers

  - manage credentials and secrets and expose them securely to containers

  - store persistent data for stateful services

  - access storage systems (like Ceph, EBS, NFS, Portworx, and many others)

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

class: extra-details

## Kubernetes volumes vs. Docker volumes

- Kubernetes and Docker volumes are very similar

  (the [Kubernetes documentation](https://kubernetes.io/docs/concepts/storage/volumes/) says otherwise ...
  <br/>
  but it refers to Docker 1.7, which was released in 2015!)

- Docker volumes allow us to share data between containers running on the same host

- Kubernetes volumes allow us to share data between containers in the same pod

- Both Docker and Kubernetes volumes enable access to storage systems

- Kubernetes volumes are also used to expose configuration and secrets

- Docker has specific concepts for configuration and secrets
  <br/>
  (but under the hood, the technical implementation is similar)

- If you're not familiar with Docker volumes, you can safely ignore this slide!
.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Volumes ≠ Persistent Volumes

- Volumes and Persistent Volumes are related, but very different!

- *Volumes*:

  - appear in Pod specifications (we'll see that in a few slides)

  - do not exist as API resources (**cannot** do `kubectl get volumes`)

- *Persistent Volumes*:

  - are API resources (**can** do `kubectl get persistentvolumes`)

  - correspond to concrete volumes (e.g. on a SAN, EBS, etc.)

  - cannot be associated with a Pod directly, only through a Persistent Volume Claim

  - won't be discussed further in this section

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Adding a volume to a Pod

- We will start with the simplest Pod manifest we can find

- We will add a volume to that Pod manifest

- We will mount that volume in a container in the Pod

- By default, this volume will be an `emptyDir`

  (an empty directory)

- It will "shadow" the directory where it's mounted

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Our basic Pod

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx-without-volume
spec:
  containers:
  - name: nginx
    image: nginx
```

This is an MVP! (Minimum Viable Pod😉)

It runs a single NGINX container.

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Trying the basic pod

.exercise[

- Create the Pod:
  ```bash
  kubectl create -f ~/container.training/k8s/nginx-1-without-volume.yaml
  ```

<!--
```bash
kubectl wait pod/nginx-without-volume --for condition=ready
```
-->

- Get its IP address:
  ```bash
  IPADDR=$(kubectl get pod nginx-without-volume -o jsonpath={.status.podIP})
  ```

- Send a request with curl:
  ```bash
  curl $IPADDR
  ```

]

(We should see the "Welcome to NGINX" page.)
.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Adding a volume

- We need to add the volume in two places:

  - at the Pod level (to declare the volume)

  - at the container level (to mount the volume)

- We will declare a volume named `www`

- No type is specified, so it will default to `emptyDir`

  (as the name implies, it will be initialized as an empty directory at pod creation)

- In that pod, there is also a container named `nginx`

- That container mounts the volume `www` to path `/usr/share/nginx/html/`

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## The Pod with a volume

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx-with-volume
spec:
  volumes:
  - name: www
  containers:
  - name: nginx
    image: nginx
    volumeMounts:
    - name: www
      mountPath: /usr/share/nginx/html/
```

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Trying the Pod with a volume

.exercise[

- Create the Pod:
  ```bash
  kubectl create -f ~/container.training/k8s/nginx-2-with-volume.yaml
  ```

<!--
```bash
kubectl wait pod/nginx-with-volume --for condition=ready
```
-->

- Get its IP address:
  ```bash
  IPADDR=$(kubectl get pod nginx-with-volume -o jsonpath={.status.podIP})
  ```

- Send a request with curl:
  ```bash
  curl $IPADDR
  ```

]

(We should now see a "403 Forbidden" error page.)
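---

class: extra-details

## Declaring `emptyDir` explicitly

Omitting the volume type is a shortcut: the API server fills in `emptyDir: {}`. Writing it out lets us pass options. The sketch below is the same Pod with two optional `emptyDir` fields: `medium: Memory` (a RAM-backed tmpfs instead of node disk) and `sizeLimit`. The Pod name and the `64Mi` value are arbitrary choices for illustration.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx-with-tmpfs-volume   # hypothetical name
spec:
  volumes:
  - name: www
    emptyDir:
      medium: Memory              # tmpfs; files written count against the writer's memory limit
      sizeLimit: 64Mi             # the kubelet may evict the Pod beyond this size
  containers:
  - name: nginx
    image: nginx
    volumeMounts:
    - name: www
      mountPath: /usr/share/nginx/html/
```

Like the default `emptyDir`, this volume starts empty and disappears with the Pod; only its backing storage changes.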
.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Populating the volume with another container

- Let's add another container to the Pod

- Let's mount the volume in *both* containers

- That container will populate the volume with static files

- NGINX will then serve these static files

- To populate the volume, we will clone the Spoon-Knife repository

  - this repository is https://github.com/octocat/Spoon-Knife

  - it's very popular (more than 100K forks!)

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Sharing a volume between two containers

.small[
```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx-with-git
spec:
  volumes:
  - name: www
  containers:
  - name: nginx
    image: nginx
    volumeMounts:
    - name: www
      mountPath: /usr/share/nginx/html/
  - name: git
    image: alpine
    command: [ "sh", "-c", "apk add git && git clone https://github.com/octocat/Spoon-Knife /www" ]
    volumeMounts:
    - name: www
      mountPath: /www/
  restartPolicy: OnFailure
```
]

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Sharing a volume, explained

- We added another container to the pod

- That container mounts the `www` volume on a different path (`/www`)

- It uses the `alpine` image

- When started, it installs `git` and clones the `octocat/Spoon-Knife` repository

  (that repository contains a tiny HTML website)

- As a result, NGINX now serves this website

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Trying the shared volume

- This one will be time-sensitive!
- We need to catch the Pod IP address *as soon as it's created*

- Then send a request to it *as fast as possible*

.exercise[

- Watch the pods (so that we can catch the Pod IP address)
  ```bash
  kubectl get pods -o wide --watch
  ```

<!--
```wait NAME```
```tmux split-pane -v```
-->

]

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Shared volume in action

.exercise[

- Create the pod:
  ```bash
  kubectl create -f ~/container.training/k8s/nginx-3-with-git.yaml
  ```

<!--
```bash kubectl wait pod/nginx-with-git --for condition=initialized```
```bash IP=$(kubectl get pod nginx-with-git -o jsonpath={.status.podIP})```
-->

- As soon as we see its IP address, access it:
  ```bash
  curl `$IP`
  ```

<!--
```bash /bin/sleep 5```
-->

- A few seconds later, the state of the pod will change; access it again:
  ```bash
  curl `$IP`
  ```

]

The first time, we should see "403 Forbidden".

The second time, we should see the HTML file from the Spoon-Knife repository.

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Explanations

- Both containers are started at the same time

- NGINX starts very quickly

  (it can serve requests immediately)

- But at this point, the volume is empty

  (NGINX serves "403 Forbidden")

- The other container installs git and clones the repository

  (this takes a bit longer)

- When the other container is done, the volume holds the repository

  (NGINX serves the HTML file)

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## The devil is in the details

- The default `restartPolicy` is `Always`

- This would cause our `git` container to run again ... and again ...
  and again (with an exponential back-off delay, as explained [in the documentation](https://kubernetes.io/docs/concepts/workloads/pods/pod-lifecycle/#restart-policy))

- That's why we specified `restartPolicy: OnFailure`

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Inconsistencies

- There is a short period of time during which the website is not available

  (because the `git` container hasn't done its job yet)

- With a bigger website, we could get inconsistent results

  (where only a part of the content is ready)

- In real applications, this could cause incorrect results

- How can we avoid that?

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Init Containers

- We can define containers that should execute *before* the main ones

- They will be executed in order

  (instead of in parallel)

- They must all succeed before the main containers are started

- This is *exactly* what we need here!

- Let's see one in action

.footnote[See [Init Containers](https://kubernetes.io/docs/concepts/workloads/pods/init-containers/) documentation for all the details.]
.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Defining Init Containers

.small[
```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx-with-init
spec:
  volumes:
  - name: www
  containers:
  - name: nginx
    image: nginx
    volumeMounts:
    - name: www
      mountPath: /usr/share/nginx/html/
  initContainers:
  - name: git
    image: alpine
    command: [ "sh", "-c", "apk add git && git clone https://github.com/octocat/Spoon-Knife /www" ]
    volumeMounts:
    - name: www
      mountPath: /www/
```
]

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Trying the init container

.exercise[

- Create the pod:
  ```bash
  kubectl create -f ~/container.training/k8s/nginx-4-with-init.yaml
  ```

- Try to send HTTP requests as soon as the pod comes up

<!--
```key ^D```
```key ^C```
-->

]

- This time, instead of "403 Forbidden" we get a "connection refused"

- NGINX doesn't start until the git container has done its job

- We never get inconsistent results

  (a "half-ready" container)

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Other uses of init containers

- Load content

- Generate configuration (or certificates)

- Database migrations

- Waiting for other services to be up

  (to avoid a flurry of connection errors in the main container)

- etc.

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

## Volume lifecycle

- The lifecycle of a volume is linked to the pod's lifecycle

- This means that a volume is created when the pod is created

- This is mostly relevant for `emptyDir` volumes

  (other volumes, like remote storage, are not "created" but rather "attached")

- A volume survives across container restarts

- A volume is destroyed (or, for remote storage, detached) when the pod is destroyed

???
:EN:- Sharing data between containers with volumes
:EN:- When and how to use Init Containers

:FR:- Partager des données grâce aux volumes
:FR:- Quand et comment utiliser un *Init Container*

.debug[[k8s/volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/volumes.md)]

---

class: pic

.interstitial[]

---

name: toc-managing-configuration
class: title

Managing configuration

.nav[
[Previous section](#toc-volumes)
|
[Back to table of contents](#toc-module-4)
|
[Next section](#toc-stateful-sets)
]

.debug[(automatically generated title slide)]

---

# Managing configuration

- Some applications need to be configured (obviously!)

- There are many ways for our code to pick up configuration:

  - command-line arguments

  - environment variables

  - configuration files

  - configuration servers (getting configuration from a database, an API...)

  - ... and more (because programmers can be very creative!)

- How can we do these things with containers and Kubernetes?

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Passing configuration to containers

- There are many ways to pass configuration to code running in a container:

  - baking it into a custom image

  - command-line arguments

  - environment variables

  - injecting configuration files

  - exposing it over the Kubernetes API

  - configuration servers

- Let's review these different strategies!

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Baking custom images

- Put the configuration in the image

  (it can be in a configuration file, but also `ENV` or `CMD` instructions)

- It's easy! It's simple!

- Unfortunately, it also has downsides:

  - multiplication of images

  - different images for dev, staging, prod ...
  - minor reconfigurations require a whole build/push/pull cycle

- Avoid doing it unless you don't have the time to figure out other options

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Command-line arguments

- Pass options through the `args` array in the container specification

- Example ([source](https://github.com/coreos/pods/blob/master/kubernetes.yaml#L29)):
  ```yaml
  args:
    - "--data-dir=/var/lib/etcd"
    - "--advertise-client-urls=http://127.0.0.1:2379"
    - "--listen-client-urls=http://127.0.0.1:2379"
    - "--listen-peer-urls=http://127.0.0.1:2380"
    - "--name=etcd"
  ```

- The options can be passed directly to the program that we run ...

  ... or to a wrapper script that will use them to e.g. generate a config file

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Command-line arguments, pros & cons

- Works great when options are passed directly to the running program

  (otherwise, a wrapper script can work around the issue)

- Works great when there aren't too many parameters

  (to avoid a 20-line `args` array)

- Requires documentation and/or understanding of the underlying program

  ("which parameters and flags do I need, again?")

- Well-suited for mandatory parameters (without default values)

- Not ideal when we need to pass a real configuration file anyway

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Environment variables

- Pass options through the `env` array in the container specification

- Example:
  ```yaml
  env:
    - name: ADMIN_PORT
      value: "8080"
    - name: ADMIN_AUTH
      value: Basic
    - name: ADMIN_CRED
      value: "admin:0pensesame!"
  ```

.warning[`value` must be a string! Make sure that numbers and fancy strings are quoted.]

🤔 Why this weird `{name: xxx, value: yyy}` scheme? It will be revealed soon!
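---

class: extra-details

## Combining arguments and environment variables

Kubernetes expands `$(VAR_NAME)` references in a container's `command` and `args` using that container's environment variables (`$$` produces a literal `$`, and references to undefined variables are left unchanged). A sketch reusing the etcd flags from the command-line arguments example; the `DATA_DIR` and `CLIENT_URL` variable names are illustrative assumptions:

```yaml
# Fragment of a container specification
env:
- name: DATA_DIR
  value: /var/lib/etcd
- name: CLIENT_URL
  value: http://127.0.0.1:2379
args:
- "--data-dir=$(DATA_DIR)"                  # becomes --data-dir=/var/lib/etcd
- "--advertise-client-urls=$(CLIENT_URL)"   # expanded by Kubernetes, no shell involved
- "--listen-client-urls=$(CLIENT_URL)"
- "--listen-peer-urls=http://127.0.0.1:2380"
- "--name=etcd"
```

This keeps each value in one place, which pays off once the values come from configmaps or the downward API instead of literals.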
.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## The downward API

- In the previous example, environment variables have fixed values

- We can also use a mechanism called the *downward API*

- The downward API allows exposing pod or container information

  - either through special files (we won't show that for now)

  - or through environment variables

- The value of these environment variables is computed when the container is started

- Remember: environment variables won't (can't) change after container start

- Let's see a few concrete examples!

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Exposing the pod's namespace

```yaml
- name: MY_POD_NAMESPACE
  valueFrom:
    fieldRef:
      fieldPath: metadata.namespace
```

- Useful to generate the FQDN of services

  (in some contexts, a short name is not enough)

- For instance, these two commands should be equivalent:

  ```
  curl api-backend
  curl api-backend.$MY_POD_NAMESPACE.svc.cluster.local
  ```

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Exposing the pod's IP address

```yaml
- name: MY_POD_IP
  valueFrom:
    fieldRef:
      fieldPath: status.podIP
```

- Useful if we need to know our IP address

  (we could also read it from `eth0`, but this is more solid)

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Exposing the container's resource limits

```yaml
- name: MY_MEM_LIMIT
  valueFrom:
    resourceFieldRef:
      containerName: test-container
      resource: limits.memory
```

- Useful for runtimes where memory is garbage collected

- Example: the JVM

  (the memory available to the JVM should be set with the `-Xmx` flag)

- Best practice: set a memory limit, and pass it to the runtime

- Note: recent versions of the
  JVM can do this automatically

  (see [JDK-8146115](https://bugs.java.com/bugdatabase/view_bug.do?bug_id=JDK-8146115) and [this blog post](https://very-serio.us/2017/12/05/running-jvms-in-kubernetes/) for detailed examples)

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## More about the downward API

- [This documentation page](https://kubernetes.io/docs/tasks/inject-data-application/environment-variable-expose-pod-information/) has more details about these environment variables

- And [this one](https://kubernetes.io/docs/tasks/inject-data-application/downward-api-volume-expose-pod-information/) explains the other way to use the downward API

  (through files that get created in the container filesystem)

- That second link also includes a list of all the fields that can be used with the downward API

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Environment variables, pros and cons

- Works great when the running program expects these variables

- Works great for optional parameters with reasonable defaults

  (since the container image can provide these defaults)

- Sort of auto-documented

  (we can see which environment variables are defined in the image, and their values)

- Can be (ab)used with longer values ...

- ... You *can* put an entire Tomcat configuration file in an environment variable ...

- ... But *should* you?

(Do it if you really need to, we're not judging! But we'll see better ways.)
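---

class: extra-details

## Passing the memory limit to the JVM

A sketch of "set a memory limit, and pass it to the runtime". It assumes the `openjdk:11-jre` image (any image with `sh` and `java` would do) and a hypothetical application at `/app/app.jar`. With `divisor: 1Mi`, the downward API exposes the limit in mebibytes (`512` here). The heap is set to 3/4 of the limit to leave room for non-heap memory; that ratio is an arbitrary choice.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: jvm-app                     # hypothetical name
spec:
  containers:
  - name: app
    image: openjdk:11-jre
    command: ["sh", "-c"]
    # "$$" is Kubernetes' escape for a literal "$",
    # so the shell receives $(( MEM_LIMIT_MB * 3 / 4 )) and computes 384
    args: ["exec java -Xmx$$(( MEM_LIMIT_MB * 3 / 4 ))m -jar /app/app.jar"]
    resources:
      limits:
        memory: 512Mi
    env:
    - name: MEM_LIMIT_MB
      valueFrom:
        resourceFieldRef:
          resource: limits.memory   # containerName is optional for env vars
          divisor: 1Mi              # report the limit in MiB
```

On JVMs with container support, `-XX:MaxRAMPercentage` is an alternative that avoids the arithmetic.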
.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Injecting configuration files

- Sometimes, there is no way around it: we need to inject a full config file

- Kubernetes provides a mechanism for that purpose: `configmaps`

- A configmap is a Kubernetes resource that exists in a namespace

- Conceptually, it's a key/value map

  (values are arbitrary strings)

- We can think about them in (at least) two different ways:

  - as holding entire configuration file(s)

  - as holding individual configuration parameters

*Note: to hold sensitive information, we can use "Secrets", which
are another type of resource behaving very much like configmaps.
We'll cover them just after!*

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Configmaps storing entire files

- In this case, each key/value pair corresponds to a configuration file

- Key = name of the file

- Value = content of the file

- There can be one key/value pair, or as many as necessary

  (for complex apps with multiple configuration files)

- Examples:
  ```
  # Create a configmap with a single key, "app.conf"
  kubectl create configmap my-app-config --from-file=app.conf
  # Create a configmap with a single key, "app.conf" but another file
  kubectl create configmap my-app-config --from-file=app.conf=app-prod.conf
  # Create a configmap with multiple keys (one per file in the config.d directory)
  kubectl create configmap my-app-config --from-file=config.d/
  ```

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Configmaps storing individual parameters

- In this case, each key/value pair corresponds to a parameter

- Key = name of the parameter

- Value = value of the parameter

- Examples:
  ```
  # Create a configmap with two keys
  kubectl create cm my-app-config \
          --from-literal=foreground=red \
          --from-literal=background=blue

  # Create a configmap from a file containing key=val pairs
  kubectl create cm my-app-config \
          --from-env-file=app.conf
  ```

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Exposing configmaps to containers

- Configmaps can be exposed as plain files in the filesystem of a container

  - this is achieved by declaring a volume and mounting it in the container

  - this is particularly effective for configmaps containing whole files

- Configmaps can be exposed as environment variables in the container

  - this is achieved with `valueFrom` and `configMapKeyRef` in the `env` section
    <br/>(the same `valueFrom` syntax that the downward API uses)

  - this is particularly effective for configmaps containing individual parameters

- Let's see how to do both!

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Passing a configuration file with a configmap

- We will start a load balancer powered by HAProxy

- We will use the [official `haproxy` image](https://hub.docker.com/_/haproxy/)

- It expects to find its configuration in `/usr/local/etc/haproxy/haproxy.cfg`

- We will provide a simple HAProxy configuration, `k8s/haproxy.cfg`

- It listens on port 80, and load balances connections between IBM and Google

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Creating the configmap

.exercise[

- Go to the `k8s` directory in the repository:
  ```bash
  cd ~/container.training/k8s
  ```

- Create a configmap named `haproxy` holding the configuration file:
  ```bash
  kubectl create configmap haproxy --from-file=haproxy.cfg
  ```

- Check what our configmap looks like:
  ```bash
  kubectl get configmap haproxy -o yaml
  ```

]

.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)]

---

## Using the configmap

We
are going to use the following pod definition: ```yaml apiVersion: v1 kind: Pod metadata: name: haproxy spec: volumes: - name: config configMap: name: haproxy containers: - name: haproxy image: haproxy volumeMounts: - name: config mountPath: /usr/local/etc/haproxy/ ``` .debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- ## Using the configmap - The resource definition from the previous slide is in `k8s/haproxy.yaml` .exercise[ - Create the HAProxy pod: ```bash kubectl apply -f ~/container.training/k8s/haproxy.yaml ``` <!-- ```hide kubectl wait pod haproxy --for condition=ready``` --> - Check the IP address allocated to the pod: ```bash kubectl get pod haproxy -o wide IP=$(kubectl get pod haproxy -o json | jq -r .status.podIP) ``` ] .debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- ## Testing our load balancer - The load balancer will send: - half of the connections to Google - the other half to IBM .exercise[ - Access the load balancer a few times: ```bash curl $IP curl $IP curl $IP ``` ] We should see connections served by Google, and others served by IBM. <br/> (Each server sends us a redirect page. Look at the URL that they send us to!) 
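
The `haproxy` configmap was created imperatively, from a file. As a sketch, an equivalent declarative manifest could look like the one below. The HAProxy configuration embedded in it is a hypothetical minimal example (round-robin TCP balancing between `google.com` and `ibm.com`), not necessarily the exact content of `k8s/haproxy.cfg`:

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: haproxy
data:
  # The key becomes the file name in the mounted volume
  # (i.e. /usr/local/etc/haproxy/haproxy.cfg with the pod definition shown earlier)
  haproxy.cfg: |
    # Hypothetical minimal config: round-robin TCP load balancing on port 80
    defaults
      mode tcp
      timeout connect 5s
      timeout client 50s
      timeout server 50s
    frontend the-frontend
      bind *:80
      default_backend the-backend
    backend the-backend
      balance roundrobin
      server google google.com:80
      server ibm ibm.com:80
```

Applying such a manifest with `kubectl apply -f` would create (or update) a configmap named `haproxy`, with a single key, `haproxy.cfg`.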
.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- ## Exposing configmaps with the downward API - We are going to run a Docker registry on a custom port - By default, the registry listens on port 5000 - This can be changed by setting the environment variable `REGISTRY_HTTP_ADDR` - We are going to store the listen address (including the port number) in a configmap - Then we will expose that configmap as a container environment variable .debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- ## Creating the configmap .exercise[ - Our configmap will have a single key, `http.addr`: ```bash kubectl create configmap registry --from-literal=http.addr=0.0.0.0:80 ``` - Check our configmap: ```bash kubectl get configmap registry -o yaml ``` ] .debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- ## Using the configmap We are going to use the following pod definition: ```yaml apiVersion: v1 kind: Pod metadata: name: registry spec: containers: - name: registry image: registry env: - name: REGISTRY_HTTP_ADDR valueFrom: configMapKeyRef: name: registry key: http.addr ``` .debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- ## Using the configmap - The resource definition from the previous slide is in `k8s/registry.yaml` .exercise[ - Create the registry pod: ```bash kubectl apply -f ~/container.training/k8s/registry.yaml ``` <!-- ```hide kubectl wait pod registry --for condition=ready``` --> - Check the IP address allocated to the pod: ```bash kubectl get pod registry -o wide IP=$(kubectl get pod registry -o json | jq -r .status.podIP) ``` - Confirm that the registry is available on port 80: ```bash curl $IP/v2/_catalog ``` ]
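
When the keys of a configmap are already the environment variable names that the application expects, `envFrom` can expose all of them at once, instead of listing them one by one with `configMapKeyRef`. Here is a sketch, assuming a hypothetical configmap named `registry-env` whose single key is `REGISTRY_HTTP_ADDR` (with value `0.0.0.0:80`):

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: registry-envfrom        # hypothetical name
spec:
  containers:
  - name: registry
    image: registry
    # Every key of the configmap becomes an environment variable
    # (with the assumed configmap: REGISTRY_HTTP_ADDR=0.0.0.0:80)
    envFrom:
    - configMapRef:
        name: registry-env      # hypothetical configmap
```

The trade-off: `envFrom` is shorter, but the variable names are dictated by the configmap keys, whereas `configMapKeyRef` lets the pod choose both the key and the variable name.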
.debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- ## Passwords, tokens, sensitive information - For sensitive information, there is another special resource: *Secrets* - Secrets and Configmaps work almost the same way (we'll cover the differences on the next slide) - The *intent* is different, though: *"You should use secrets for things which are actually secret like API keys, credentials, etc., and use config map for not-secret configuration data."* *"In the future there will likely be some differentiators for secrets like rotation or support for backing the secret API w/ HSMs, etc."* (Source: [the author of both features](https://stackoverflow.com/a/36925553/580281)) .debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- class: extra-details ## Differences between configmaps and secrets - Secrets are base64-encoded when shown with `kubectl get secrets -o yaml` - keep in mind that this is just *encoding*, not *encryption* - it is very easy to [automatically extract and decode secrets](https://medium.com/@mveritym/decoding-kubernetes-secrets-60deed7a96a3) - [Secrets can be encrypted at rest](https://kubernetes.io/docs/tasks/administer-cluster/encrypt-data/) - With RBAC, we can authorize a user to access configmaps, but not secrets (since they are two different kinds of resources) .debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- class: extra-details ## Immutable ConfigMaps and Secrets - Since Kubernetes 1.19, it is possible to mark a ConfigMap or Secret as *immutable* ```bash kubectl patch configmap xyz --patch='{"immutable": true}' ``` - This brings performance improvements when using lots of ConfigMaps and Secrets (lots = tens of thousands) - Once a ConfigMap or Secret has been marked as immutable: - its 
content cannot be changed anymore - the `immutable` field can't be changed back either - the only way to change it is to delete and re-create it - Pods using it will have to be re-created as well ??? :EN:- Managing application configuration :EN:- Exposing configuration with the downward API :EN:- Exposing configuration with Config Maps and Secrets :FR:- Gérer la configuration des applications :FR:- Configuration au travers de la *downward API* :FR:- Configuration via les *Config Maps* et *Secrets* .debug[[k8s/configuration.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/configuration.md)] --- class: pic .interstitial[] --- name: toc-stateful-sets class: title Stateful sets .nav[ [Previous section](#toc-managing-configuration) | [Back to table of contents](#toc-module-4) | [Next section](#toc-running-a-consul-cluster) ] .debug[(automatically generated title slide)] --- # Stateful sets - Stateful sets are a type of resource in the Kubernetes API (like pods, deployments, services...) - They offer mechanisms to deploy scaled stateful applications - At first glance, they look like *deployments*: - a stateful set defines a pod spec and a number of replicas *R* - it will make sure that *R* copies of the pod are running - that number can be changed while the stateful set is running - updating the pod spec will cause a rolling update to happen - But they also have some significant differences .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Stateful sets unique features - Pods in a stateful set are numbered (from 0 to *R-1*) and ordered - They are started in order (from 0 to *R-1*), and updated in reverse order (from *R-1* to 0) - A pod is started (or updated) only when the previous one is ready - They are stopped in reverse order (from *R-1* to 0) - Each pod knows its identity (i.e.
which number it is in the set) - Each pod can discover the IP address of the others easily - The pods can persist data on attached volumes 🤔 Wait a minute ... Can't we already attach volumes to pods and deployments? .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Revisiting volumes - [Volumes](https://kubernetes.io/docs/concepts/storage/volumes/) are used for many purposes: - sharing data between containers in a pod - exposing configuration information and secrets to containers - accessing storage systems - Let's see examples of the latter usage .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Volume types - There are many [types of volumes](https://kubernetes.io/docs/concepts/storage/volumes/#types-of-volumes) available: - public cloud storage (GCEPersistentDisk, AWSElasticBlockStore, AzureDisk...) - private cloud storage (Cinder, VsphereVolume...) - traditional storage systems (NFS, iSCSI, FC...) - distributed storage (Ceph, Glusterfs, Portworx...) - Using a persistent volume requires: - creating the volume out-of-band (outside of the Kubernetes API) - referencing the volume in the pod description, with all its parameters .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Using a cloud volume Here is a pod definition using an AWS EBS volume (that has to be created first): ```yaml apiVersion: v1 kind: Pod metadata: name: pod-using-my-ebs-volume spec: containers: - image: ... 
name: container-using-my-ebs-volume volumeMounts: - mountPath: /my-ebs name: my-ebs-volume volumes: - name: my-ebs-volume awsElasticBlockStore: volumeID: vol-049df61146c4d7901 fsType: ext4 ``` .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Using an NFS volume Here is another example using a volume on an NFS server: ```yaml apiVersion: v1 kind: Pod metadata: name: pod-using-my-nfs-volume spec: containers: - image: ... name: container-using-my-nfs-volume volumeMounts: - mountPath: /my-nfs name: my-nfs-volume volumes: - name: my-nfs-volume nfs: server: 192.168.0.55 path: "/exports/assets" ``` .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Shortcomings of volumes - Their lifecycle (creation, deletion...) is managed outside of the Kubernetes API (we can't just use `kubectl apply/create/delete/...` to manage them) - If a Deployment uses a volume, all replicas end up using the same volume - That volume must then support concurrent access - some volumes do (e.g. NFS servers support multiple read/write access) - some volumes support concurrent reads - some volumes support concurrent access for colocated pods - What we really need is a way for each replica to have its own volume .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Individual volumes - The Pods of a Stateful set can have individual volumes (i.e. in a Stateful set with 3 replicas, there will be 3 volumes) - These volumes can be either: - allocated from a pool of pre-existing volumes (disks, partitions ...) - created dynamically using a storage system - This introduces a bunch of new Kubernetes resource types: Persistent Volumes, Persistent Volume Claims, Storage Classes (and also `volumeClaimTemplates`, that appear within Stateful Set manifests!) 
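
To make this more concrete, here is a minimal sketch of a Stateful Set with a `volumeClaimTemplates` section. The names `db` and `data` are illustrative; the image is a hypothetical placeholder, and a headless Service named `db` is assumed to exist (`serviceName` names the Service governing the set):

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: db
spec:
  serviceName: db                 # headless Service, assumed to exist
  replicas: 3
  selector:
    matchLabels:
      app: db
  template:
    metadata:
      labels:
        app: db
    spec:
      containers:
      - name: db
        image: example/db:1.0     # hypothetical image
        volumeMounts:
        - name: data              # refers to the claim template below
          mountPath: /var/lib/data
  # One PVC per pod is created from this template:
  # data-db-0, data-db-1, data-db-2
  volumeClaimTemplates:
  - metadata:
      name: data
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 1Gi
```

Each pod mounts its *own* PVC, which is exactly what a Deployment cannot provide.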
.debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Stateful set recap - A Stateful set manages a number of identical pods (like a Deployment) - These pods are numbered, and started/upgraded/stopped in a specific order - These pods are aware of their number (e.g., #0 can decide to be the primary, and #1 can be secondary) - These pods can find the IP addresses of the other pods in the set (through a *headless service*) - These pods can each have their own persistent storage (Deployments cannot do that) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- class: pic .interstitial[] --- name: toc-running-a-consul-cluster class: title Running a Consul cluster .nav[ [Previous section](#toc-stateful-sets) | [Back to table of contents](#toc-module-4) | [Next section](#toc-persistent-volumes-claims) ] .debug[(automatically generated title slide)] --- # Running a Consul cluster - Here is a good use case for Stateful sets! - We are going to deploy a Consul cluster with 3 nodes - Consul is a highly-available key/value store (like etcd or ZooKeeper) - One easy way to bootstrap a cluster is to tell each node: - the addresses of the other nodes - how many nodes are expected (to know when quorum is reached) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Bootstrapping a Consul cluster *After reading the Consul documentation carefully (and/or asking around), we figure out the minimal command-line to run our Consul cluster.* ``` consul agent -data-dir=/consul/data -client=0.0.0.0 -server -ui \ -bootstrap-expect=3 \ -retry-join=`X.X.X.X` \ -retry-join=`Y.Y.Y.Y` ``` - Replace X.X.X.X and Y.Y.Y.Y with the addresses of other nodes - A node can include its own address in the list (it will work fine) - ... 
Which means that we can use the same command-line on all nodes (convenient!) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Cloud Auto-join - Since version 1.4.0, Consul can use the Kubernetes API to find its peers - This is called [Cloud Auto-join] - Instead of passing an IP address, we need to pass a parameter like this: ``` consul agent -retry-join "provider=k8s label_selector=\"app=consul\"" ``` - Consul needs to be able to talk to the Kubernetes API - We can provide a `kubeconfig` file - If Consul runs in a pod, it will use the *service account* of the pod [Cloud Auto-join]: https://www.consul.io/docs/agent/cloud-auto-join.html#kubernetes-k8s- .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Setting up Cloud auto-join - We need to create a service account for Consul - We need to create a role that can `list` and `get` pods - We need to bind that role to the service account - And of course, we need to make sure that Consul pods use that service account .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Putting it all together - The file `k8s/consul-1.yaml` defines the required resources (service account, role, role binding, service, stateful set) - Inspired by this [excellent tutorial](https://github.com/kelseyhightower/consul-on-kubernetes) by Kelsey Hightower (many features from the original tutorial were removed for simplicity) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Running our Consul cluster - We'll use the provided YAML file .exercise[ - Create the stateful set and associated service: ```bash kubectl apply -f ~/container.training/k8s/consul-1.yaml ``` - Check the logs as the pods come up one after 
another: ```bash stern consul ``` <!-- ```wait Synced node info``` ```key ^C``` --> - Check the health of the cluster: ```bash kubectl exec consul-0 -- consul members ``` ] .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Caveats - The scheduler may place two Consul pods on the same node - if that node fails, we lose two Consul pods at the same time - this will cause the cluster to fail - Scaling down the cluster will cause it to fail - when a Consul member leaves the cluster, it needs to inform the others - otherwise, the last remaining node doesn't have quorum and stops functioning - This Consul cluster doesn't use real persistence yet - data is stored in the containers' ephemeral filesystem - if a pod fails, its replacement starts from a blank slate .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Improving pod placement - We need to tell the scheduler: *do not put two of these pods on the same node!* - This is done with an `affinity` section like the following one: ```yaml affinity: podAntiAffinity: requiredDuringSchedulingIgnoredDuringExecution: - labelSelector: matchExpressions: - key: app operator: In values: - consul topologyKey: kubernetes.io/hostname ``` .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Using a lifecycle hook - When a Consul member leaves the cluster, it needs to execute: ```bash consul leave ``` - This is done with a `lifecycle` section like the following one: ```yaml lifecycle: preStop: exec: command: - /bin/sh - -c - consul leave ``` .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Running a better Consul cluster - Let's try to add the scheduling constraint and lifecycle hook - We can do 
that in the same namespace or another one (as we like) - If we do that in the same namespace, we will see a rolling update (pods will be replaced one by one) .exercise[ - Deploy a better Consul cluster: ```bash kubectl apply -f ~/container.training/k8s/consul-2.yaml ``` ] .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Still no persistence, though - We aren't using actual persistence yet (no `volumeClaimTemplate`, Persistent Volume, etc.) - What happens if we lose a pod? - a new pod gets rescheduled (with an empty state) - the new pod tries to connect to the two others - it will be accepted (after 1-2 minutes of instability) - and it will retrieve the data from the other pods .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Failure modes - What happens if we lose two pods? - manual repair will be required - we will need to instruct the remaining one to act solo - then rejoin new pods - What happens if we lose three pods? (aka all of them) - we lose all the data (ouch) - If we run Consul without persistent storage, backups are a good idea! 
.debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- class: pic .interstitial[] --- name: toc-persistent-volumes-claims class: title Persistent Volumes Claims .nav[ [Previous section](#toc-running-a-consul-cluster) | [Back to table of contents](#toc-module-4) | [Next section](#toc-local-persistent-volumes) ] .debug[(automatically generated title slide)] --- # Persistent Volumes Claims - Our Pods can use a special volume type: a *Persistent Volume Claim* - A Persistent Volume Claim (PVC) is also a Kubernetes resource (visible with `kubectl get persistentvolumeclaims` or `kubectl get pvc`) - A PVC is not a volume; it is a *request for a volume* - It should indicate at least: - the size of the volume (e.g. "5 GiB") - the access mode (e.g. "read-write by a single pod") .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## What's in a PVC? - A PVC contains at least: - a list of *access modes* (ReadWriteOnce, ReadOnlyMany, ReadWriteMany) - a size (interpreted as the minimal storage space needed) - It can also contain optional elements: - a selector (to restrict which actual volumes it can use) - a *storage class* (used by dynamic provisioning, more on that later) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## What does a PVC look like? 
Here is a manifest for a basic PVC: ```yaml kind: PersistentVolumeClaim apiVersion: v1 metadata: name: my-claim spec: accessModes: - ReadWriteOnce resources: requests: storage: 1Gi ``` .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Using a Persistent Volume Claim Here is a Pod definition like the ones shown earlier, but using a PVC: ```yaml apiVersion: v1 kind: Pod metadata: name: pod-using-a-claim spec: containers: - image: ... name: container-using-a-claim volumeMounts: - mountPath: /my-vol name: my-volume volumes: - name: my-volume persistentVolumeClaim: claimName: my-claim ``` .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Creating and using Persistent Volume Claims - PVCs can be created manually and used explicitly (as shown on the previous slides) - They can also be created and used through Stateful Sets (this will be shown later) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Lifecycle of Persistent Volume Claims - When a PVC is created, it starts out unbound, in the `Pending` phase (without an associated volume) - A Pod referencing an unbound PVC will not start (the scheduler will wait until the PVC is bound to place it) - A special controller continuously monitors PVCs to associate them with PVs - If no PV is available, one must be created: - manually (by operator intervention) - using a *dynamic provisioner* (more on that later) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- class: extra-details ## Which PV gets associated with a PVC?
- The PV must satisfy the PVC constraints (access mode, size, optional selector, optional storage class) - The PVs with the closest access mode are picked - Then the PVs with the closest size - It is possible to specify a `claimRef` when creating a PV (this will associate it with the specified PVC, but only if the PV satisfies all the requirements of the PVC; otherwise another PV might end up being picked) - For all the details about the PersistentVolumeClaimBinder, check [this doc](https://github.com/kubernetes/community/blob/master/contributors/design-proposals/storage/persistent-storage.md#matching-and-binding) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Persistent Volume Claims and Stateful sets - A Stateful set can define one (or more) `volumeClaimTemplate` - Each `volumeClaimTemplate` will create one Persistent Volume Claim per pod - Each pod will therefore have its own individual volume - These volumes are numbered (like the pods) - Example: - a Stateful set is named `db` - it is scaled to 3 replicas - it has a `volumeClaimTemplate` named `data` - then it will create pods `db-0`, `db-1`, `db-2` - these pods will use PVCs named `data-db-0`, `data-db-1`, `data-db-2` .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Persistent Volume Claims are sticky - When updating the stateful set (e.g. image upgrade), each pod keeps its volume - When pods get rescheduled (e.g.
node failure), they keep their volume (this requires a storage system that is not node-local) - These volumes are not automatically deleted (when the stateful set is scaled down or deleted) - If a stateful set is scaled back up later, the pods get their data back .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Dynamic provisioners - A *dynamic provisioner* monitors unbound PVCs - It can create volumes (and the corresponding PV) on the fly - This requires the PVCs to have a *storage class* (field `storageClassName`, or the legacy annotation `volume.beta.kubernetes.io/storage-class`) - A dynamic provisioner only acts on PVCs with the right storage class (it ignores the other ones) - Just like `LoadBalancer` services, dynamic provisioners are optional (i.e. our cluster may or may not have one pre-installed) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## What's a Storage Class? - A Storage Class is yet another Kubernetes API resource (visible with e.g. `kubectl get storageclass` or `kubectl get sc`) - It indicates which *provisioner* to use (which controller will create the actual volume) - And arbitrary parameters for that provisioner (replication levels, type of disk ... anything relevant!) 
- Storage Classes are required if we want to use [dynamic provisioning](https://kubernetes.io/docs/concepts/storage/dynamic-provisioning/) (but we can also create volumes manually, and ignore Storage Classes) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## The default storage class - At most one storage class can be marked as the default class (by annotating it with `storageclass.kubernetes.io/is-default-class=true`) - When a PVC is created, it will be annotated with the default storage class (unless it specifies an explicit storage class) - This only happens at PVC creation (existing PVCs are not updated when we mark a class as the default one) .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Dynamic provisioning setup This is how we can achieve fully automated provisioning of persistent storage. 1. Configure a storage system. (It needs to have an API, or be capable of automated provisioning of volumes.) 2. Install a dynamic provisioner for this storage system. (This is some specific controller code.) 3. Create a Storage Class for this system. (It has to match what the dynamic provisioner is expecting.) 4. Annotate the Storage Class to be the default one. .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- ## Dynamic provisioning usage After setting up the system (previous slide), all we need to do is: *Create a Stateful Set that makes use of a `volumeClaimTemplate`.* This will trigger the following actions. 1. The Stateful Set creates PVCs according to the `volumeClaimTemplate`. 2. The Stateful Set creates Pods using these PVCs. 3. The PVCs are automatically annotated with our Storage Class. 4. The dynamic provisioner provisions volumes and creates the corresponding PVs. 5. 
The PersistentVolumeClaimBinder associates the PVs and the PVCs together. 6. The PVCs are now bound, so the Pods can start. ??? :EN:- Deploying apps with Stateful Sets :EN:- Example: deploying a Consul cluster :EN:- Understanding Persistent Volume Claims and Storage Classes :FR:- Déployer une application avec un *Stateful Set* :FR:- Exemple : lancer un cluster Consul :FR:- Comprendre les *Persistent Volume Claims* et *Storage Classes* .debug[[k8s/statefulsets.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/statefulsets.md)] --- class: pic .interstitial[] --- name: toc-local-persistent-volumes class: title Local Persistent Volumes .nav[ [Previous section](#toc-persistent-volumes-claims) | [Back to table of contents](#toc-module-4) | [Next section](#toc-highly-available-persistent-volumes) ] .debug[(automatically generated title slide)] --- # Local Persistent Volumes - We want to run that Consul cluster *and* actually persist data - But we don't have a distributed storage system - We are going to use local volumes instead (conceptually similar to `hostPath` volumes) - We can use local volumes without installing extra plugins - However, they are tied to a node - If that node goes down, the volume becomes unavailable .debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## With or without dynamic provisioning - We will deploy a Consul cluster *with* persistence - That cluster's StatefulSet will create PVCs - These PVCs will remain unbound¹ until we create local volumes manually (we will basically do the job of the dynamic provisioner) - Then, we will see how to automate that with a dynamic provisioner .footnote[¹Unbound = without an associated Persistent Volume.]
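
What does such a manually created local volume look like? Here is a sketch of one Persistent Volume for `node2`. The PV name, capacity, and reclaim policy are assumptions (the capacity must be at least what the PVCs request); the path matches the `/mnt/consul` directory used in this section. Local volumes must declare `nodeAffinity`: that's how the scheduler knows which node holds the data.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: consul-node2              # hypothetical name
spec:
  capacity:
    storage: 10Gi                 # assumed value; used for matching only
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  local:
    path: /mnt/consul             # the directory must already exist on the node
  # Required for local volumes: pins this PV (and any pod using it) to node2
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - node2
```

One such PV per node (node2, node3, node4) would give each Consul pod a volume on a distinct node.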
.debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## If we have a dynamic provisioner ... - The labs in this section assume that we *do not* have a dynamic provisioner - If we do have one, we need to disable it .exercise[ - Check if we have a dynamic provisioner: ```bash kubectl get storageclass ``` - If the output contains a line with `(default)`, run this command: ```bash kubectl annotate sc storageclass.kubernetes.io/is-default-class- --all ``` - Check again that it is no longer marked as `(default)` ] .debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## Deploying Consul - Let's use a new manifest for our Consul cluster - The only differences between that file and the previous one are: - `volumeClaimTemplate` defined in the Stateful Set spec - the corresponding `volumeMounts` in the Pod spec .exercise[ - Apply the persistent Consul YAML file: ```bash kubectl apply -f ~/container.training/k8s/consul-3.yaml ``` ] .debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## Observing the situation - Let's look at Persistent Volume Claims and Pods .exercise[ - Check that we now have an unbound Persistent Volume Claim: ```bash kubectl get pvc ``` - We don't have any Persistent Volume: ```bash kubectl get pv ``` - The Pod `consul-0` is not scheduled yet: ```bash kubectl get pods -o wide ``` ] *Hint: leave these commands running with `-w` in different windows.* .debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## Explanations - In a Stateful Set, the Pods are started one by one - `consul-1` won't be created until `consul-0` is running - `consul-0` 
has a dependency on an unbound Persistent Volume Claim - The scheduler won't schedule the Pod until the PVC is bound (because the PVC might be bound to a volume that is only available on a subset of nodes; for instance, EBS volumes are tied to an availability zone) .debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## Creating Persistent Volumes - Let's create 3 local directories (`/mnt/consul`) on node2, node3, node4 - Then create 3 Persistent Volumes corresponding to these directories .exercise[ - Create the local directories: ```bash for NODE in node2 node3 node4; do ssh $NODE sudo mkdir -p /mnt/consul; done ``` - Create the PV objects: ```bash kubectl apply -f ~/container.training/k8s/volumes-for-consul.yaml ``` ] .debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## Check our Consul cluster - The PVs that we created will be automatically matched with the PVCs - Once a PVC is bound, its pod can start normally - Once the pod `consul-0` has started, `consul-1` can be created, etc.
- Eventually, our Consul cluster is up, and backed by "persistent" volumes .exercise[ - Check that our Consul cluster indeed has 3 members: ```bash kubectl exec consul-0 -- consul members ``` ] .debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## Devil is in the details (1/2) - The size of the Persistent Volumes is bogus (it is used when matching PVs and PVCs together, but there is no actual quota or limit) .debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## Devil is in the details (2/2) - This specific example worked because we had exactly 1 free PV per node: - if we had created multiple PVs per node ... - we could have ended up with two PVCs bound to PVs on the same node ... - which would have required two pods to be on the same node ... - which is forbidden by the anti-affinity constraints in the StatefulSet - To avoid that, we need to associate the PVs with a Storage Class that has: ```yaml volumeBindingMode: WaitForFirstConsumer ``` (this means that a PVC will be bound to a PV only after being used by a Pod) - See [this blog post](https://kubernetes.io/blog/2018/04/13/local-persistent-volumes-beta/) for more details .debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)] --- ## Bulk provisioning - It's not practical to manually create directories and PVs for each app - We *could* pre-provision a number of PVs across our fleet - We could even automate that with a Daemon Set: - creating a number of directories on each node - creating the corresponding PV objects - We also need to recycle volumes - ...
  This can quickly get out of hand

.debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)]

---

## Dynamic provisioning

- We could also write our own provisioner, which would:

  - watch the PVCs across all namespaces

  - when a PVC is created, create a corresponding PV on a node

- Or we could use one of the dynamic provisioners for local persistent volumes

  (for instance, the [Rancher local path provisioner](https://github.com/rancher/local-path-provisioner))

.debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)]

---

## Strategies for local persistent volumes

- Remember, when a node goes down, the volumes on that node become unavailable

- High availability will require another layer of replication

  (like what we've just seen with Consul; or primary/secondary; etc.)

- Pre-provisioning PVs makes sense for machines with local storage

  (e.g. cloud instance storage; or storage directly attached to a physical machine)

- Dynamic provisioning makes sense for a large number of applications

  (when we can't or won't dedicate a whole disk to a volume)

- It's possible to mix both (using distinct Storage Classes)

???
:EN:- Static vs dynamic volume provisioning
:EN:- Example: local persistent volume provisioner
:FR:- Création statique ou dynamique de volumes
:FR:- Exemple : création de volumes locaux

.debug[[k8s/local-persistent-volumes.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/local-persistent-volumes.md)]

---

class: pic

.interstitial[]

---

name: toc-highly-available-persistent-volumes
class: title

Highly available Persistent Volumes

.nav[
[Previous section](#toc-local-persistent-volumes)
|
[Back to table of contents](#toc-module-4)
|
[Next section](#toc-)
]

.debug[(automatically generated title slide)]

---

# Highly available Persistent Volumes

- How can we achieve true durability?

- How can we store data that would survive the loss of a node?

--

- We need to use Persistent Volumes backed by highly available storage systems

- There are many ways to achieve that:

  - leveraging our cloud's storage APIs

  - using NAS/SAN systems or file servers

  - distributed storage systems

--

- We are going to see one distributed storage system in action

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Our test scenario

- We will set up a distributed storage system on our cluster

- We will use it to deploy a SQL database (PostgreSQL)

- We will insert some test data in the database

- We will disrupt the node running the database

- We will see how it recovers

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Portworx

- Portworx is a *commercial* persistent storage solution for containers

- It works with Kubernetes, but also Mesos, Swarm ...
- It provides [hyper-converged](https://en.wikipedia.org/wiki/Hyper-converged_infrastructure) storage

  (=storage is provided by regular compute nodes)

- We're going to use it here because it can be deployed on any Kubernetes cluster

  (it doesn't require any particular infrastructure)

- We don't endorse or support Portworx in any particular way

  (but we appreciate that it's super easy to install!)

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## A useful reminder

- We're installing Portworx because we need a storage system

- If you are using AKS, EKS, GKE ... you already have a storage system

  (but you might want another one, e.g. to leverage local storage)

- If you have set up Kubernetes yourself, there are other solutions available too

  - on premises, you can use a good old SAN/NAS

  - on a private cloud like OpenStack, you can use e.g. Cinder

  - everywhere, you can use other systems, e.g. Gluster, StorageOS

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Installing Portworx

- Portworx installation is relatively simple

- ... But we made it *even simpler!*

- We are going to use a YAML manifest that will take care of everything

- Warning: this manifest is customized for a very specific setup

  (like the VMs that we provide during workshops and training sessions)

- It will probably *not work* if you are using a different setup

  (like Docker Desktop, k3s, MicroK8S, Minikube ...)
.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## The simplified Portworx installer

- The Portworx installation will take a few minutes

- Let's start it, then we'll explain what happens behind the scenes

.exercise[

- Install Portworx:
  ```bash
  kubectl apply -f ~/container.training/k8s/portworx.yaml
  ```

]

<!-- ##VERSION ## -->

*Note: this was tested with Kubernetes 1.18. Newer versions may or may not work.*

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## What's in this YAML manifest?

- Portworx installation itself, pre-configured for our setup

- A default *Storage Class* using Portworx

- A *Daemon Set* to create loop devices on each node of the cluster

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## Portworx installation

- The official way to install Portworx is to use [PX-Central](https://central.portworx.com/)

  (this requires a free account)

- PX-Central will ask us a few questions about our cluster

  (Kubernetes version, on-prem/cloud deployment, etc.)

- Using our answers, it will generate a YAML manifest that we can use

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## Portworx storage configuration

- Portworx needs at least one *block device*

- Block device = disk or partition on a disk

- We can see block devices with `lsblk`

  (or `cat /proc/partitions` if we're old school like that!)
- If we don't have a spare disk or partition, we can use a *loop device*

- A loop device is a block device actually backed by a file

- These are frequently used to mount ISO (CD/DVD) images or VM disk images

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## Setting up a loop device

- Our `portworx.yaml` manifest includes a *Daemon Set* that will:

  - create a 10 GB (empty) file on each node

  - load the `loop` module (if it's not already loaded)

  - associate a loop device with the 10 GB file

- After these steps, we have a block device that Portworx can use

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## Implementation details

- The file is `/portworx.blk`

  (it is a [sparse file](https://en.wikipedia.org/wiki/Sparse_file) created with `truncate`)

- The loop device is `/dev/loop4`

- This can be verified by running `sudo losetup`

- The *Daemon Set* uses a privileged *Init Container*

- We can check the logs of that container with:
  ```bash
    kubectl logs --selector=app=setup-loop4-for-portworx \
        -c setup-loop4-for-portworx
  ```

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Waiting for Portworx to be ready

- The installation process will take a few minutes

.exercise[

- Check out the logs:
  ```bash
  stern -n kube-system portworx
  ```

- Wait until it gets quiet

  (you should see `portworx service is healthy`, too)

<!--
```longwait PX node status reports portworx service is healthy```
```key ^C```
-->

]

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Dynamic provisioning of persistent volumes

- We are going to run PostgreSQL in a Stateful set

- The Stateful set will specify a `volumeClaimTemplate`

- That
  `volumeClaimTemplate` will create Persistent Volume Claims

- Kubernetes' [dynamic provisioning](https://kubernetes.io/docs/concepts/storage/dynamic-provisioning/) will satisfy these Persistent Volume Claims

  (by creating Persistent Volumes and binding them to the claims)

- The Persistent Volumes are then available for the PostgreSQL pods

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Storage Classes

- It's possible that multiple storage systems are available

- Or, that a storage system offers multiple tiers of storage

  (SSD vs. magnetic; mirrored or not; etc.)

- We need to tell Kubernetes *which* system and tier to use

- This is achieved by creating a Storage Class

- A `volumeClaimTemplate` can indicate which Storage Class to use

- It is also possible to mark a Storage Class as "default"

  (it will be used if a `volumeClaimTemplate` doesn't specify one)

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Check our default Storage Class

- The YAML manifest applied earlier should define a default storage class

.exercise[

- Check that we have a default storage class:
  ```bash
  kubectl get storageclass
  ```

]

There should be a storage class showing as `portworx-replicated (default)`.
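If we want to script this check (e.g. in a setup script), one option is to filter the output of `kubectl get storageclass` for the `(default)` marker. This is a minimal sketch: the function name `default_storage_class` is made up for this example, and it assumes the default table output, where kubectl appends ` (default)` to the NAME column.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get storageclass --no-headers` output
# on stdin and print the name of every Storage Class flagged as default.
# kubectl displays the default class as "NAME (default)", so once awk splits
# the line on whitespace, the marker is the second field.
default_storage_class() {
  awk '$2 == "(default)" { print $1 }'
}
```

Usage: `kubectl get storageclass --no-headers | default_storage_class` should print `portworx-replicated`. No output means there is no default class; more than one line means several classes carry the default annotation.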
.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## Our default Storage Class

This is our Storage Class (in `k8s/storage-class.yaml`):

```yaml
kind: StorageClass
apiVersion: storage.k8s.io/v1beta1
metadata:
  name: portworx-replicated
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: kubernetes.io/portworx-volume
parameters:
  repl: "2"
  priority_io: "high"
```

- It says "use Portworx to create volumes and keep 2 replicas of these volumes"

- The annotation makes this Storage Class the default one

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Our Postgres Stateful set

- The next slide shows `k8s/postgres.yaml`

- It defines a Stateful set

- With a `volumeClaimTemplate` requesting a 1 GB volume

- That volume will be mounted to `/var/lib/postgresql/data`

- There is another little detail: we enable the `stork` scheduler

- The `stork` scheduler is optional (it's specific to Portworx)

- It helps the Kubernetes scheduler to colocate the pod with its volume

  (see [this blog post](https://portworx.com/stork-storage-orchestration-kubernetes/) for more details about that)

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

.small[
```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: postgres
spec:
  selector:
    matchLabels:
      app: postgres
  serviceName: postgres
  template:
    metadata:
      labels:
        app: postgres
    spec:
      schedulerName: stork
      containers:
      - name: postgres
        image: postgres:12
        env:
          - name: POSTGRES_HOST_AUTH_METHOD
            value: trust
        volumeMounts:
        - mountPath: /var/lib/postgresql/data
          name: postgres
  volumeClaimTemplates:
  - metadata:
      name: postgres
    spec:
      accessModes: ["ReadWriteOnce"]
      resources:
        requests:
          storage: 1Gi
```
]
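Each pod of a Stateful set gets its own claim, named `<volumeClaimTemplate name>-<StatefulSet name>-<pod ordinal>`. The sketch below (with a hypothetical `pvc_name` helper) spells out that naming rule, which tells us which PVC to look for once the Stateful set exists:

```bash
#!/usr/bin/env bash
# Hypothetical helper: build the name of the PVC that the StatefulSet
# controller creates for one pod, following the convention
# <volumeClaimTemplate name>-<StatefulSet name>-<pod ordinal>.
pvc_name() {
  local template="$1" statefulset="$2" ordinal="$3"
  printf '%s-%s-%s\n' "$template" "$statefulset" "$ordinal"
}

# For k8s/postgres.yaml: template "postgres", StatefulSet "postgres", pod 0
pvc_name postgres postgres 0
```

Once the Stateful set has been created, `kubectl get pvc postgres-postgres-0` should show that claim, and `kubectl get pv` should list the volume that dynamic provisioning created for it.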
.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Creating the Stateful set

- Before applying the YAML, watch what's going on with `kubectl get events -w`

.exercise[

- Apply that YAML:
  ```bash
  kubectl apply -f ~/container.training/k8s/postgres.yaml
  ```

<!-- ```hide kubectl wait pod postgres-0 --for condition=ready``` -->

]

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Testing our PostgreSQL pod

- We will use `kubectl exec` to get a shell in the pod

- Good to know: we need to use the `postgres` user in the pod

.exercise[

- Get a shell in the pod, as the `postgres` user:
  ```bash
  kubectl exec -ti postgres-0 -- su postgres
  ```

<!--
autopilot prompt detection expects $ or # at the beginning of the line.
```wait postgres@postgres```
```keys PS1="\u@\h:\w\n\$ "```
```key ^J```
-->

- Check that default databases have been created correctly:
  ```bash
  psql -l
  ```

]

(This should show us 3 lines: postgres, template0, and template1.)

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Inserting data in PostgreSQL

- We will create a database and populate it with `pgbench`

.exercise[

- Create a database named `demo`:
  ```bash
  createdb demo
  ```

- Populate it with `pgbench`:
  ```bash
  pgbench -i demo
  ```

]

- The `-i` flag means "initialize" (create the test tables and populate them)

- If you want more data in the test tables, add e.g.
  `-s 10` (to get 10x more rows)

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Checking how much data we have now

- The `pgbench` tool inserts rows into table `pgbench_accounts`

.exercise[

- Check that the `demo` database exists:
  ```bash
  psql -l
  ```

- Check how many rows we have in `pgbench_accounts`:
  ```bash
  psql demo -c "select count(*) from pgbench_accounts"
  ```

- Check that `pgbench_history` is currently empty:
  ```bash
  psql demo -c "select count(*) from pgbench_history"
  ```

]

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Testing the load generator

- Let's use `pgbench` to generate a few transactions

.exercise[

- Run `pgbench` for 10 seconds, reporting progress every second:
  ```bash
  pgbench -P 1 -T 10 demo
  ```

- Check the size of the history table now:
  ```bash
  psql demo -c "select count(*) from pgbench_history"
  ```

]

Note: on small cloud instances, a typical speed is about 100 transactions/second.

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Generating transactions

- Now let's use `pgbench` to generate more transactions

- While it's running, we will disrupt the database server

.exercise[

- Run `pgbench` for 10 minutes, reporting progress every second:
  ```bash
  pgbench -P 1 -T 600 demo
  ```

- You can use a longer time period if you need more time to run the next steps

<!-- ```tmux split-pane -h``` -->

]

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Find out which node is hosting the database

- We can find that information with `kubectl get pods -o wide`

.exercise[

- Check the node running the database:
  ```bash
  kubectl get pod postgres-0 -o wide
  ```

]

We are going to disrupt that node.

--

By "disrupt" we mean: "disconnect it from the network".
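Instead of reading the NODE column of `-o wide`, we can ask kubectl for just the `spec.nodeName` field with a JSONPath output. A minimal sketch; the `pod_node` helper is hypothetical, and reusing its output with `ssh` assumes (as on our workshop VMs) that Kubernetes node names are also SSH host names:

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the name of the node a pod is scheduled on
# (defaults to postgres-0). The JSONPath output prints only that field,
# so the result can be stored in a variable without any text parsing.
pod_node() {
  kubectl get pod "${1:-postgres-0}" -o jsonpath='{.spec.nodeName}'
}
```

Usage: `NODE=$(pod_node postgres-0)`, then `ssh "$NODE"` in the next step.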
.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Disconnect the node

- We will use `iptables` to block all traffic exiting the node

  (except SSH traffic, so we can repair the node later if needed)

.exercise[

- SSH to the node to disrupt:
  ```bash
  ssh `nodeX`
  ```

- Allow SSH traffic leaving the node, but block all other traffic:
  ```bash
  sudo iptables -I OUTPUT -p tcp --sport 22 -j ACCEPT
  sudo iptables -I OUTPUT 2 -j DROP
  ```

]

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Check that the node is disconnected

.exercise[

- Check that the node can't communicate with other nodes:
  ```
  ping node1
  ```

- Log out to go back to `node1`

<!-- ```key ^D``` -->

- Watch the events unfolding with `kubectl get events -w` and `kubectl get pods -w`

]

- It will take some time for Kubernetes to mark the node as unhealthy

- Then it will attempt to reschedule the pod to another node

- In about a minute, our pod should be up and running again

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Check that our data is still available

- We are going to reconnect to the (new) pod and check

.exercise[

- Get a shell on the pod:
  ```bash
  kubectl exec -ti postgres-0 -- su postgres
  ```

<!--
```wait postgres@postgres```
```keys PS1="\u@\h:\w\n\$ "```
```key ^J```
-->

- Check how many transactions are now in the `pgbench_history` table:
  ```bash
  psql demo -c "select count(*) from pgbench_history"
  ```

<!-- ```key ^D``` -->

]

If the 10-second test that we ran earlier gave e.g. 80 transactions per second, and we failed the node after 30 seconds, we should have about 2400 rows in that table.
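That estimate is simple arithmetic: with pgbench's default (TPC-B-like) script, each transaction inserts one row into `pgbench_history`, so the table grows by roughly *transactions per second × seconds*. A minimal sketch with a hypothetical helper name; the optional third argument adds rows that were already in the table (for instance, those left by the 10-second test, which the estimate above leaves out):

```bash
#!/usr/bin/env bash
# Hypothetical helper: rough number of rows expected in pgbench_history
# after running at a given rate (tps) for a given number of seconds,
# plus an optional count of rows that were already present.
expected_history_rows() {
  local tps="$1" seconds="$2" baseline="${3:-0}"
  echo $(( baseline + tps * seconds ))
}

# The example above: 80 transactions/second, node failed after 30 seconds
expected_history_rows 80 30
```

Since the transaction rate fluctuates, treat the result as an order of magnitude, not an exact count.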
.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Double-check that the pod has really moved

- Just to make sure the system is not bluffing!

.exercise[

- Look at which node the pod is now running on:
  ```bash
  kubectl get pod postgres-0 -o wide
  ```

]

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Re-enable the node

- Let's fix the node that we disconnected from the network

.exercise[

- SSH to the node:
  ```bash
  ssh `nodeX`
  ```

- Remove the iptables rule blocking traffic:
  ```bash
  sudo iptables -D OUTPUT 2
  ```

]

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## A few words about this PostgreSQL setup

- In a real deployment, you would want to set a password

- This can be done by creating a `secret`:
  ```
  kubectl create secret generic postgres \
          --from-literal=password=$(base64 /dev/urandom | head -c16)
  ```

- And then passing that secret to the container:
  ```yaml
  env:
  - name: POSTGRES_PASSWORD
    valueFrom:
      secretKeyRef:
        name: postgres
        key: password
  ```

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## Troubleshooting Portworx

- If we need to see what's going on with Portworx:
  ```
  PXPOD=$(kubectl -n kube-system get pod -l name=portworx -o json |
          jq -r '.items[0].metadata.name')
  kubectl -n kube-system exec $PXPOD -- /opt/pwx/bin/pxctl status
  ```

- We can also connect to Lighthouse (a web UI)

  - check the port with `kubectl -n kube-system get svc px-lighthouse`

  - connect to that port

  - the default login/password is `admin/Password1`

  - then specify `portworx-service` as the endpoint

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## Removing Portworx

- Portworx provides a storage driver

- It needs to place itself "above" the Kubelet

  (it installs itself straight on the nodes)

- To remove it, we need to do more than just deleting its Kubernetes resources

- It is done by applying a special label:
  ```
  kubectl label nodes --all px/enabled=remove --overwrite
  ```

- Then removing a bunch of local files:
  ```
  sudo chattr -i /etc/pwx/.private.json
  sudo rm -rf /etc/pwx /opt/pwx
  ```

  (on each node where Portworx was running)

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: extra-details

## Dynamic provisioning without a provider

- What if we want to use Stateful sets without a storage provider?

- We will have to create volumes manually

  (by creating Persistent Volume objects)

- These volumes will be automatically bound with matching Persistent Volume Claims

- We can use local volumes (essentially bind mounts of host directories)

- Of course, these volumes won't be available in case of node failure

- Check [this blog post](https://kubernetes.io/blog/2018/04/13/local-persistent-volumes-beta/) for more information and gotchas

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

## Acknowledgements

The Portworx installation tutorial, and the PostgreSQL example,
were inspired by [Portworx examples on Katacoda](https://katacoda.com/portworx/scenarios/),
in particular:

- [installing Portworx on Kubernetes](https://www.katacoda.com/portworx/scenarios/deploy-px-k8s)

  (with adaptations to use a loop device and an embedded key/value store)

- [persistent volumes on Kubernetes using Portworx](https://www.katacoda.com/portworx/scenarios/px-k8s-vol-basic)

  (with adaptations to specify a default Storage Class)

- [HA PostgreSQL on Kubernetes with Portworx](https://www.katacoda.com/portworx/scenarios/px-k8s-postgres-all-in-one)

  (with adaptations to use
  a Stateful Set and simplify PostgreSQL's setup)

???

:EN:- Using highly available persistent volumes
:EN:- Example: deploying a database that can withstand node outages
:FR:- Utilisation de volumes à haute disponibilité
:FR:- Exemple : déployer une base de données survivant à la défaillance d'un nœud

.debug[[k8s/portworx.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/k8s/portworx.md)]

---

class: title, self-paced

Thank you!

.debug[[shared/thankyou.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/thankyou.md)]

---

class: title, in-person

That's all, folks! <br/> Questions?

.debug[[shared/thankyou.md](https://github.com/jpetazzo/container.training/tree/2020-09-skillsmatter/slides/shared/thankyou.md)]